Although JEDEC has yet to finalize the HBM4 specification, the industry wants the new memory technology as soon as possible, as demand for high-performance GPUs for AI appears insatiable. To enable chip designers to build next-generation GPUs, Rambus has unveiled the industry's first HBM4 memory controller IP, which surpasses the capabilities of HBM4 announced to date.

Rambus's HBM4 controller not only supports the JEDEC-specified 6.4 GT/s data transfer rate for HBM4 but also has headroom to support speeds up to 10 GT/s. At 10 GT/s, a 2048-bit memory interface delivers 2.56 TB/s of bandwidth per HBM4 memory stack, compared with about 1.64 TB/s at the standard 6.4 GT/s.
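
The per-stack figures follow from multiplying the data transfer rate by the interface width. Below is a minimal sketch of that arithmetic; the helper name `stack_bandwidth_tbps` is hypothetical, and decimal units (1 TB/s = 1000 GB/s) are assumed.

```python
def stack_bandwidth_tbps(rate_gtps: float, width_bits: int = 2048) -> float:
    """Peak bandwidth of one memory stack in TB/s (decimal units assumed)."""
    # GT/s * bits per transfer / 8 bits per byte = GB/s
    gb_per_s = rate_gtps * width_bits / 8
    return gb_per_s / 1000  # GB/s -> TB/s


# Rates mentioned in the article: the JEDEC-specified rate and the Rambus headroom.
for rate in (6.4, 10.0):
    print(f"{rate} GT/s -> {stack_bandwidth_tbps(rate):.2f} TB/s per stack")
```

Passing a wider `width_bits` value (for example 8192 for four stacks) gives the aggregate figure for a multi-stack system.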

The Rambus HBM4 controller IP can be paired with third-party or customer-provided PHY solutions to create a complete HBM4 memory system.

Rambus is collaborating with industry leaders like Cadence, Samsung, and Siemens to ensure this technology integrates smoothly into the existing memory ecosystem, facilitating the transition to next-generation memory systems.

A preliminary version of JEDEC's HBM4 specification indicates that HBM4 memory will come in 4-high, 8-high, 12-high, and 16-high stack configurations using 24 Gb and 32 Gb memory layers. A 16-high stack of 32 Gb layers will provide 64 GB of capacity, allowing systems with four memory stacks to reach up to 256 GB of total memory. At the JEDEC-specified 6.4 GT/s, such a setup can achieve a peak bandwidth of about 6.55 TB/s through an 8,192-bit interface, significantly boosting performance for demanding workloads.
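
The capacity numbers can be checked the same way: layer count times layer density, converted from gigabits to gigabytes. The sketch below enumerates the stack heights and densities listed in the preliminary specification; the function name `stack_capacity_gb` and the four-stack system are illustrative assumptions.

```python
def stack_capacity_gb(layers: int, layer_gbit: int) -> float:
    """Capacity of one stack in GB, from layer count and per-layer density in Gb."""
    return layers * layer_gbit / 8  # 8 bits per byte


# Stack heights and layer densities from the preliminary HBM4 specification.
for layers in (4, 8, 12, 16):
    for layer_gbit in (24, 32):
        per_stack = stack_capacity_gb(layers, layer_gbit)
        print(f"{layers}-high x {layer_gbit} Gb: {per_stack:g} GB per stack, "
              f"{4 * per_stack:g} GB across four stacks")
```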

If someone manages to make an HBM4 memory subsystem run at 10 GT/s, then four HBM4 stacks will provide bandwidth of over 10 TB/s. Still, support for enhanced (beyond-JEDEC) speeds enabled by Rambus and memory makers is typically offered to provide headroom and ensure stable and power-efficient operation at standard data transfer rates.

"With Large Language Models (LLMs) now exceeding a trillion parameters and continuing to grow, overcoming bottlenecks in memory bandwidth and capacity is mission critical to meeting the real-time performance requirements of AI training and inference," said Neeraj Paliwal, SVP and general manager of Silicon IP at Rambus. "As the leading silicon IP provider for AI 2.0, we are bringing the industry’s first HBM4 Controller IP solution to the market to help our customers unlock breakthrough performance in their state-of-the-art processors and accelerators."

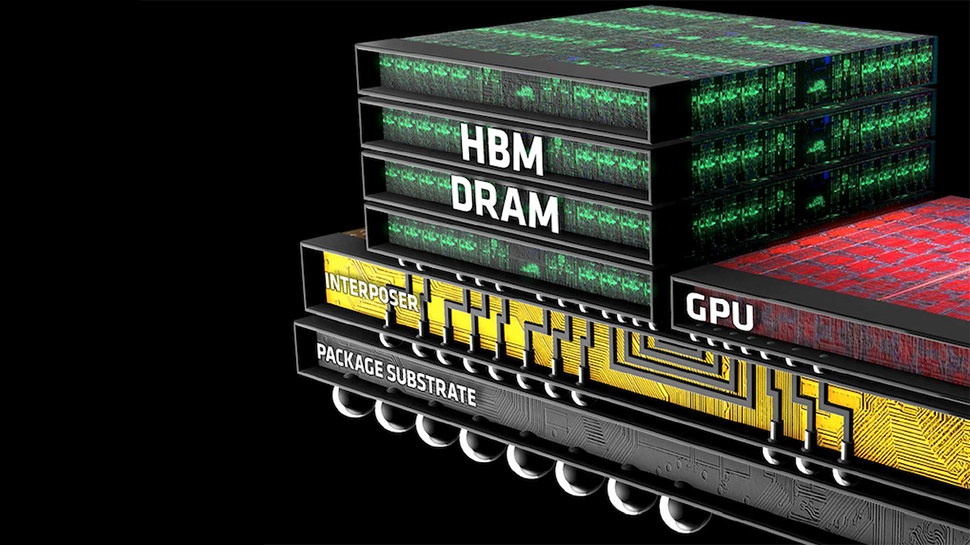

Because HBM4 will offer double the channel count per stack compared to HBM3, its 2048-bit interface will require a larger physical footprint. Interposers for HBM4 will also differ from those for HBM3/HBM3E, which will in turn affect the achievable data transfer rates.