Living in 1999 felt like standing on the edge of an event horizon. Our growing obsession with technology was spilling into an outpouring of hope, fear, angst – and even apocalyptic distress in some quarters. The dot-com bubble was swelling as the World Wide Web began spreading like a Californian wildfire. The first cell phones had been making the world feel much more connected. Let's not forget the anxieties over Y2K that were escalating into panic as we approached the bookend of the century.

But as this progress was catching the imagination of so many, artificial intelligence (AI) was in a sorry state – only beginning to emerge from a debilitating second 'AI winter' which ran from 1987 to 1993.

Some argue this thawing process lasted as long as the mid-2000s. It was, indeed, a bleak period for AI research; it was a field that "for decades has overpromised and underdelivered", according to a report in the New York Times (NYT) from 2005.

Funding and interest were scarce, especially compared to their peak in the 1980s, with previously thriving conferences whittled down to pockets of diehards. In cinema, however, stories about AI were flourishing – with the likes of Terminator 2: Judgment Day (1991) and Ghost in the Shell (1995) – building on decades of compelling feature films like Blade Runner (1982).

It was during this time that the Wachowskis penned the script for The Matrix – a groundbreaking tour de force that threw up a mirror to humanity's increasing reliance on machines and challenged our understanding of reality.

It's a timeless classic, and its impact since its March 31, 1999 release has been sprawling. But the chilling plot at its heart – namely the rise of an artificial general intelligence (AGI) network that enslaves humanity – has remained consigned to fiction rather than being considered a serious scientific possibility. With the heat of the spotlight now on AI, however, ideas like the Wachowskis' are beginning to feel closer to home than we had anticipated.

AI has become not just the scientific, but the cultural zeitgeist – with large language models (LLMs) and the neural nets that power them cannonballing into the public arena. That dry well of research funding is now overflowing, and corporations see massive commercial appeal in AI. There's a growing chorus of voices, too, that feel an AGI agent is on the horizon.

People like the veteran computer scientist Ray Kurzweil have long anticipated that humanity would reach the technological singularity (where an AI agent is just as smart as a human). Kurzweil outlined his thesis in 'The Singularity is Near' (2005) – with a projection for 2029.

Disciples like Ben Goertzel have claimed it could come as soon as 2027. Nvidia's CEO Jensen Huang says it's "five years away", joining the likes of OpenAI CEO Sam Altman and others in predicting an aggressive and exponential escalation. Should these predictions prove true, they will also introduce a whole cluster bomb of ethical, moral, and existential anxieties that we will have to confront. So as The Matrix turns 25, maybe it wasn't so far-fetched after all?

Stepping into the Matrix

Sitting on tattered armchairs in front of an old boxy television in the heart of a wasteland, Morpheus shows Neo the "real world" for the first time. Here, he fills us in on how this dystopian vision of the future came to be. We're at the summit of a lengthy yet compelling monologue that began many scenes earlier with questions Morpheus poses to Neo, and therefore us, progressing to the choice Neo must make – and crescendoing into the full tale of humanity's downfall and the rise of the machines.

Much like we're now congratulating ourselves for birthing advanced AI systems that are more sophisticated than anything we have ever seen, humanity in The Matrix was united in its hubris as it gave birth to AI. Giving machines that spark of life – the ability to think and act with agency – backfired. And after a series of political and social shifts, the machines retreated to Mesopotamia, known as the cradle of human civilization, and built the first machine city, called 01.

Here, they replicated and evolved – developing smarter and better AI systems. When humanity's economies began to fall, they struck the machine civilization with nuclear weapons to regain control. Because the machines were not as vulnerable to heat and radiation, the strike failed and instead represented the first stone thrown in the 'Machine War'.

Unlike in our world, the machines in The Matrix were solar-powered and harvested their energy from the sun. So humans decided to darken the sky, cutting the machines off from sunlight, and the machines turned to an alternative power source – namely enslaving humans and draining their innate energy. They continued to fight until human civilization was enslaved, with the survivors placed into pods and connected to the Matrix – an advanced virtual reality (VR) simulation intended as an instrument for control – while their thermal, bio-electric, and kinetic energy was harvested to sustain the machines.

"This can't be real," Neo tells Morpheus. It's a reaction we would all expect to have when confronted with such an outlandish truth. But, as Morpheus retorts: "What is real?' Using AI as a springboard, the film delves into several mind-bending areas including the nature of our reality – and the power of machines to influence and control how we perceive the environment around us. If you can touch, smell, or taste something, then why would it not be real?

Strip away the barren dystopia, the self-aware AI, and strange pods that atrophied humans occupy like embryos in a womb, and you can see parallels between the computer program and the world around us today.

When the film was released, our reliance on machines was growing – but not final. Much of our understanding of the world today, however, is filtered through the prism of digital platforms infused with AI systems like machine learning. What we know, what we watch, what we learn, how we live, how we socialize online – all of these modern human experiences are influenced in some way by algorithms that direct us in subtle but meaningful ways. Our energy isn't harvested, but our data is, and we continue to feed the machine with every tap and click.

Intriguingly, as Agent Smith tells Morpheus in the iconic interrogation scene – a revelatory moment in which the computer program betrays its emotions – the first version of the Matrix was not a world that closely resembled society as we knew it in 1999. Instead, it was a paradise in which humans were happy and free of suffering.

The trouble, however, is that this version of the Matrix didn't stick, and people saw through the ruse – rendering it redundant. That's when the machine race developed version 2.0. It seemed, as Smith lamented, that humans speak in the language of suffering and misery – and without these qualities, the human condition is unrecognizable.

AI's 20th-century rollercoaster ride

By every metric, AI is experiencing a monumental boom, especially when you look at where the field once was. Startup funding grew more than 100-fold between 2011 and 2021, from $670 million to $72 billion, according to Statista. The biggest jump came during the COVID-19 pandemic, with funding in 2021 more than doubling from $35 billion the previous year. This has since tapered off – falling to $40 billion in 2023 – but the money pouring into research and development (R&D) continues to surge.

But things weren't always so rosy. In fact, in the early 1990s, during the second AI winter, the term "artificial intelligence" was almost taboo, according to Klondike, and was replaced with other terms such as "advanced computing" instead. This is simply one turbulent period in the field's nearly 75-year history, which started with Alan Turing in 1950, when he pondered whether a machine could imitate human intelligence in his paper 'Computing Machinery and Intelligence'.

In the years that followed, a lot of pioneering research was conducted – but this early momentum fell by the wayside during the first AI winter between 1974 and 1980, when issues including limited computing power prevented the field from advancing, and organizations like DARPA and national governments pulled funding from research projects.

Another boom in the 1980s, fuelled by the revival of neural networks, then collapsed once more into a bust – with the second winter spanning six years up to 1993 and thawing well into the 21st century. In the years that followed, scientists around the world slowly made progress once more as funding restarted and AI caught people's imagination again. But the research field itself was siloed, fragmented and disconnected, according to Pamela McCorduck writing in 'Machines Who Think' (2004). Computer scientists were focusing on competing approaches to solving niche problems.

As Klondike highlights, they also used terms such as "advanced computing" to label their work – where we may now refer to the tools and systems they built as early precursors to the AI systems we use today.

The AI sparks that fuelled the world of The Matrix

It wasn't until 1995 – four years before The Matrix hit theaters – that the needle in AI research really moved in a significant way. But you could already see signs the winter was thawing, especially with the creation of the Loebner Prize – an annual competition created by Hugh Loebner in 1990.

Loebner was "an American millionaire who had given a lot of money" and "who became interested in the Turing test," according to the recipient of the prize in 1997, the late British computer scientist Yorick Wilks, speaking in an interview in 2019. Although the prize wasn't particularly large – $2,000 initially – it showed that interest in building AI agents was expanding, and that it was being taken seriously.

The first major development of the decade came when computer scientist Richard Wallace developed the chatbot ALICE – which stood for artificial linguistic internet computer entity. Inspired by the famous ELIZA chatbot of the 1960s – which was the world's first major chatbot – ALICE, also known as Alicebot, was a natural language processing system that applied heuristic pattern matching to conversations with a human in order to provide responses. Wallace went on to win the Loebner Prize in 2000, 2001 and 2004 for creating and advancing this system, and a few years ago the New Yorker reported ALICE was even the inspiration for the critically acclaimed 2013 sci-fi hit Her, according to director Spike Jonze.

Then, in 1997, AI hit a series of major milestones, starting with a showdown starring the reigning world chess champion and grandmaster Garry Kasparov, who in May that year went head to head in New York with the challenger of his life: a computing agent called 'Deep Blue' created by IBM. This was actually the second time Kasparov faced Deep Blue. He had beaten the first version of the system in Philadelphia the year before, but Deep Blue narrowly won the rematch by 3.5 to 2.5.

"This highly publicized match was the first time a reigning world chess champion lost to a computer and served as a huge step towards an artificially intelligent decision making program," wrote Rockwell Anyoha in a Harvard blog.

It did something "no machine had ever done before", according to IBM, delivering its victory through "brute force computing power" and for the entire world to see – as it was indeed broadcast far and wide. It used 32 processors to evaluate 200 million chess positions per second. "I have to pay tribute," Kasparov said. "The computer is far stronger than anybody expected."

Another major milestone was the creation of NaturallySpeaking by Dragon Systems in June 1997. This speech recognition software was the first universally accessible and affordable computer dictation system for PCs – if $695 (or $1,350 today) is your idea of affordable, that is. "This is only the first step, we have to do a lot more, but what we're building toward is to humanizing computers, make them very natural to use, so yes, even more people can use them," said CEO Jim Baker in a news report from the time. Dragon licensed the software to big names including Microsoft and IBM, and it was later integrated into the Windows operating system, signaling much wider adoption.

A year later, researchers at MIT released Kismet – a "disembodied head with gremlin-like features" that learns about its environment "like a baby" and relies entirely "upon its benevolent carers to help it find out about the world", according to Duncan Graham-Rowe writing in New Scientist at the time. Spearheaded by Cynthia Breazeal, this creation was one of the projects that fuelled MIT's AI research and secured its future. The machine could interact with humans, and simulated emotions by changing its facial expression, its voice and its movements.

This resurgence also extended to the language people used. The taboo around "artificial intelligence" was disintegrating and terms like "intelligent agents" began slipping their way into the lexicon of the time, wrote McCorduck in 'Machines Who Think'. Robotics, intelligent agents, machines surpassing the wit of man, and more: it was these ingredients that, in turn, fed into the thinking behind The Matrix and the thesis at its heart.

A critical flop – but a hit with audiences

When The Matrix hit theaters, there was a real dichotomy between movie-goers and critics. It's fair to say that audiences loved the spectacle – with the film taking $150 million at the US box office – while a string of publications stood in line to lambast the script and the ideas in the movie. "It's Special Effects 10, Screenplay 0," wrote Todd McCarthy in his review in Variety. The Miami Herald rated it two-and-a-half stars out of five.

Chronicle senior writer Bob Graham praised Joe Pantoliano (who plays Cypher) in his SFGate review, "but even he is eventually swamped by the hopeless muddle that 'The Matrix' becomes." Critics wondered why people were so desperate to see a movie that had been so widely slated – and the Guardian pondered whether it was sci-fi fans "driven to a state of near-unbearable anticipation by endless hyping of The Phantom Menace, ready to gorge themselves on pretty much any computer graphics feast that came along?"

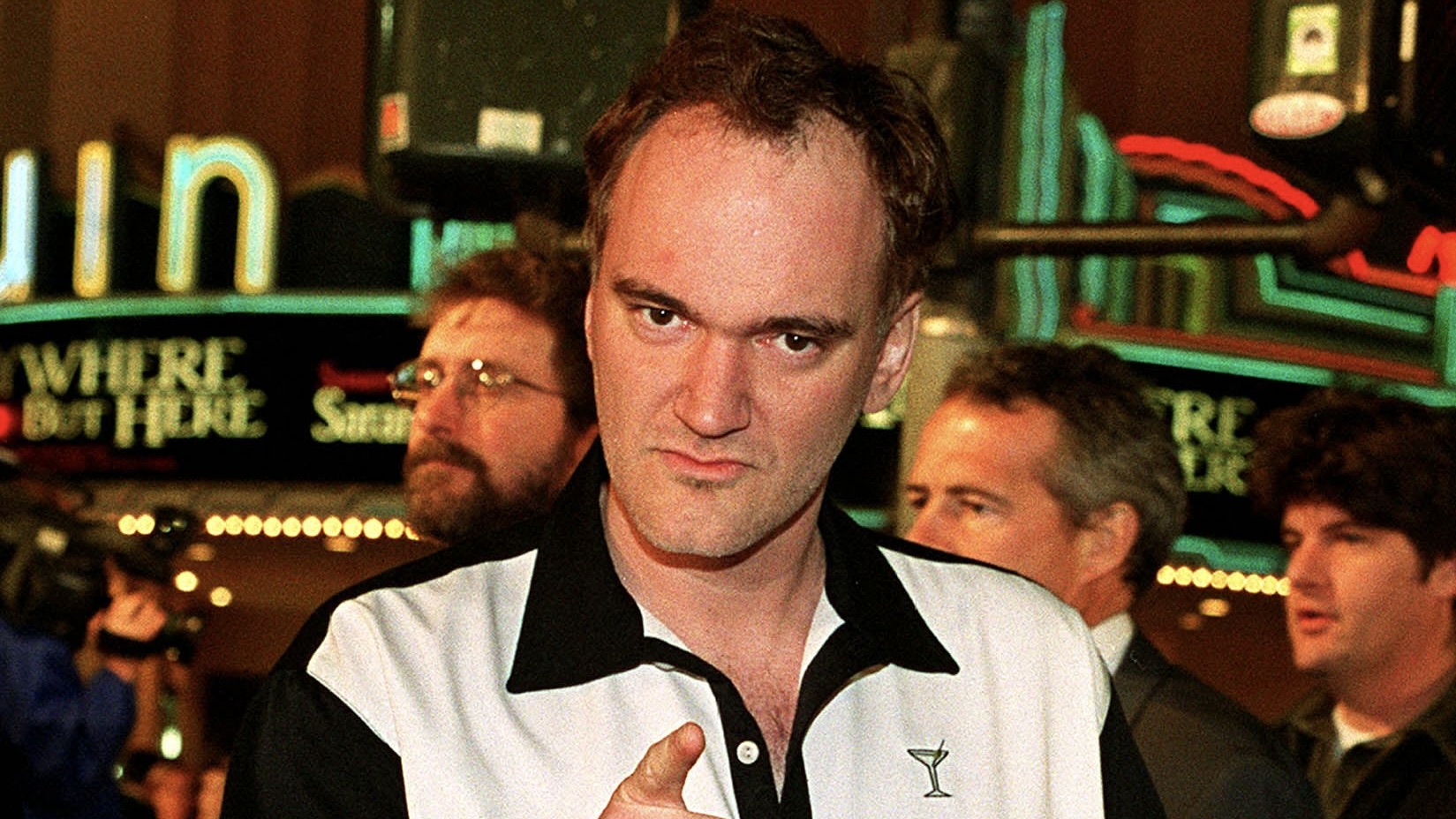

Veteran film director Quentin Tarantino, however, had an experience closer to that of the average audience member, which he shared in an interview with Amy Nicholson. "I remember the place was jam-packed and there was a real electricity in the air – it was really exciting," he said, speaking of his outing to watch the movie on the Friday night after it was released.

"Then this thought hit me, that was really kind of profound, and that was: it's easy to talk about 'The Matrix' now because we know the secret of 'The Matrix', but they didn't tell you any of that in any of the promotions — in any of the big movie trailer or any of the TV spots. So we were really excited about this movie, but we really didn't know what we were going to see. We didn't really know what to expect; we did not know the mythology at all – I mean, at all. We had to discover that."

Living in our very own Matrix

The AI boom of today is largely centered around an old technology known as neural networks, despite incredible advancements in generative AI tools, namely large language models (LLMs), that have captured the imagination of businesses and people alike.

One of the most interesting developments is the number of people who are becoming increasingly convinced that these AI agents are conscious, or have agency, and can think – or even feel – for themselves. One startling example is a former Google engineer who claimed a chatbot the company was working on was sentient. Although this is widely understood not to be the case, it's a sign of the direction in which we're heading.

Elsewhere, despite impressive systems that can generate images, and now video – thanks to OpenAI's Sora – these technologies all still rely on the principles of neural networks, which many in the field don't believe will lead to human-level AGI, let alone a superintelligence that can modify itself and build even more intelligent agents autonomously. The answer, according to Databricks CTO Matei Zaharia, is a compound AI system that uses LLMs as one component. It's an approach backed by Goertzel, the veteran computer scientist who is working on his own version of this compound system – with the aim of creating a distributed open source AGI agent within the next few years. He suggests that humanity could build an AGI agent as soon as 2027.

There are so many reasons why The Matrix has remained relevant – from the fact it was a visual feast to the rich and layered parallels one can draw between its world and ours.

Much of the backstory hasn't been a part of that conversation in the 25 years since its cinematic release. But as we look to the future, we can begin to see how a similar world might be unfolding.

We know, for example, that the digital realm we occupy – largely through social media channels – is influencing people in harmful ways. AI has also been a force for tragedy around the world, with Amnesty International claiming Facebook's algorithms played a role in pouring petrol on ethnic violence in Myanmar. And although the idea is not generally taken seriously, companies like Meta are attempting to build VR-powered alternate realities known as the metaverse.

With generative AI now a proliferating technology, groundbreaking research found recently that more than half (57.1%) of the internet comprises AI-generated content.

Throw increasingly capable tools like Midjourney and now Sora into the mix, and to what extent can we know what is real and what is generated by machines – especially if the output looks so lifelike and is indistinguishable from human-generated content? The lack of sentience in the billions of machines around us is an obvious divergence from The Matrix. But that doesn't mean our own version of The Matrix has the potential to be any less manipulative.
