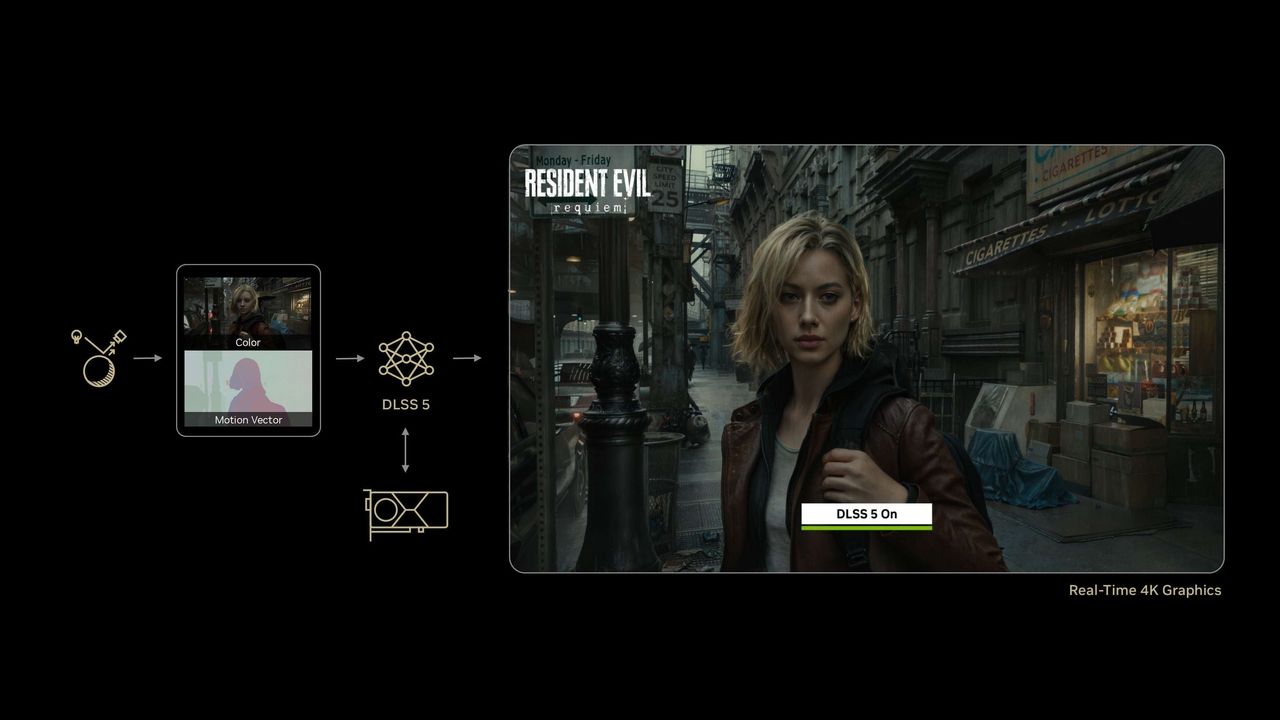

Neural rendering—the use of AI models to create pixels—is already a familiar concept in real-time graphics. When you’re using DLSS upscaling or frame generation, for example, many of the pixels you see are already generated rather than natively shaded, and those extra pixels and frames come at a surprisingly low computational cost via the matrix math acceleration of Nvidia’s Tensor Cores.

Given that almost magical cost-to-benefit ratio, research is unsurprisingly well under way to expand generative AI techniques beyond relatively transparent applications like upscaling and frame generation to replace portions of the traditional graphics pipeline as we know it today, and possibly even the pipeline in its entirety.

Nvidia’s DLSS 5 reveal at GTC is a startling indication of just how close we are to that future. The RT cores that debuted in Turing nearly eight years ago have brought much more lifelike lighting effects into the realm of real-time graphics, but natively shading even a subset of the pixels in a frame to a Hollywood polish remains well beyond the reach of today’s graphics hardware. Even the 575W, ~750mm² RTX 5090 isn’t up to the task, and further scaling-up of GPU die sizes and power envelopes in pursuit of that lifelike ideal would only make it less accessible. And given AI’s crowding-out of leading-edge fab capacity, next-gen gaming GPU silicon seems less and less likely to arrive any time soon.

DLSS 5 is the most prominent example so far of how neural rendering offers an alternate way forward compared to brute-force increases in compute resources. Its AI model is trained to infer how certain complex features of game scenes like characters, skin, hair, and environmental lighting “should” look in the real world given certain inputs from the game engine (including, but not limited to, the color buffer and motion vectors) in combination with an input frame.

The DLSS 5 model then uses this input data and its semantic understanding of parts of a scene to bring its appearance closer to how it might look in reality while still respecting the artistic intent embedded in environments and character models. Because it's deeply tied to the underlying game engine and assets, DLSS 5’s output is consistent and predictable in ways that the prompt-driven and iterative workflow of generative AI imagery and video distinctly aren’t.

We had an opportunity to preview DLSS 5 in five games at GTC, and for modern games with assets built to match, DLSS 5 unquestionably improved the image quality and fidelity of the small group of titles we saw. (For a group of high-resolution side-by-side comparisons that you can really pixel-peep, check out Nvidia's launch article.)

Even in games that already feature real-time ray-traced effects, like Hogwarts Legacy, flipping DLSS 5 on creates even more convincing lighting effects for environments and characters alike. Hogwarts students standing in front of massive sunlit windows are rendered with convincing rim lighting around the edges of their hair and clothing that’s absent with DLSS 5 off. Improved ambient occlusion better darkens every fold of students’ robes and every nook, cranny, and corner of Hogwarts itself. Even everyday objects like couches look better situated in scenes thanks to more accurate shadows underneath.

I’ve also spent lots of time recently looking at Assassin’s Creed Shadows as I’ve begun a new round of benchmarking for our GPU Hierarchy, and it’s a game where RT plays a huge part in creating a rich and convincing-looking world. Even with Shadows’ already impressive RT implementation, DLSS 5 makes the light and shadow playing across the game’s forested vistas appear even closer to life, and it straightforwardly corrects minor rendering errors like a character’s robe not properly shadowing their leg in a crouch.

Those improvements carry over to games like Starfield that never implemented ray tracing to begin with. Flipping on DLSS 5 adds considerably greater sophistication to the appearance of environments and objects (and characters’ faces, but more on that in a second). That experience also holds in The Elder Scrolls IV: Oblivion Remastered, where reflections on water become more convincing and environmental features like the spaces under wooden docks and the arches and filigrees of bridges and buildings all look

DLSS 5 is also meant to better replicate how light interacts with human hair and skin, and nowhere are its enhancements more dramatic, for better or for worse, than with human faces.

Nvidia says that we’re not used to seeing faces of this fidelity in real-time rendering, and sometimes, the effect is breathtaking. The visages of the characters in Nvidia’s Zorah demo went from looking like “a good video game” to “incredibly lifelike.” And the flatly lit and (frankly) dead-eyed characters of Starfield practically come alive with DLSS 5 enabled, transforming into something resembling actual humans rather than aliens wearing human skin suits.

But in Oblivion Remastered, which uses character models that still exhibit some of the awkwardness of the 2006 original, the results are more mixed. It’s incredible that DLSS 5 can simply infer that flowing hair should create shadows that the game’s native lighting model completely fails to cast, but when that same character’s facial features are comically exaggerated, rendering their skin and hair with cinematic detail and precision can be more off-putting than immersive. The uncanny valley becomes the uncanny Grand Canyon.

And that’s where DLSS 5 and its reception move into the realm of the philosophical rather than the purely technical. Real-time graphics as a field has relentlessly pursued more photorealistic rendering ever since the advent of the first GPUs, and working in the wide gap between real life and the capabilities of our tech to reproduce it has required considerable artistic skill, taste, and judgment to partially bridge that gap.

If DLSS 5 is going to drastically narrow that gap, carelessly applying it has the potential to produce results that aren’t consistent with a game’s creative direction, and the inflamed community response to the results of some of Nvidia’s demos so far suggests that the company and its game dev partners will need to tread carefully to avoid those pitfalls. Assuming a developer includes DLSS 5 in a title using the existing Streamline SDK, Nvidia says that the model offers controls for color grading, intensity, and masking to fine-tune its overall effect on a game's appearance.

Of course, DLSS 5 will be toggle-able just like upscaling and frame gen, so if you’re not a fan of its implementation in a particular game, you can just leave it off entirely. And although the company acknowledges that the model could certainly be shoehorned into games by enterprising modders, the results thereafter are purely those folks’ responsibility, not devs' or Nvidia's.

The final open question for DLSS 5 regards its hardware requirements. The demos we saw were all running on a PC featuring dual RTX 5090s, one to run the game itself and one dedicated to accelerating the model. That’s a massive amount of compute, but the company said it hasn’t begun performance optimizations on the model yet, so we’ll have to withhold judgment on its hardware requirements until later. Nvidia also didn’t offer any indication of which of its RTX GPU architectures would be compatible with DLSS 5.

All told, this remains an early look, but even at this stage, we’re excited and cautiously optimistic about the changes that expanded uses of neural rendering hold for gaming graphics. The fact that DLSS 5 is an AI model means that it can be continually fine-tuned and improved, just as DLSS upscaling has progressed in its capabilities over time.

Given that fact, Nvidia will doubtless continue to work internally and with game studios to refine DLSS 5’s outputs and requirements as the tech continues to be developed ahead of its launch this fall. The company claims that, so far, over a dozen games will support DLSS 5 at launch, and given the widespread adoption of DLSS tech generally, that number is sure to grow by leaps and bounds. From what we’ve seen so far, we can’t wait to get our hands on it and give it a spin in a wider range of titles.