A Democratic senator has introduced a bill that would prevent the military from using AI to spy on Americans or launch kill strikes without human input.

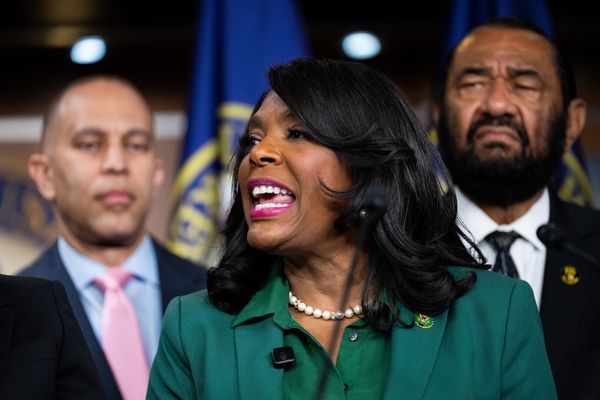

The AI Guardrails Act, introduced on Tuesday, comes from Sen. Elissa Slotkin of Michigan, who previously angered the Trump administration by taking part in a video encouraging members of the military to refuse “illegal orders.”

Slotkin, a former CIA analyst, argues the bill is necessary, given the rapid escalation of AI use in wars like the Iran conflict, as well as the dearth of regulations on the quickly evolving technology.

“If we were a healthier country politically right now, we would be putting up left and right limits around the use of AI,” she said during a recent Armed Services Committee hearing. “We have really no guidelines for you all, really no law. That’s not your fault. That’s on us, on a bipartisan basis.”

The bill would also ban AI-based decisions to launch a nuclear strike.

The Pentagon, which recently saw negotiations with AI firm Anthropic break down over ethics questions around autonomous weapons, insists it already complies with the guardrails the act is seeking.

“The Department of War has no interest in using AI to conduct mass surveillance of Americans (which is illegal) nor do we want to use AI to develop autonomous weapons that operate without human involvement,” Defense Department official Sean Parnell said last month in a statement on X.

As The Independent has reported, the U.S. military has made extensive use of AI in its Iran campaign.

A key tool has been Palantir’s Maven system, which pairs with Anthropic’s Claude large language model to scrutinize intelligence, mapping data, and other systems, giving commanders real-time information about battles and helping generate potential targets.

“These systems help us sift through vast amounts of data in seconds, so our leaders can cut through the noise and make smarter decisions faster than the enemy can react,” Central Command’s Adm. Brad Cooper said last week. “Humans will always make final decisions on what to shoot and what not to shoot, and when to shoot. ... But advanced AI tools can turn processes that used to take hours and sometimes even days into seconds.”

Other major Silicon Valley players, including OpenAI, Google, Elon Musk’s xAI, and Anduril, all either already provide or have agreements to supply the U.S. with various defense-related AI systems.

U.S. targeting processes have come under heavy scrutiny after a likely American strike leveled a girls' primary school in Iran, killing at least 175 people.

In the run-up to the Iran war, the Pentagon sparred with Anthropic over contract terms governing how the company's AI models would be incorporated into defense systems.

Anthropic alleges the Defense Department wanted unrestricted use of its AIs, and the company sought assurances its products wouldn’t be used for mass surveillance or fully autonomous weapons. The Pentagon says it assured the company it would only use Anthropic models for lawful purposes.

Anthropic ultimately concluded it “cannot in good conscience” accept such terms, CEO Dario Amodei said last month, prompting Defense Secretary Pete Hegseth to declare the company a supply chain risk. The Trump administration has directed federal agencies to stop using Anthropic technology within six months.

Anthropic has sued to challenge the risk designation, arguing the administration is punishing the company on ideological grounds.
