I’ve spent a lot of time testing every AI video tool that hits the market, from OpenAI’s Sora to the latest Runway updates. Usually, the pitch is the same: "Type a prompt, get a movie."

But Netflix just quietly released a research model called VOID, and it’s doing something completely different. Instead of building new worlds and scenes from scratch, it rewrites the one you’ve already filmed — and it’s so good at it, you might never trust a "real" video again.

What is Netflix VOID?

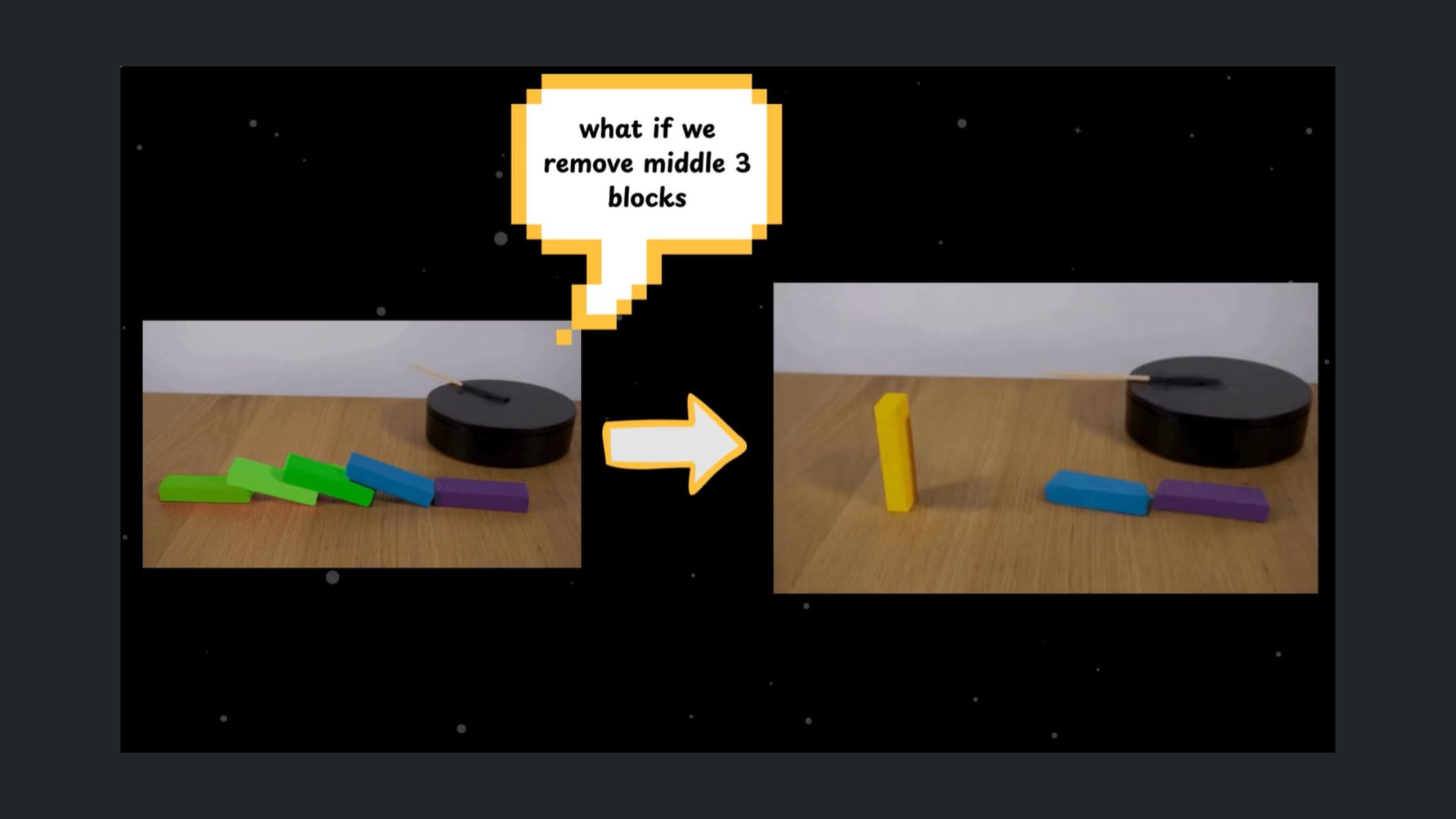

VOID stands for Video Object and Interaction Deletion. At first glance, it looks like a high-end version of the object-erasing tools on phones like the Pixel 8 (where it's called "Magic Eraser") or the Galaxy S24. You select an object, and it disappears.

But here’s where it gets wild: VOID understands physics and causality. In other words, while most editing tools just "patch" the hole left behind with background textures, VOID actually rewrites the logic of the scene to account for the missing object.

Several demos on GitHub show what the model can do:

- The Guitar Test: In a research demo, a person holding a guitar is deleted. In most other tools, the guitar would just float or vanish. VOID realizes the guitar is no longer supported, so it generates frames where it falls naturally to the ground.

- The Crash Test: Remove one car from a head-on collision, and VOID doesn't leave a ghost-impact of fire and smoke. It "re-imagines" the path of the remaining car as if the accident never happened — turning a wreck into a peaceful drive down an empty road.

Why this is the "end of the reshoot"

For a company like Netflix, this points to a massive cost saving in film and TV production. Think about the infamous "Game of Thrones" Starbucks cup moment. Usually, fixing that kind of mistake requires expensive frame-by-frame digital surgery.

With VOID, a producer could simply remove the unwanted object and let the AI realistically simulate what should happen next — whether that’s water splashing, dust settling or nothing at all.

It goes beyond small fixes, too. Instead of bringing a 100-person crew back for a reshoot, the AI could correct mistakes after filming wraps. It could even change a story detail by removing a key object and recalculating the scene so everything still looks natural.

Can you try it?

The most surprising part of this release is that Netflix open-sourced it. You can find the model right now on Hugging Face (under an Apache 2.0 license).

However, don't expect to run this on your MacBook Air. VOID is a beast. It requires a GPU with at least 40GB of VRAM (think NVIDIA A100 or H100) to run inference comfortably. Plus, it's built on a 5-billion-parameter version of CogVideoX and uses a custom "quadmask" system to tell the AI which parts of the scene need their physics recalculated.
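
To make the "quadmask" idea a bit more concrete, here is a minimal sketch of what a four-level mask could look like. This is not Netflix's code: the function name, the label names and the pixel values are all assumptions for illustration, and the real VOID encoding may differ. The idea is simply that one mask marks the object you want gone, another marks whatever that object was affecting, and the two get merged into a single map with four possible values per pixel.

```python
from typing import List

# Hypothetical label values for illustration; the real VOID encoding may differ.
REMOVE = 0      # pixels belonging to the object being deleted
OVERLAP = 63    # pixels that are both part of the object and the affected region
AFFECTED = 127  # pixels whose motion has to be re-simulated (e.g. the guitar)
KEEP = 255      # background that should stay untouched

Mask = List[List[int]]


def build_quadmask(object_mask: Mask, affected_mask: Mask) -> Mask:
    """Merge two binary masks (1 = inside, 0 = outside) into one four-level mask."""
    if len(object_mask) != len(affected_mask):
        raise ValueError("masks must have the same height")
    quad: Mask = []
    for obj_row, aff_row in zip(object_mask, affected_mask):
        if len(obj_row) != len(aff_row):
            raise ValueError("masks must have the same width")
        row = []
        for obj, aff in zip(obj_row, aff_row):
            if obj and aff:
                row.append(OVERLAP)
            elif obj:
                row.append(REMOVE)
            elif aff:
                row.append(AFFECTED)
            else:
                row.append(KEEP)
        quad.append(row)
    return quad


# A one-row toy frame: the "person" covers the first two pixels,
# the "guitar" covers the second and third.
person = [[1, 1, 0, 0]]
guitar = [[0, 1, 1, 0]]
quadmask = build_quadmask(person, guitar)
```

In the guitar demo, the person would be the object to remove and the guitar would be the affected region, which is how the model would know the guitar needs to fall rather than simply get painted over.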

The takeaway

The “visual receipt” used to be the ultimate proof. Now it’s starting to lose its power. Netflix has introduced a tool that can rewrite real footage so seamlessly that the edited version can pass for the original.

At the same time, AI “slop” is getting more convincing than ever — flooding the internet with content that feels authentic but isn’t. The result looks like a world where seeing something no longer means you can trust it. We’ve officially entered the era of editable reality.