The other day I got a text from a developer friend, and we chatted for a minute about how AI launches have started to feel predictable: bigger models, better benchmarks and incremental upgrades that don't always change how these tools actually work.

My friend said, "I just want newer versions of the smaller models!" If you feel the same way, OpenAI's latest release breaks the pattern. Yesterday, OpenAI introduced GPT-5.4 mini and GPT-5.4 nano, two new models designed to be faster, more efficient and better suited to high-volume tasks. On the surface, they might sound like scaled-down versions of something bigger.

In reality, though, they point to a much bigger shift: the focus is moving from raw power to speed. Google made a similar move recently with Gemini 3 Flash-Lite, a smaller model with improved speed.

Move over, power: users want speed

GPT-5.4 mini is a significant upgrade over GPT-5 mini, with improvements across coding, reasoning, multimodal understanding and tool use, all while running more than twice as fast.

It also approaches GPT-5.4 on several evaluations, including coding-focused benchmarks like SWE-Bench and OSWorld-Verified. For those keeping track, Anthropic's Opus 4.6 still tops the benchmarks.

But the combination of near-flagship performance and much faster response times is where things get interesting.

That's because, for most people, the best AI isn't the smartest one. That may sound surprising, but most AI users want a model that responds instantly and fits into their workflow. Think of it this way: you don't need a rocket scientist to help with a summary or a social media content calendar. For tasks like these, a model of average intelligence that gets the job done fast does more for productivity.

That's the difference between something you try once and something you actually use every day.

The real shift is happening behind the scenes

How these models are meant to be used is already changing the trajectory of AI. The key idea is "subagents": smaller models like GPT-5.4 mini running in parallel, each handling a specific task while a larger model oversees the bigger picture.

In other words, instead of one model doing everything, AI systems are starting to look more like teams. A powerful model handles planning and coordination, smaller models execute individual tasks quickly, and multiple processes run at the same time in the background.
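To make the "team" idea concrete, here is a minimal Python sketch of the fan-out pattern described above, assuming a planner that splits a goal into independent subtasks and small workers that each handle one. Everything in it is hypothetical: `plan_subtasks`, `subagent` and `combine` are stand-in functions, not OpenAI API calls, and `asyncio.sleep` simply simulates a short model call.

```python
import asyncio

# Everything here is a hypothetical stand-in: no real model or API is called.

def plan_subtasks(goal: str) -> list[str]:
    """Stand-in for the larger 'planner' model: split a goal into independent subtasks."""
    return [f"{goal} (part {i})" for i in range(1, 4)]

async def subagent(task: str) -> str:
    """Stand-in for a small, fast worker model that handles one narrow task."""
    await asyncio.sleep(0.1)  # simulate a short model call
    return f"done: {task}"

def combine(goal: str, results: list[str]) -> str:
    """Stand-in for the larger model merging the workers' outputs."""
    return f"{goal} -> " + "; ".join(results)

async def run(goal: str) -> str:
    subtasks = plan_subtasks(goal)
    # Fan out: every subagent runs concurrently instead of one after another.
    # asyncio.gather returns results in the same order as the subtasks.
    results = await asyncio.gather(*(subagent(t) for t in subtasks))
    return combine(goal, list(results))

if __name__ == "__main__":
    print(asyncio.run(run("summarize inbox")))
```

Because `asyncio.gather` runs the three simulated subagent calls concurrently, the whole run should take roughly as long as one call rather than three. In a real system, the planner and combiner would be calls to a larger model and each subagent a call to a smaller one; that wiring is deliberately left out of this sketch.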

It's a more efficient way to work, and it's how many modern AI tools are already evolving. Yet you may never use these models directly; most people won't ever choose GPT-5.4 mini or nano from a dropdown.

But they’ll absolutely notice the impact. These smaller models are designed to power:

- faster responses inside apps

- real-time assistants that don’t lag

- background tasks like summarizing, ranking and extracting data

- AI tools that feel more responsive and less like they’re “thinking”

GPT-5.4 nano, in particular, is built for high-throughput tasks: the kind of invisible work that supports everything from search results to smart features inside apps.

For a long time, AI development focused on making a single model as powerful as possible. That's starting to change: we're seeing a shift toward systems where different models handle different parts of a task, all working together at once.

That leads to faster outputs, more consistent performance and tools that feel smoother and more reliable. Essentially, AI is becoming less about one big brain and more about coordinated systems that get things done faster.

Bottom line

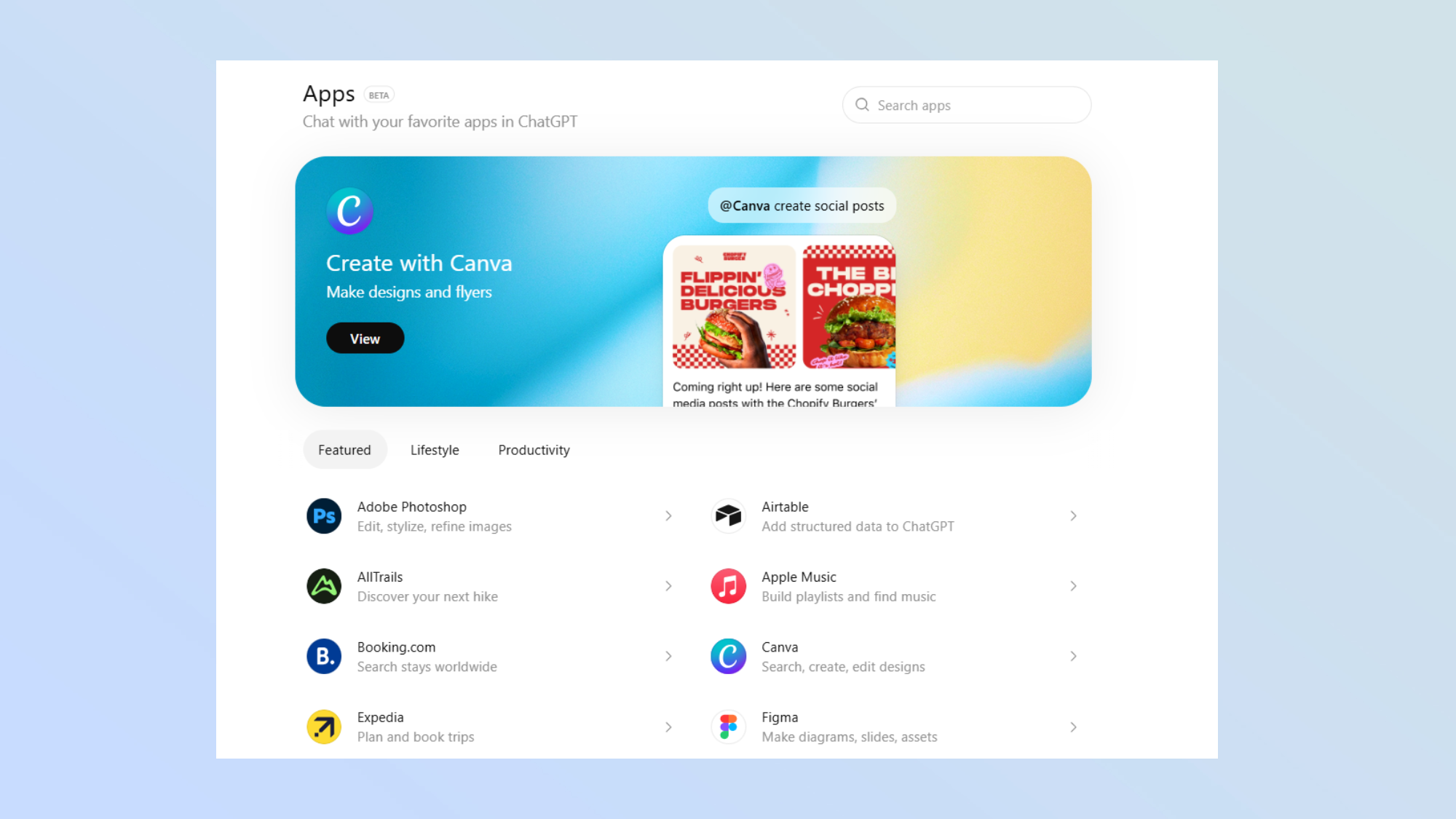

The new models are available in the dropdown menu now, so give them a try and see what you think. GPT-5.4 mini and nano are more efficient, and they're poised to become more widely distributed and increasingly invisible.

And as these models start powering the tools people already use, the biggest change won't be what AI can do. It'll be how seamlessly AI does it, to the point that you won't even notice.
