When Microsoft revealed the first Copilot+ laptops, all running Qualcomm Snapdragon X Elite processors, it wasn't just a slap in the face to AMD and Intel — Nvidia also got snubbed. Because Nvidia is the biggest name in the AI space right now, at least from the hardware side of things, many wondered why Copilot wasn't also able to run on powerful GPUs. Wonder no longer, as Nvidia announced at Computex 2024 that it's collaborating with Microsoft to bring the Copilot runtime to GPUs sometime later this year.

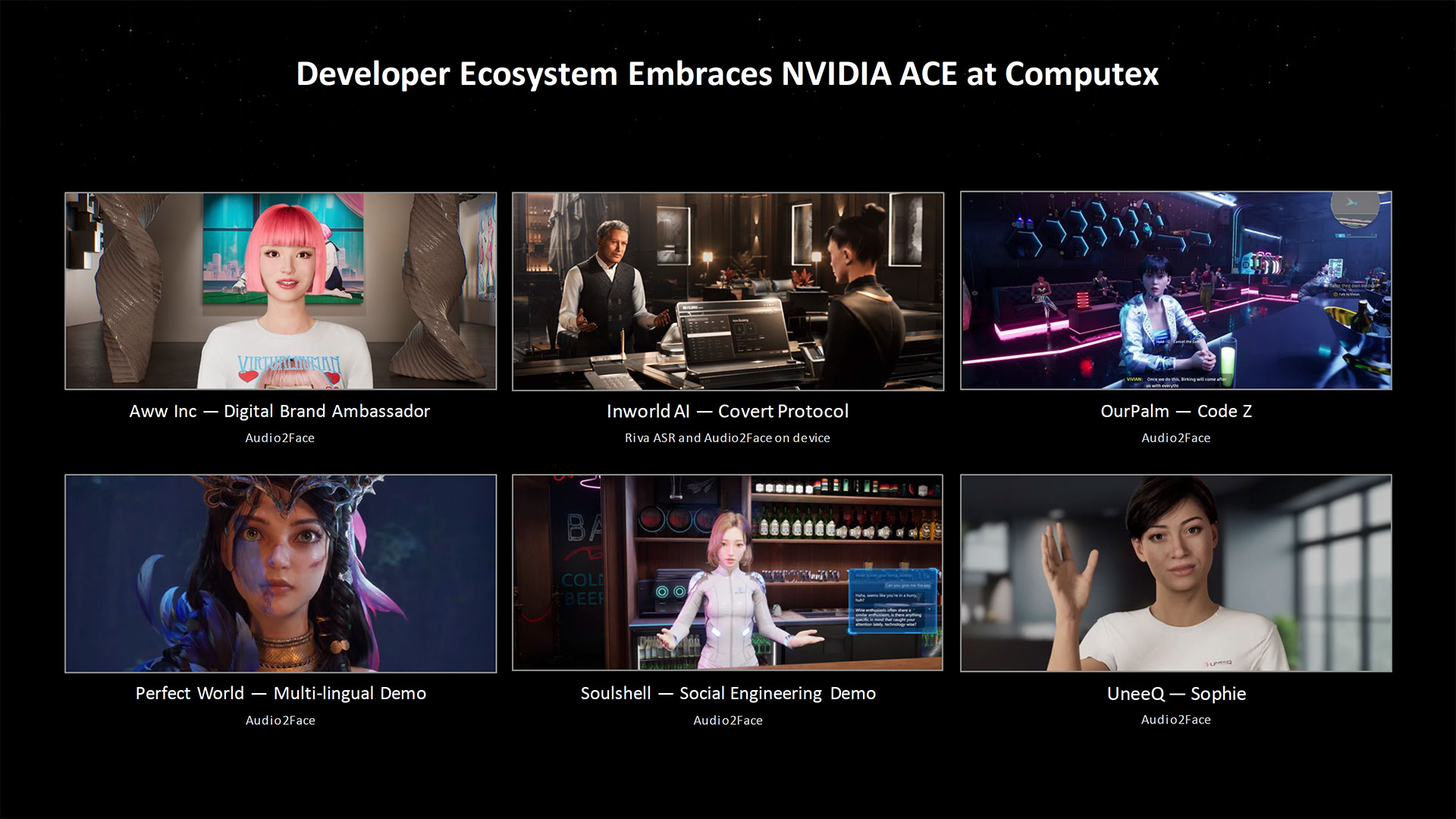

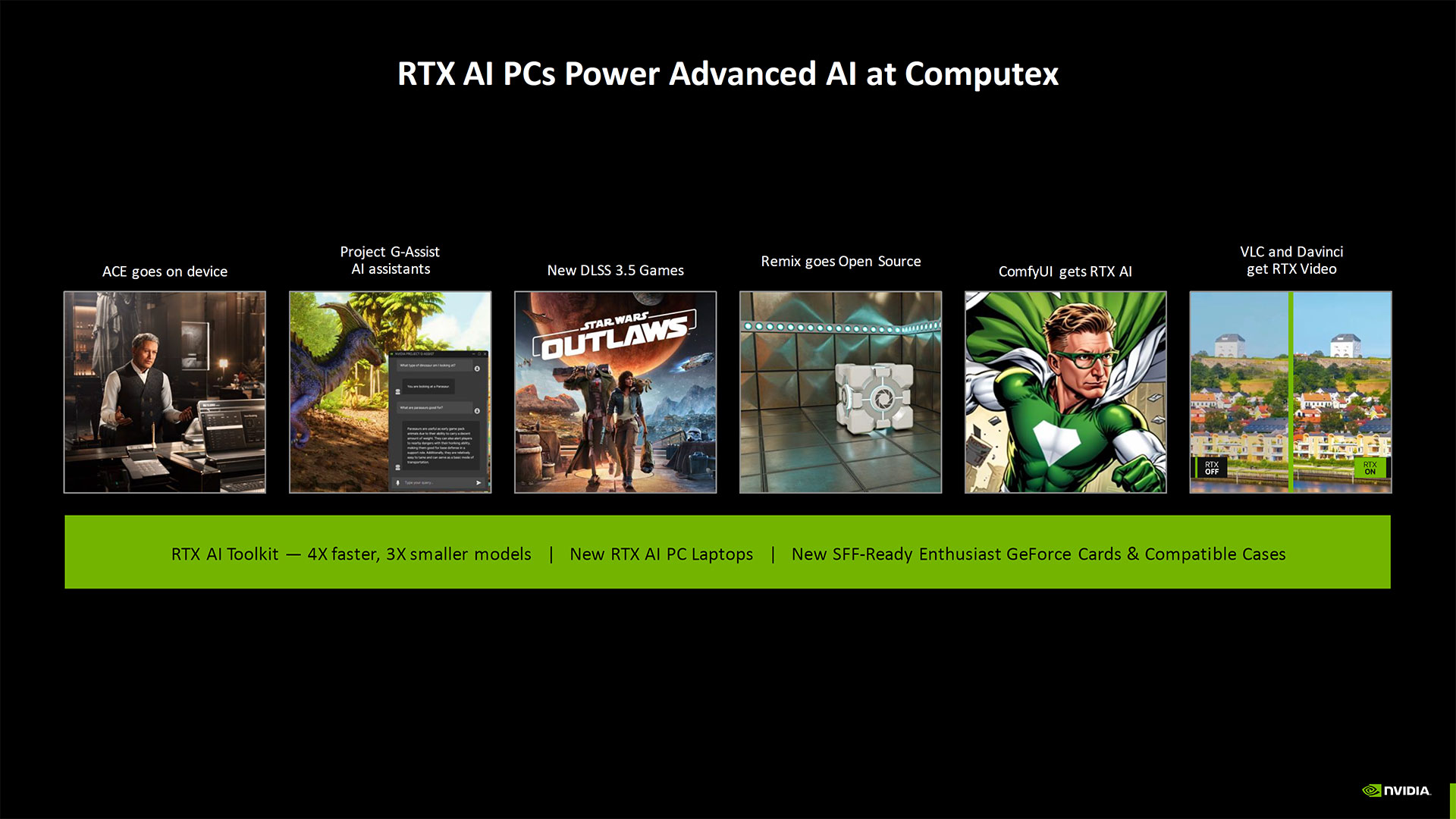

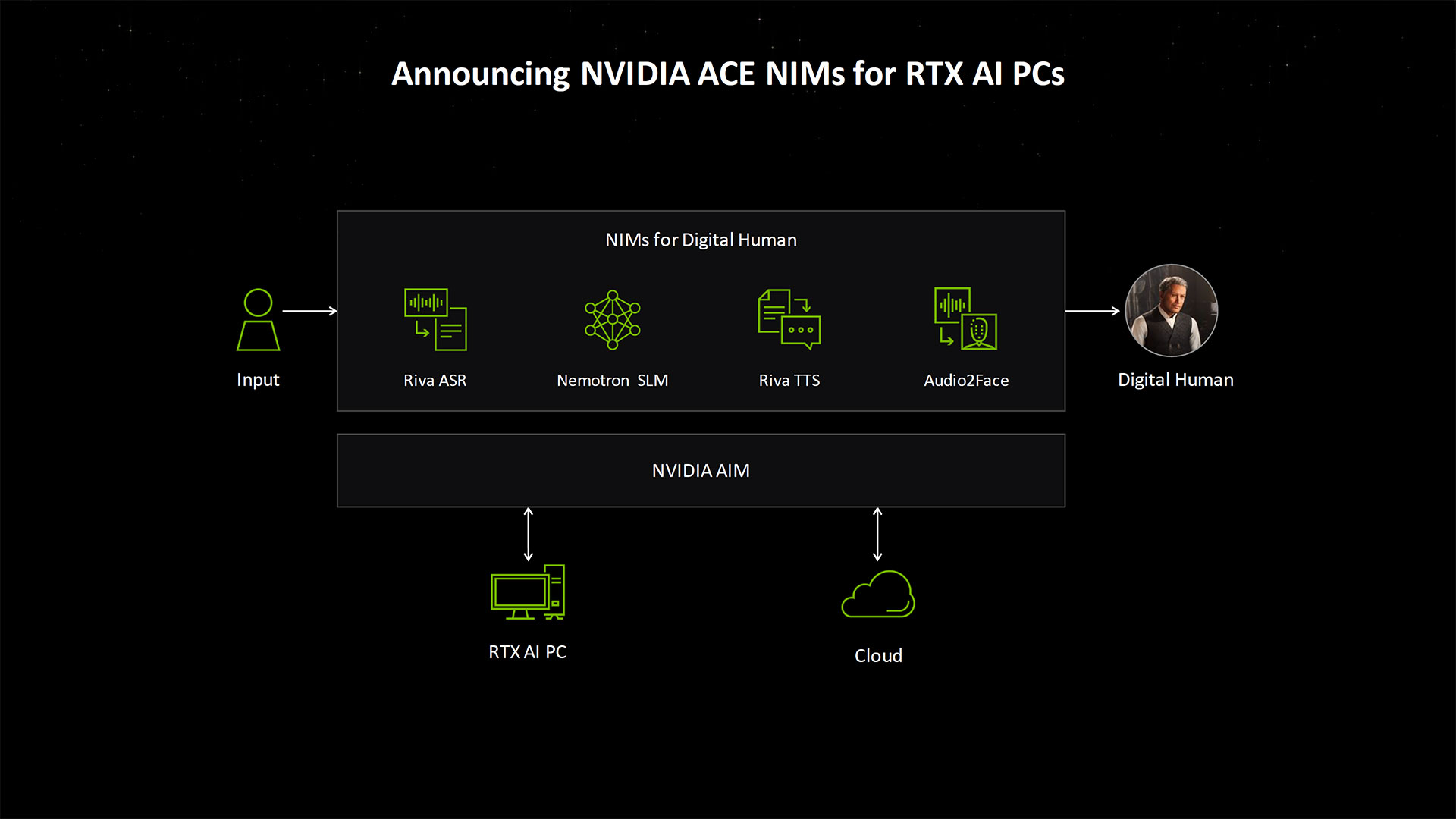

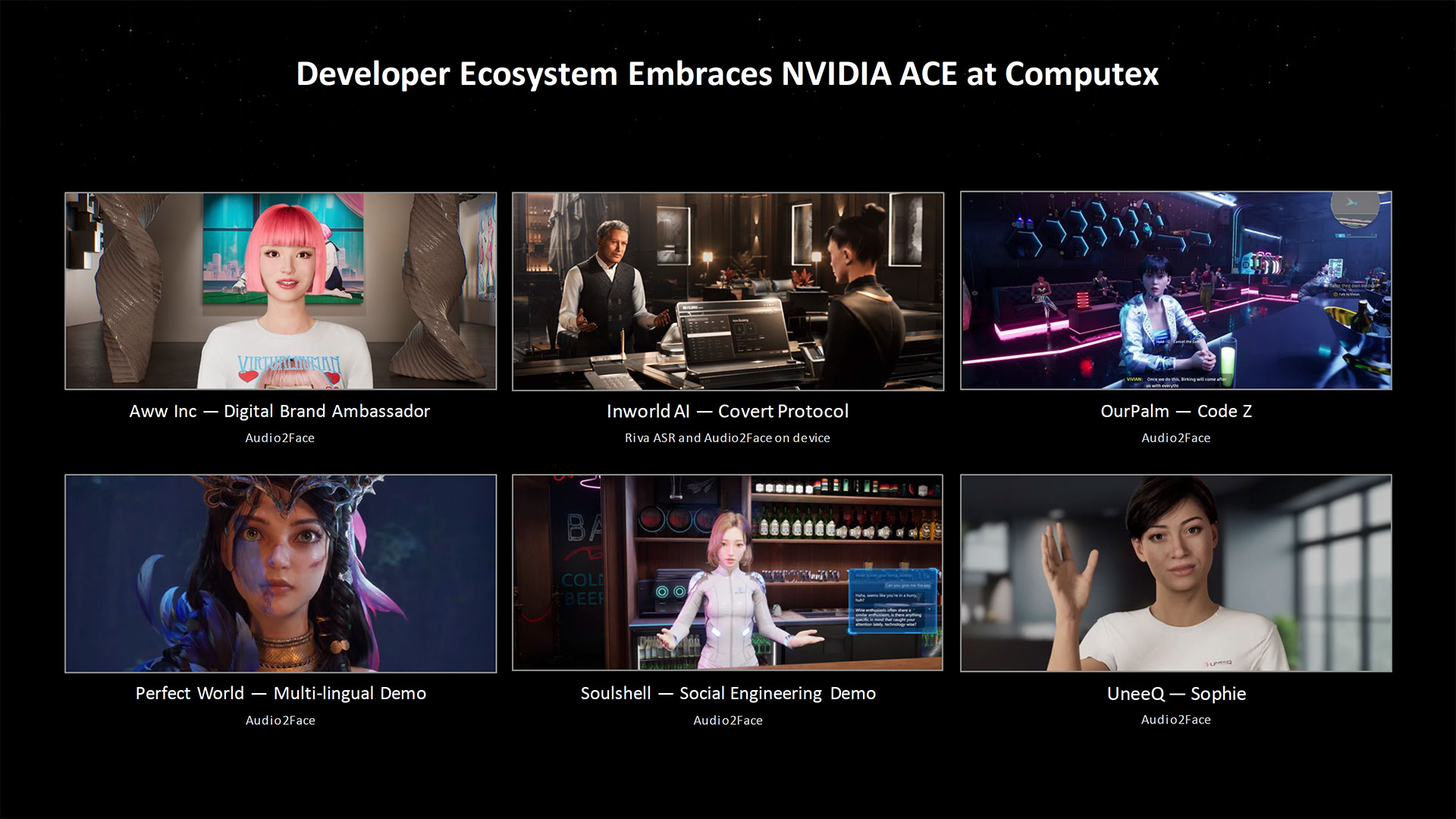

There's a lot of AI news coming from Nvidia, in fact. Some of it is just the latest update on things we've heard about before, like the latest ACE (Avatar Cloud Engine) demos, which have been going through various iterations for more than a year. Nvidia also wanted to let everyone know that Windows Copilot+ capable laptops with RTX 40-series GPUs are coming in the near future, powered, somewhat ironically, by rival chips from AMD and Intel. But hey, there's still a chance that a Windows AI laptop pairing an Arm processor with an Nvidia GPU will ship sometime next year.

So yes, that's the gist of the announcement: Laptops using AMD's upcoming Zen 5 "Strix Point" processors, aka Ryzen AI CPUs, will be available with Nvidia RTX 40-series GPUs. Let's also spoil the surprise: at some point later this year, there will be Copilot+ laptops equipped with Intel's Lunar Lake processors and RTX 40-series GPUs. And after that, once the Blackwell RTX 50-series GPUs begin shipping and eventually come to laptops, there will be Copilot+ PCs with those as-yet-unannounced chips as well.

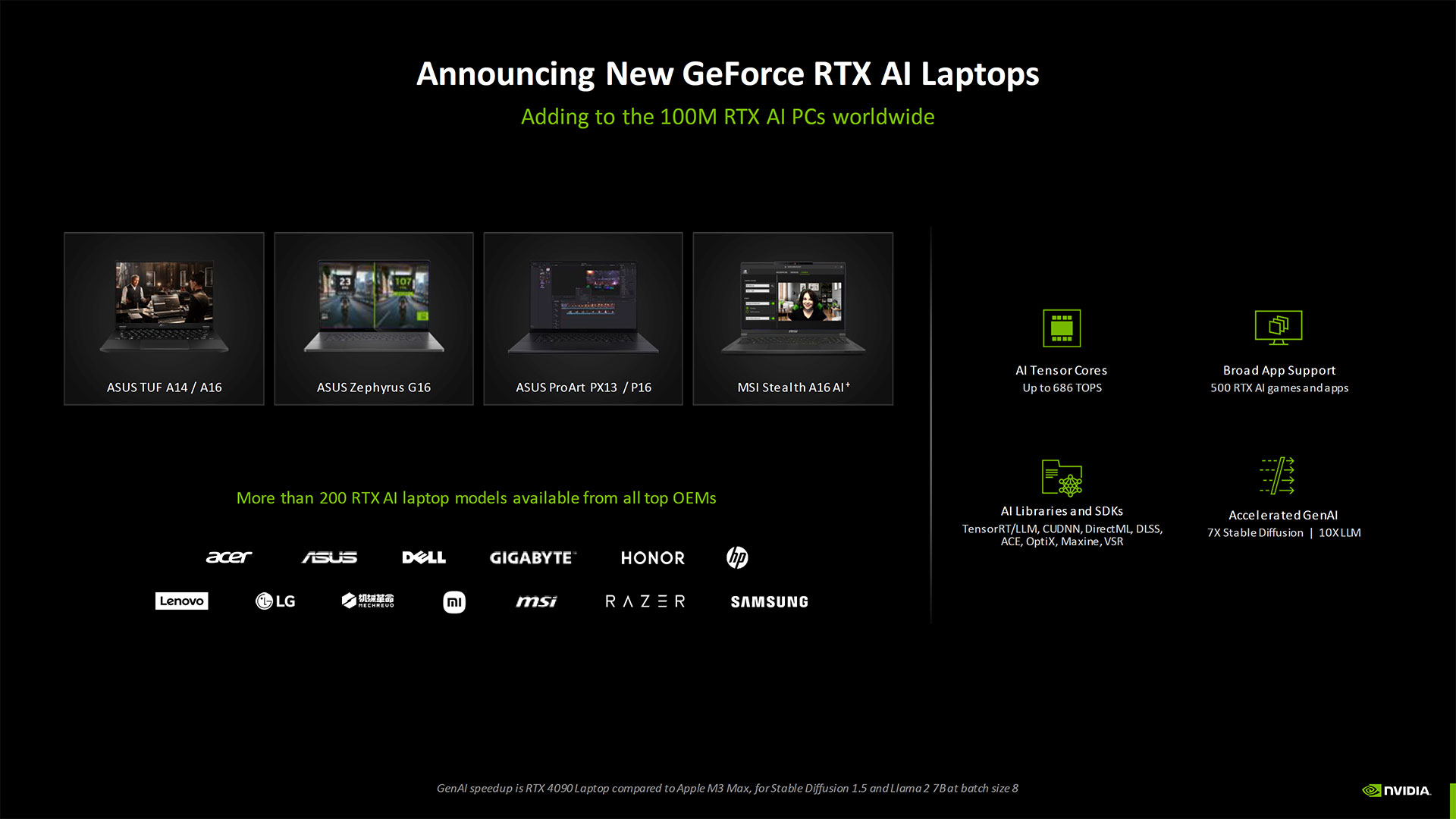

Nvidia provided the above images of five laptops (four from Asus and one from MSI), which will likely be some of the first Copilot+ laptops to have RTX GPUs. Nvidia is also introducing a new term, "RTX AI laptop," which basically means any laptop shipped in the past year or so with a dedicated RTX 40-series GPU, from the RTX 4050 Laptop GPU up through the RTX 4090 Laptop GPU. Every major Windows laptop manufacturer has such a system, and so there are "more than 200 RTX AI laptop models available" already.

What's interesting is that in an earlier briefing, Nvidia's presentation labeled all five of these laptops as "RTX AI | Copilot+". The final version of the slide (shown below) dropped any mention of Copilot+, apparently because Nvidia wants to focus more on its RTX AI laptop branding.

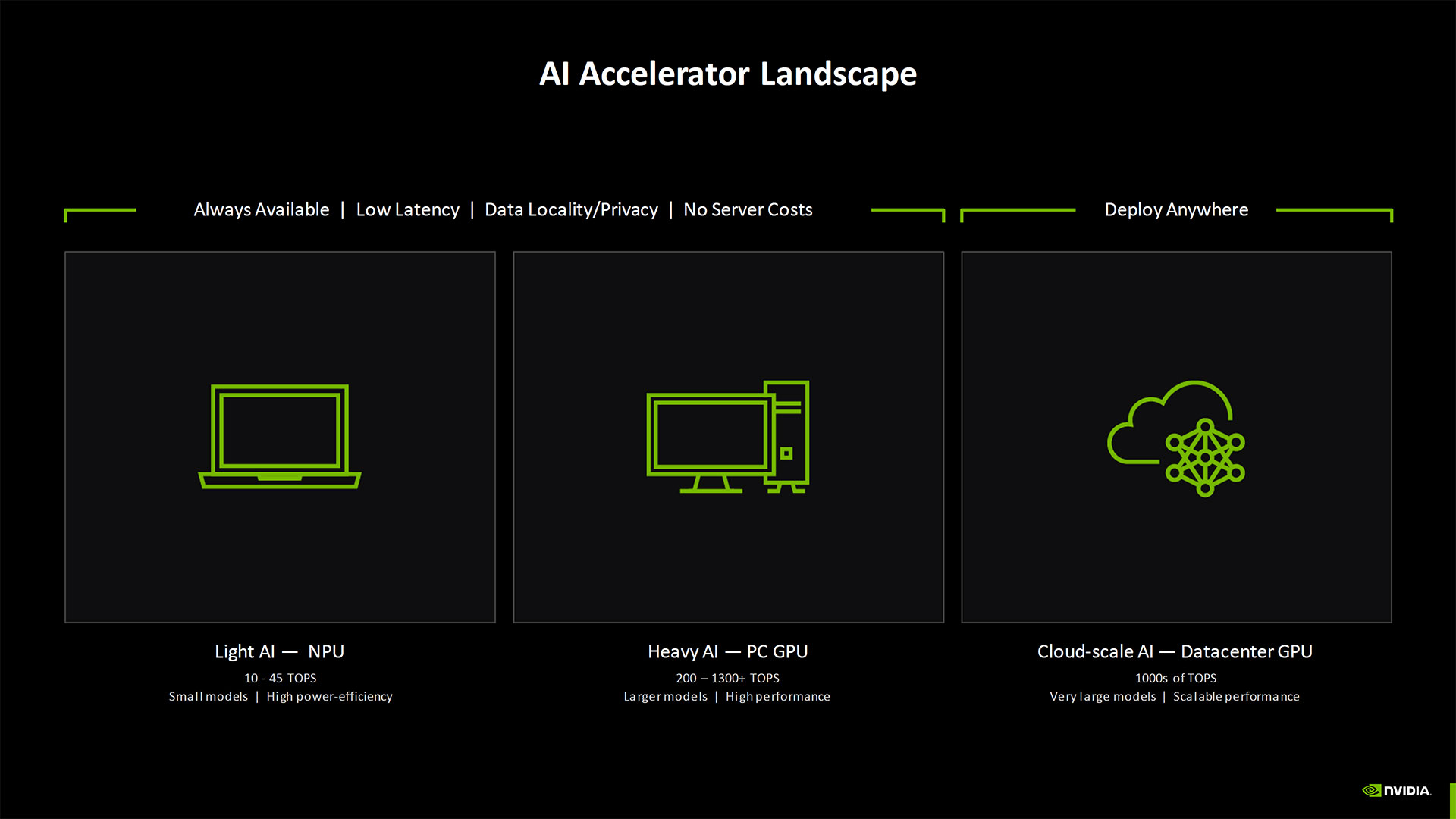

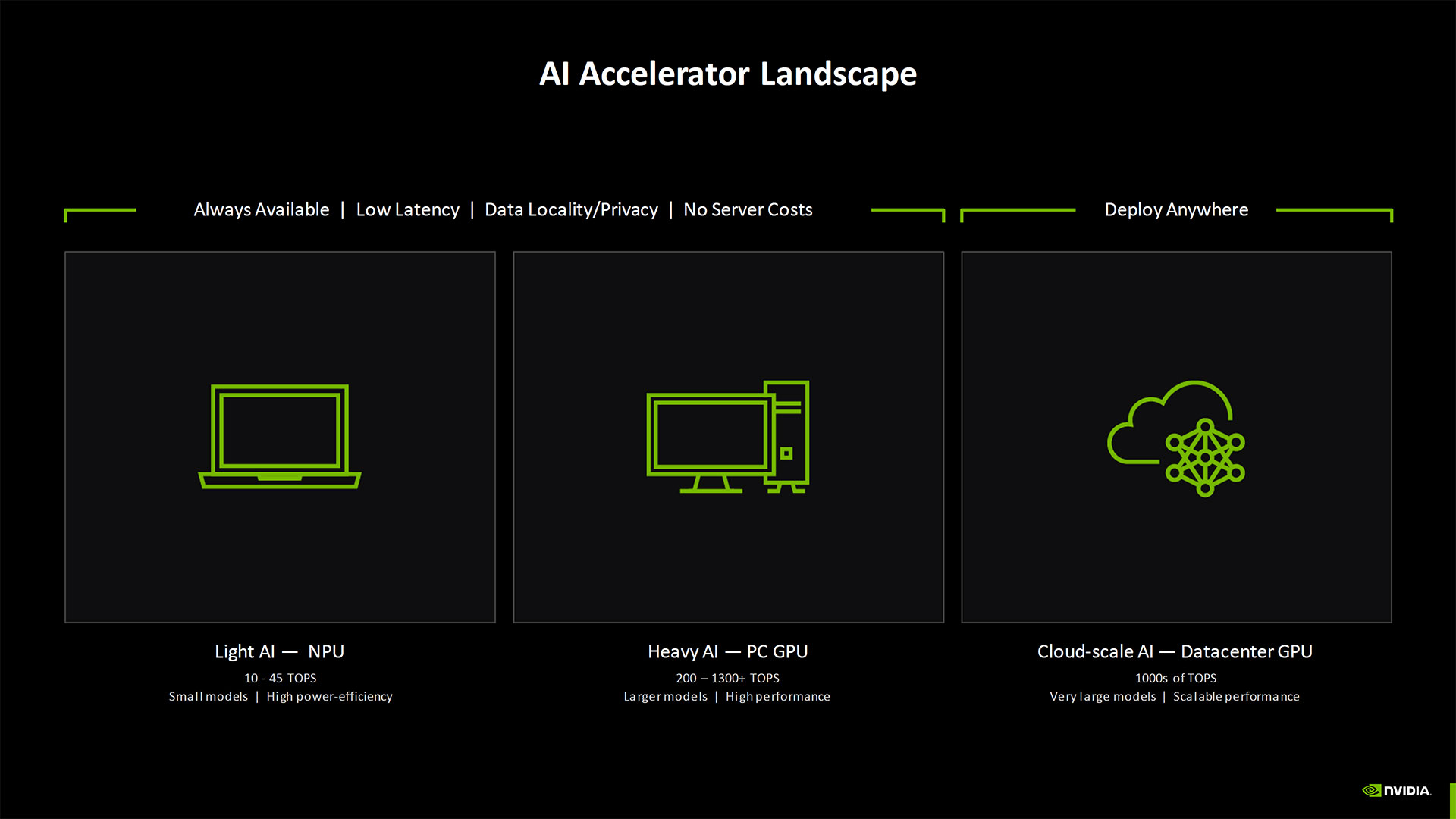

You can understand Nvidia's side of things. Here we have "AI PCs" that provide anywhere from 10 to 45 TOPS (trillions of operations per second) of INT8 compute running on an NPU (Neural Processing Unit). But Nvidia's RTX 40-series GPUs have far exceeded that level of performance for nearly two years now, and the previous-generation RTX 30-series GPUs offered plenty of TOPS as well (though Nvidia is now focused more on the future).

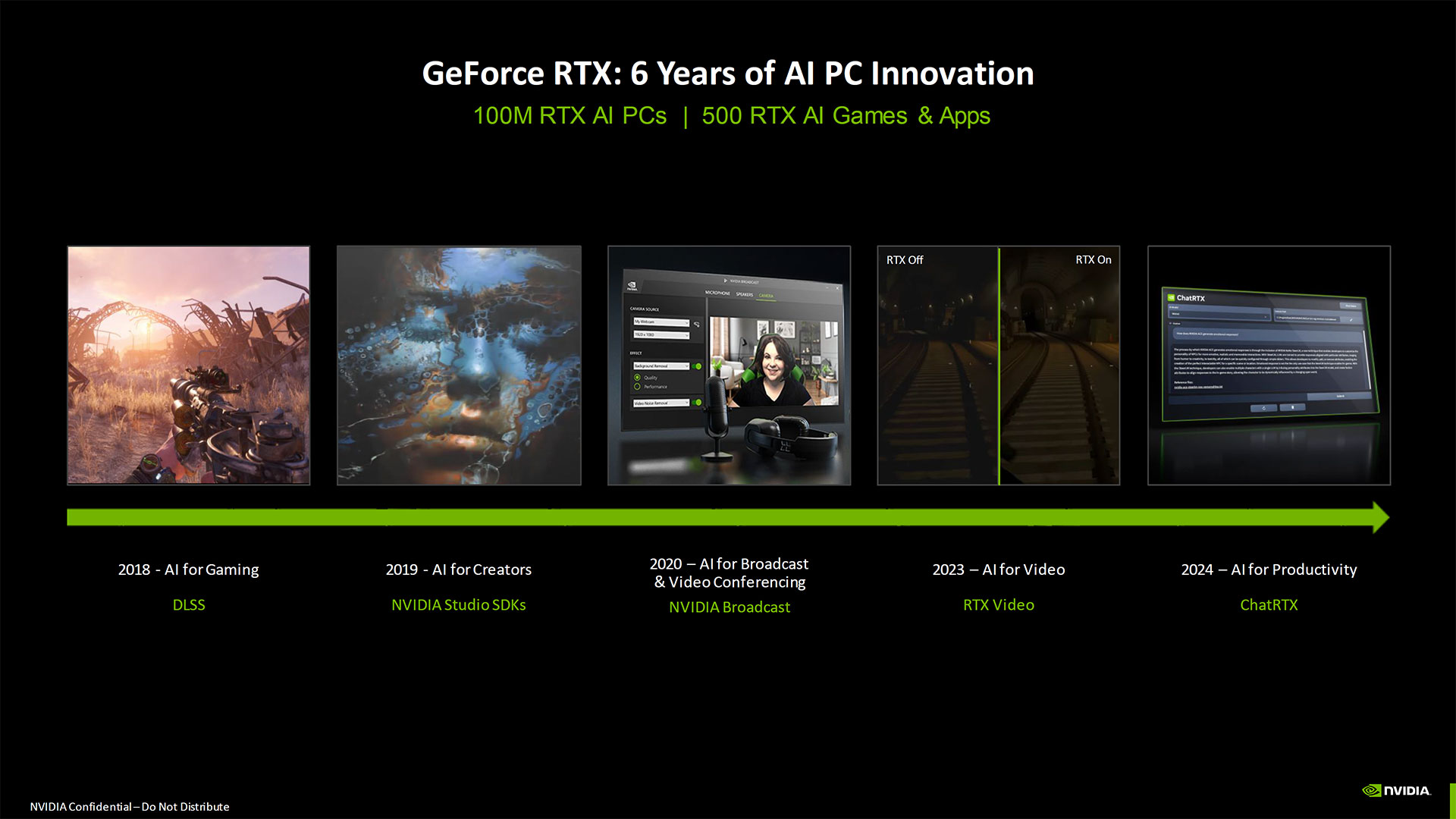

The current 40-series GPUs range from around 200 AI TOPS on the RTX 4050 Laptop GPU up to more than 1300 AI TOPS on the desktop RTX 4090, with everything in between. For reference, the RTX 30-series ranged from around 23 TOPS (mobile RTX 3050 at base clock) to as high as 320 TOPS on the RTX 3090 Ti, and double those numbers for sparse operations. Even the RTX 20-series, launched back in 2018, delivered up to hundreds of TOPS of compute, from 59 TOPS on the RTX 2060 Laptop GPU up to 215 TOPS on the RTX 2080 Ti.
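To put those figures side by side, here is a small Python sketch that compares each quoted GPU number against the 45 TOPS top end of the NPU range and against Microsoft's 40 TOPS minimum for Copilot+ NPUs. The values are the rounded numbers quoted in this article, and the dictionary and function names are purely illustrative. Nvidia's 40-series "AI TOPS" and the older-generation figures may not be measured the same way (dense versus sparse, INT8 versus other precisions), so treat the output as a rough comparison rather than a benchmark.

```python
# Rounded TOPS figures as quoted in this article (illustrative only).
# Caveat: 40-series "AI TOPS" and the older figures may use different
# precisions and dense/sparse assumptions, so this is a rough comparison.
GPU_TOPS = {
    "RTX 2060 Laptop": 59,
    "RTX 2080 Ti": 215,
    "RTX 3050 Laptop (base clock)": 23,
    "RTX 3090 Ti": 320,
    "RTX 4050 Laptop": 200,
    "RTX 4090 (desktop)": 1300,
}

COPILOT_PLUS_MIN_TOPS = 40  # Microsoft's minimum NPU rating for Copilot+ PCs
FASTEST_NPU_TOPS = 45       # top of the 10-45 TOPS NPU range cited above


def compare(gpu_tops: dict[str, int]) -> dict[str, tuple[float, bool]]:
    """Map each GPU to (multiple of the fastest NPU, clears the Copilot+ bar?)."""
    return {
        name: (round(tops / FASTEST_NPU_TOPS, 1), tops >= COPILOT_PLUS_MIN_TOPS)
        for name, tops in gpu_tops.items()
    }


if __name__ == "__main__":
    for name, (multiple, clears) in compare(GPU_TOPS).items():
        print(f"{name:30} {multiple:5.1f}x the fastest NPU, clears 40 TOPS: {clears}")
```

Only the entry-level RTX 3050 Laptop GPU at base clock falls short of the Copilot+ bar; everything else on the list clears it, with the desktop RTX 4090 at nearly 29 times the fastest NPU figure.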

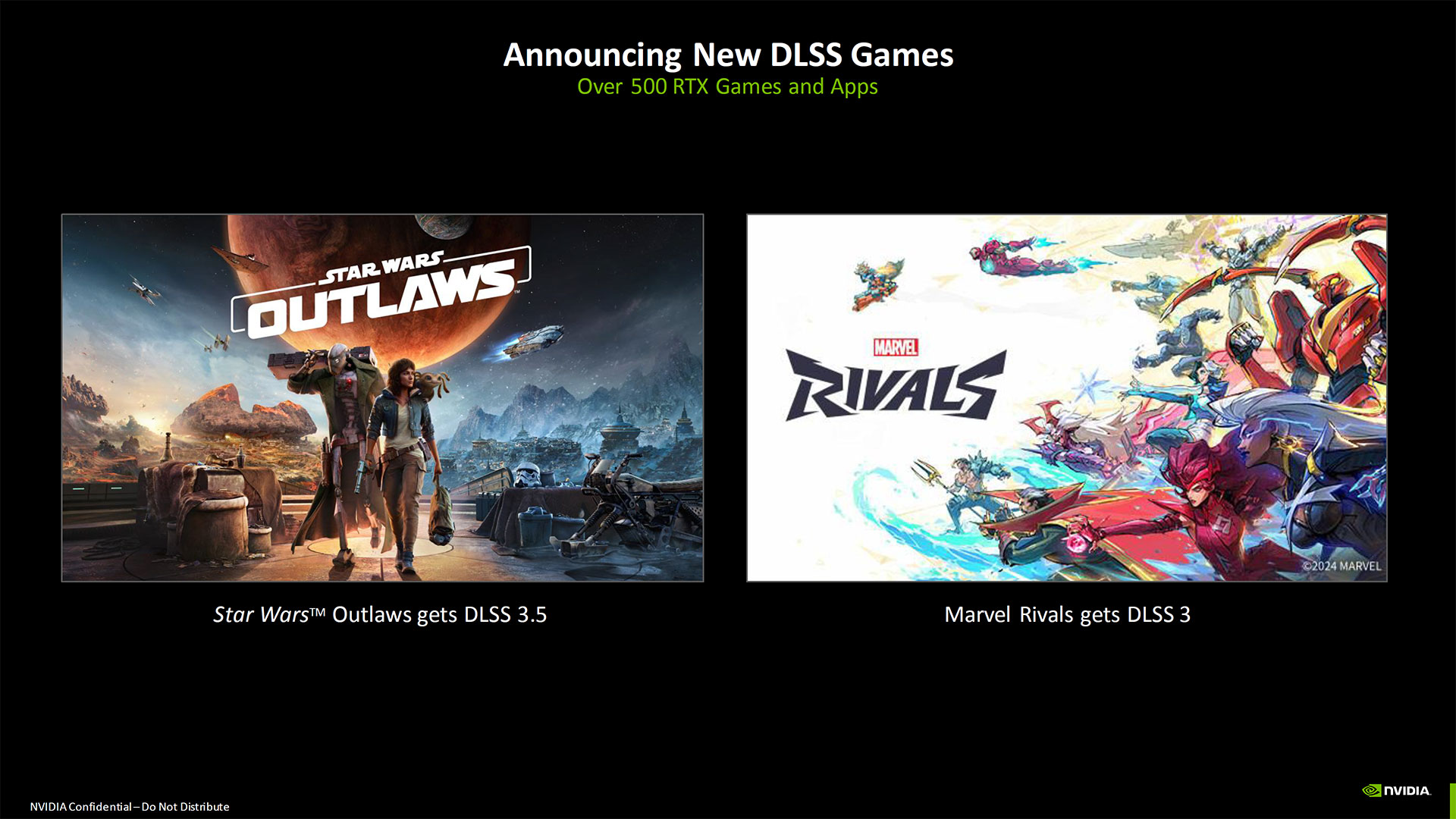

Which means that, if you were to use Nvidia RTX GPUs for AI, we've had higher than Copilot+ levels of compute performance on tap for nearly six years. We just weren't really doing much with it, other than running DLSS in games and Nvidia Broadcast for video and audio cleanup. And Nvidia has plenty of other AI-related tech still in the works.

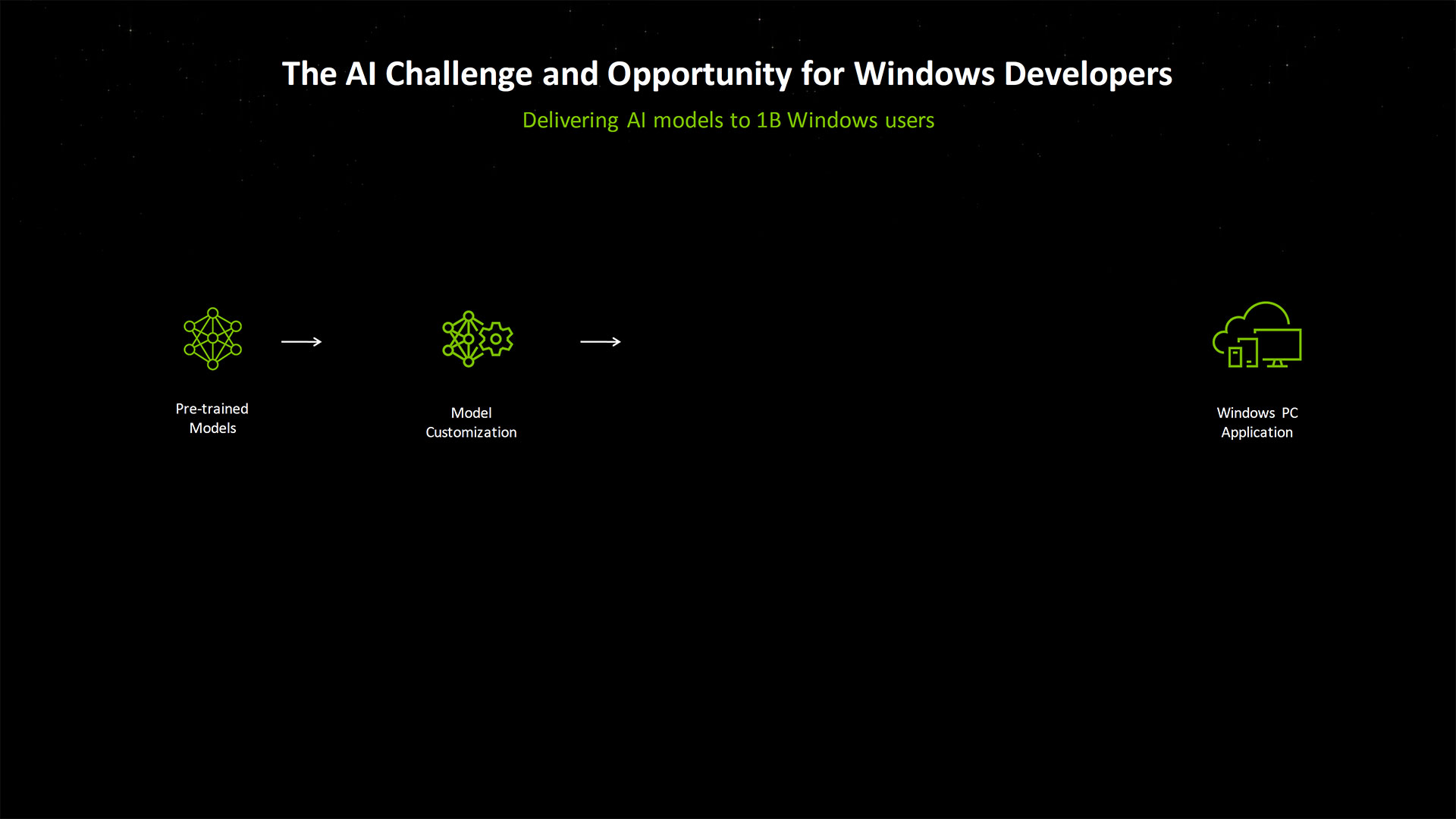

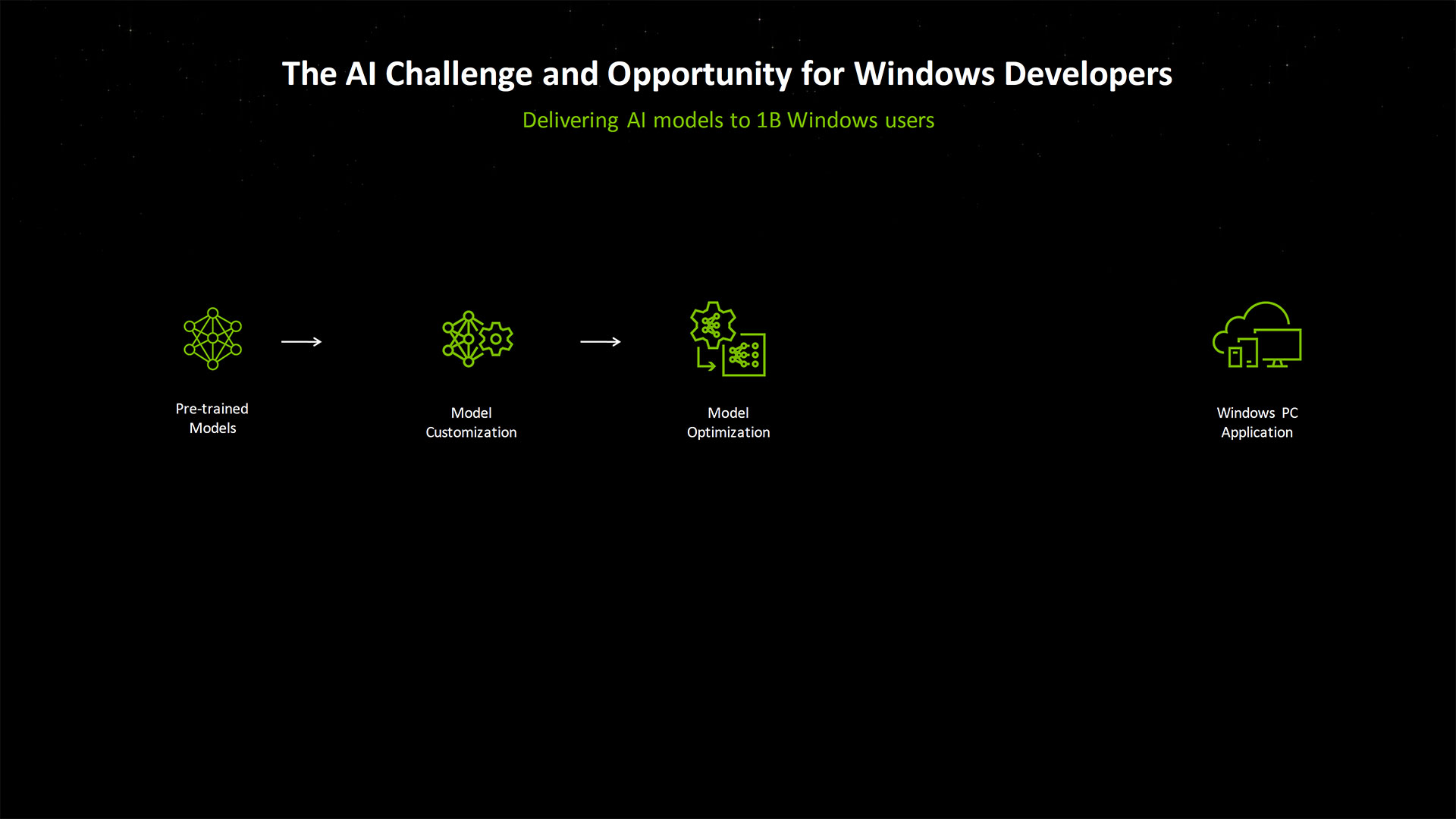

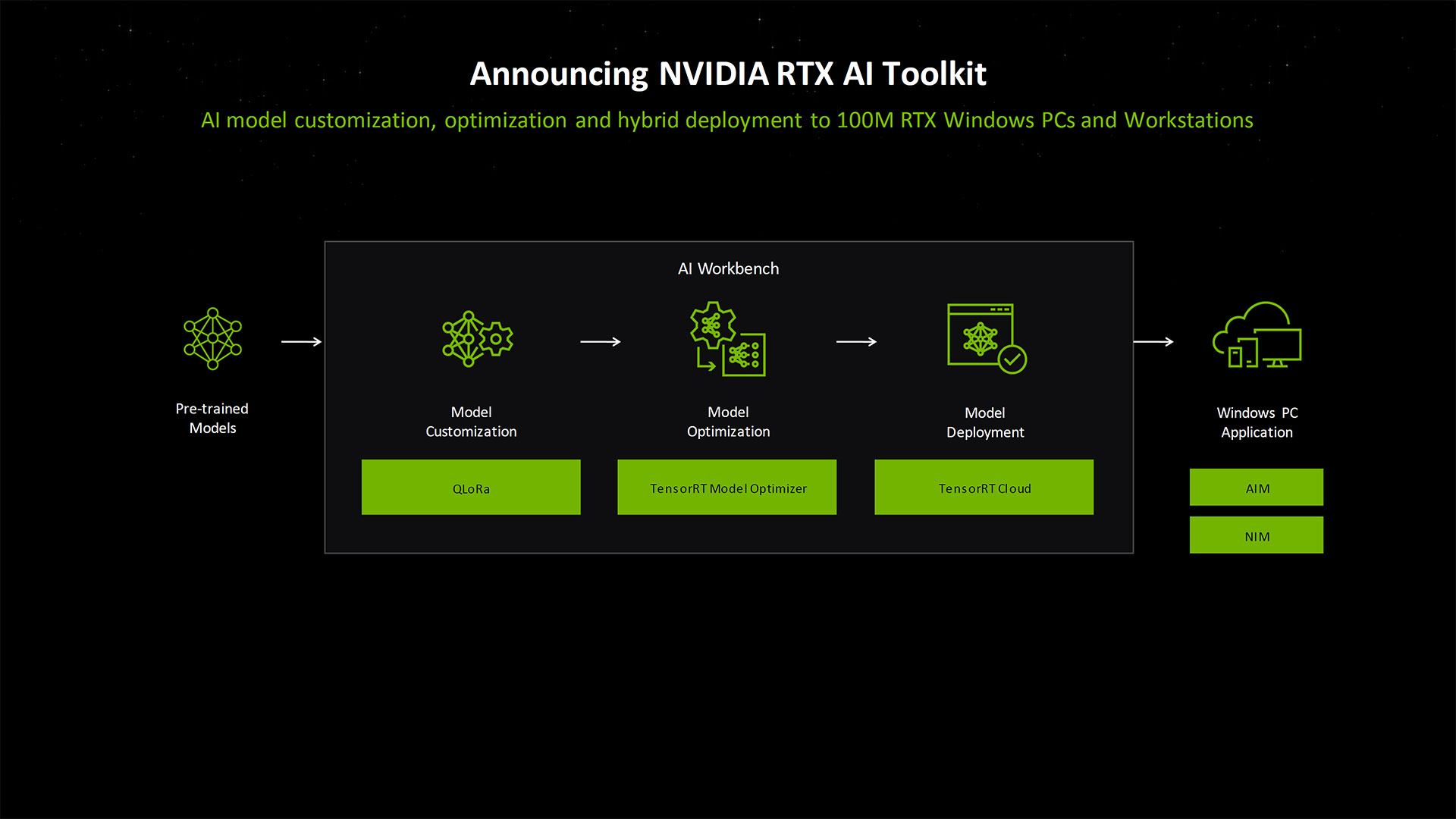

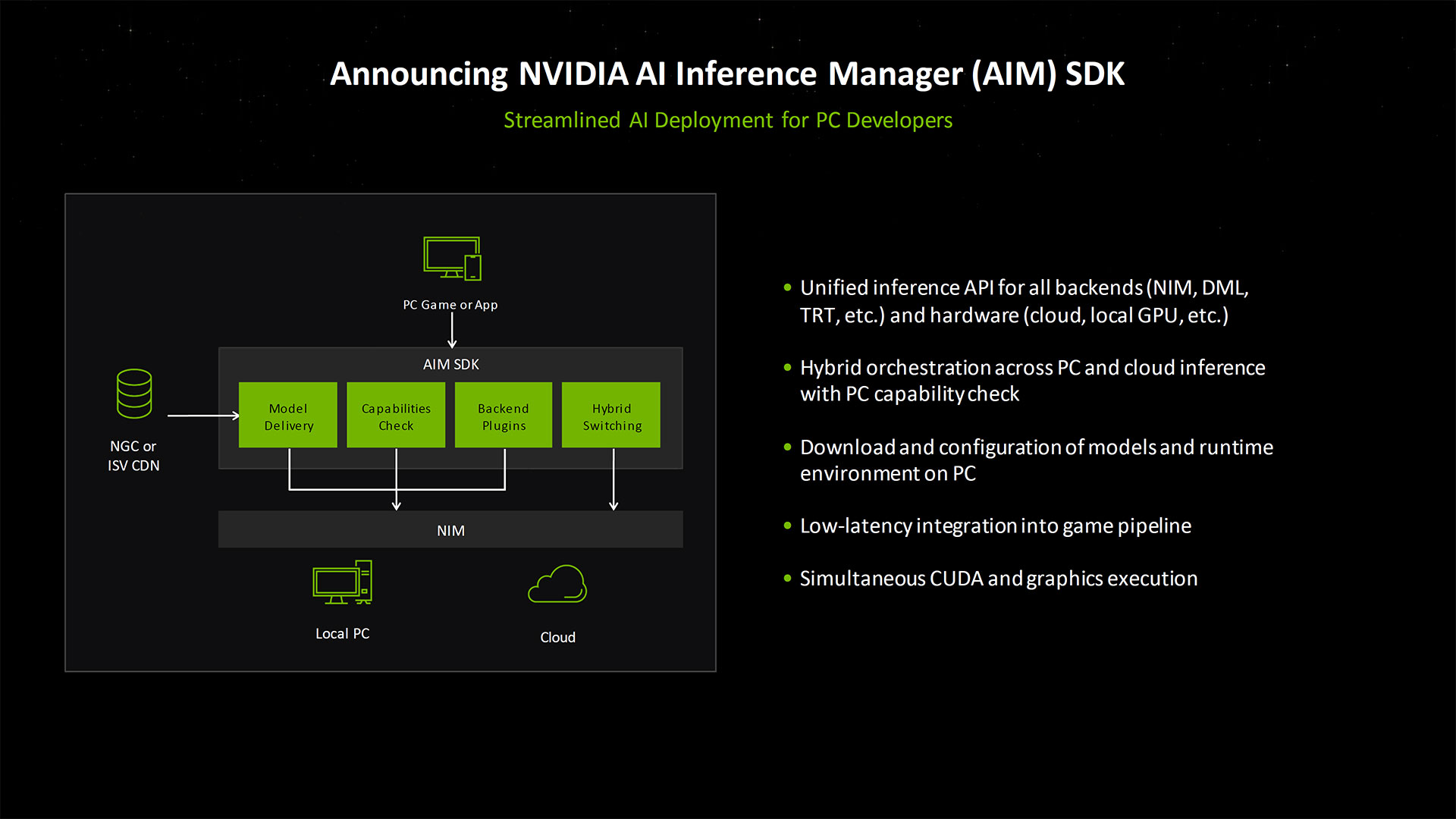

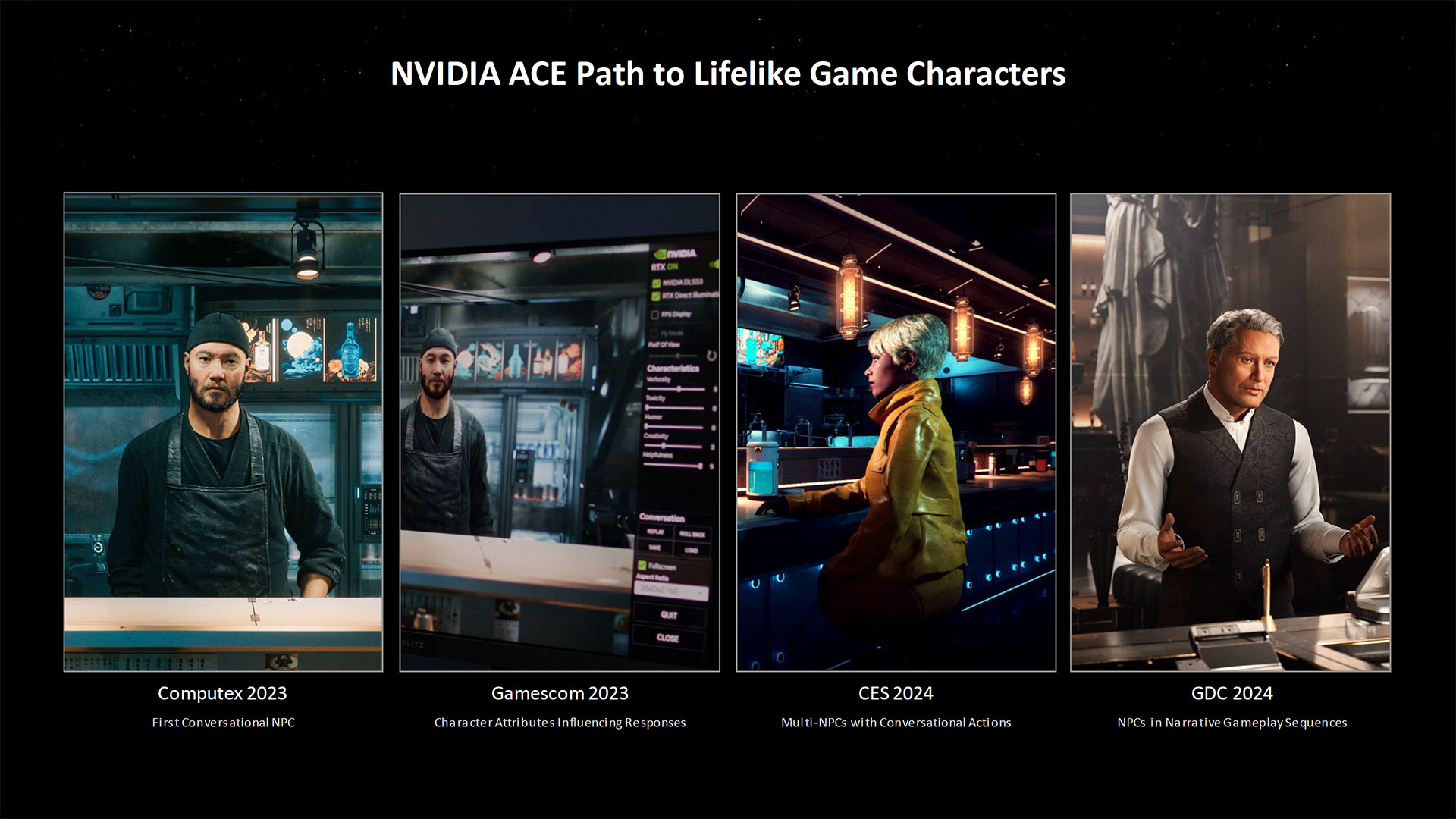

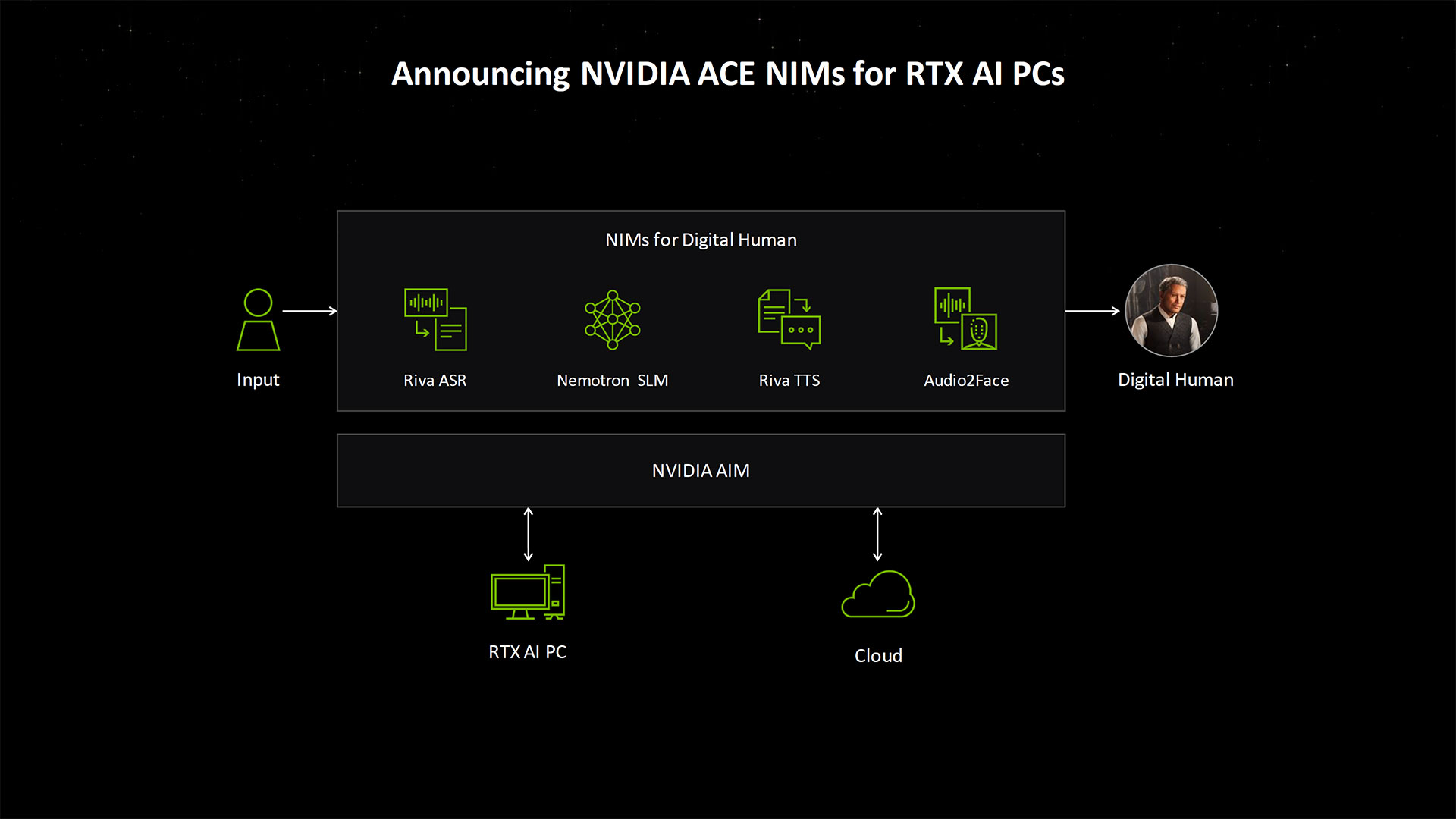

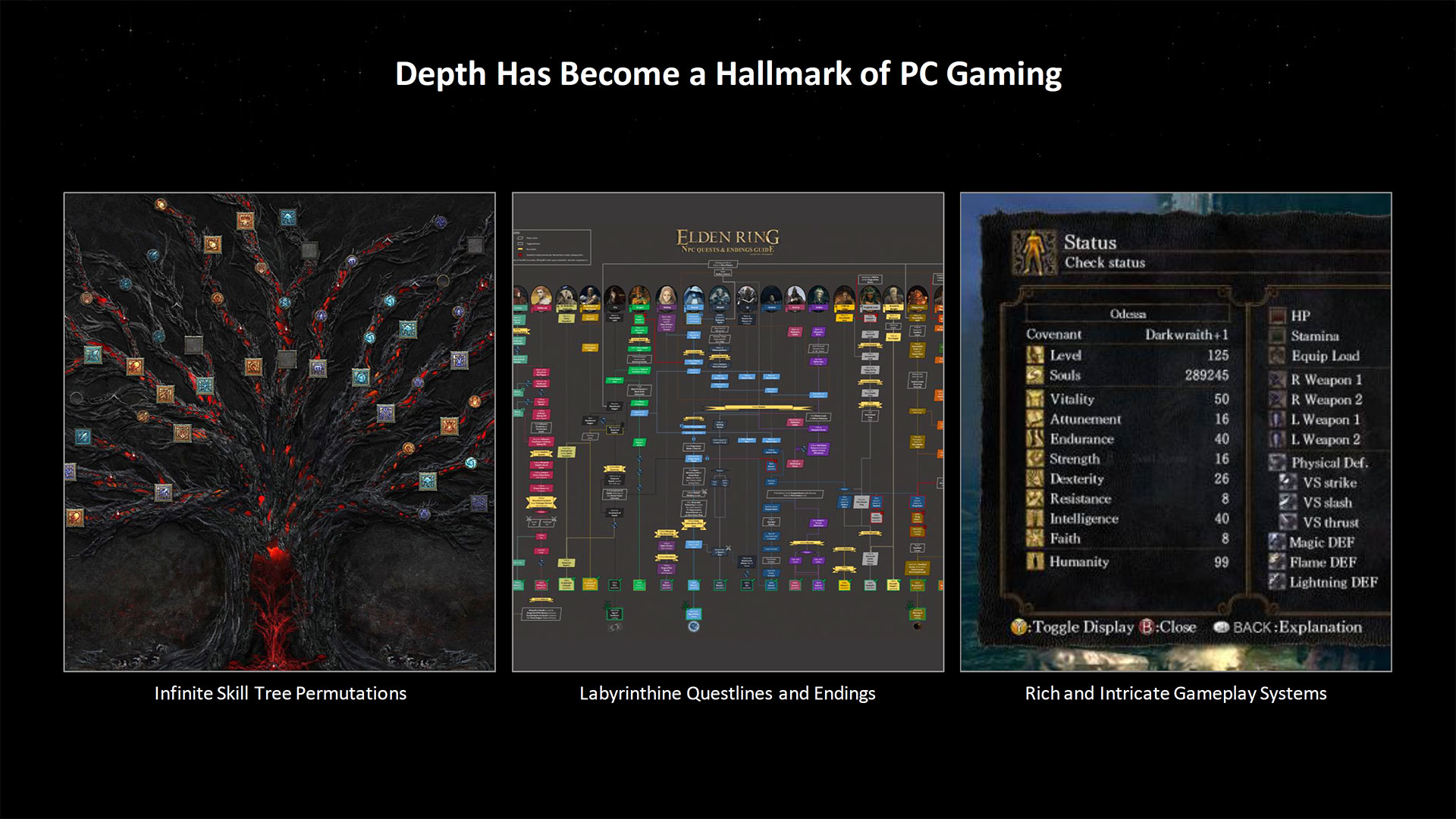

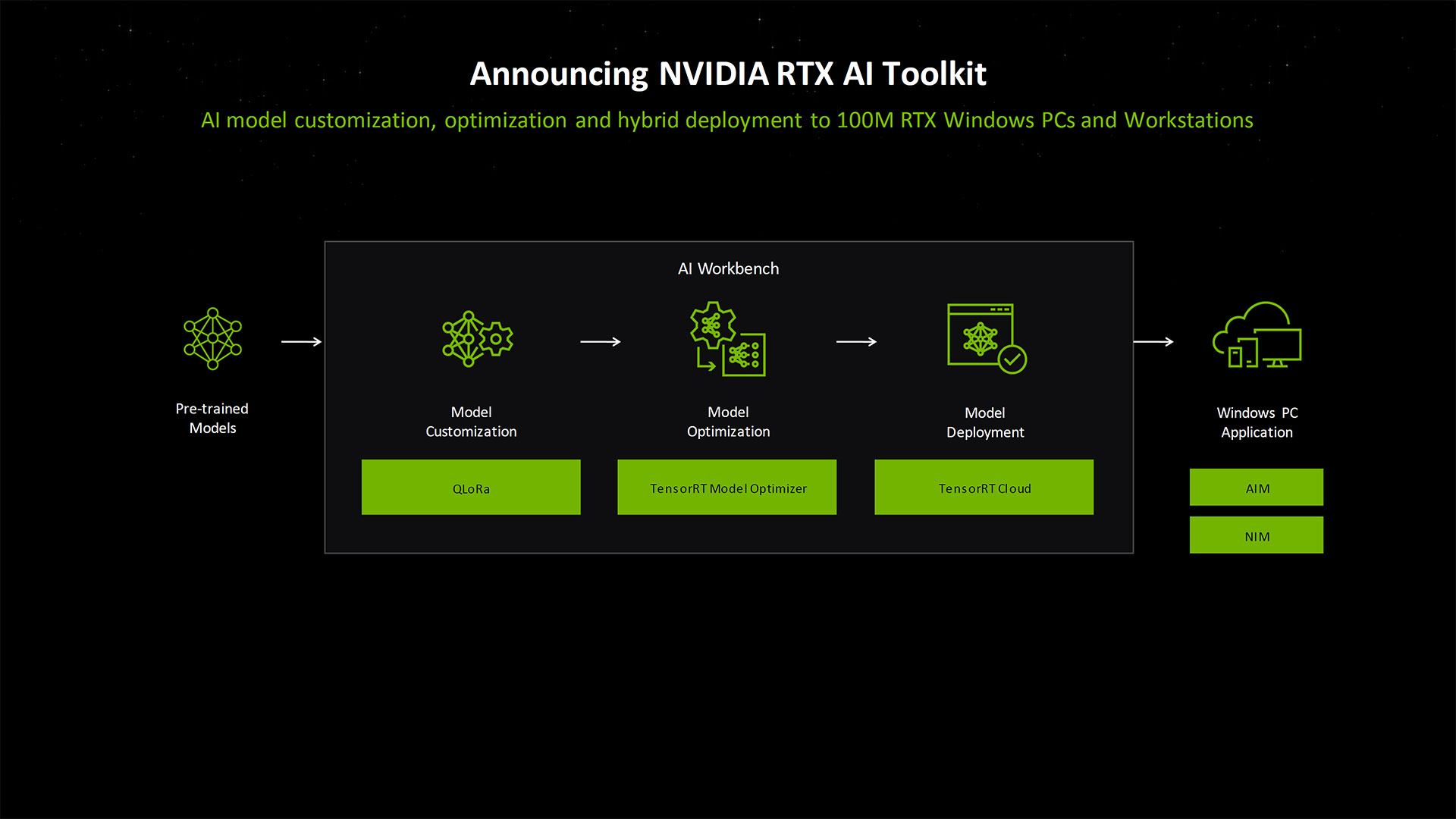

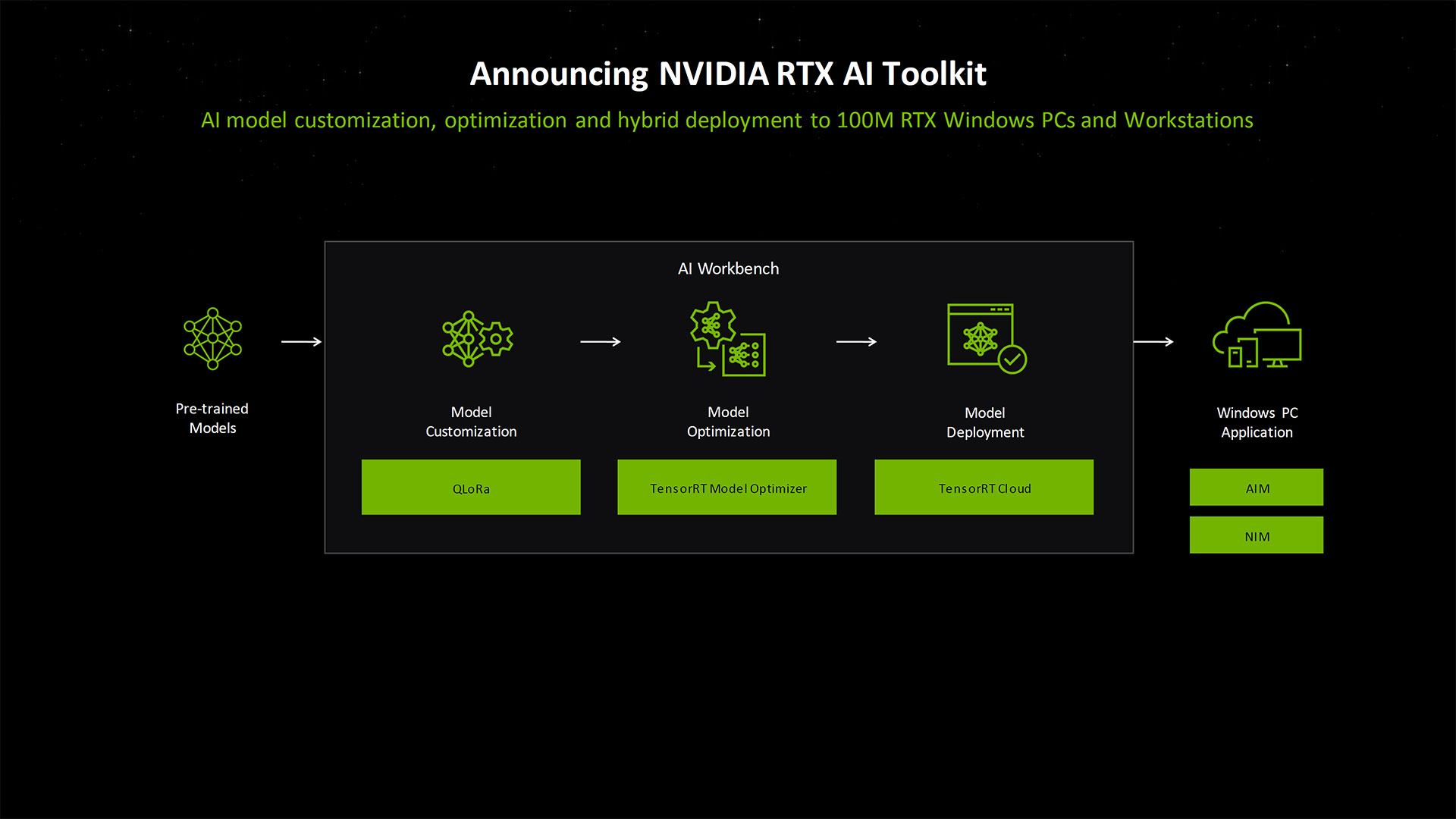

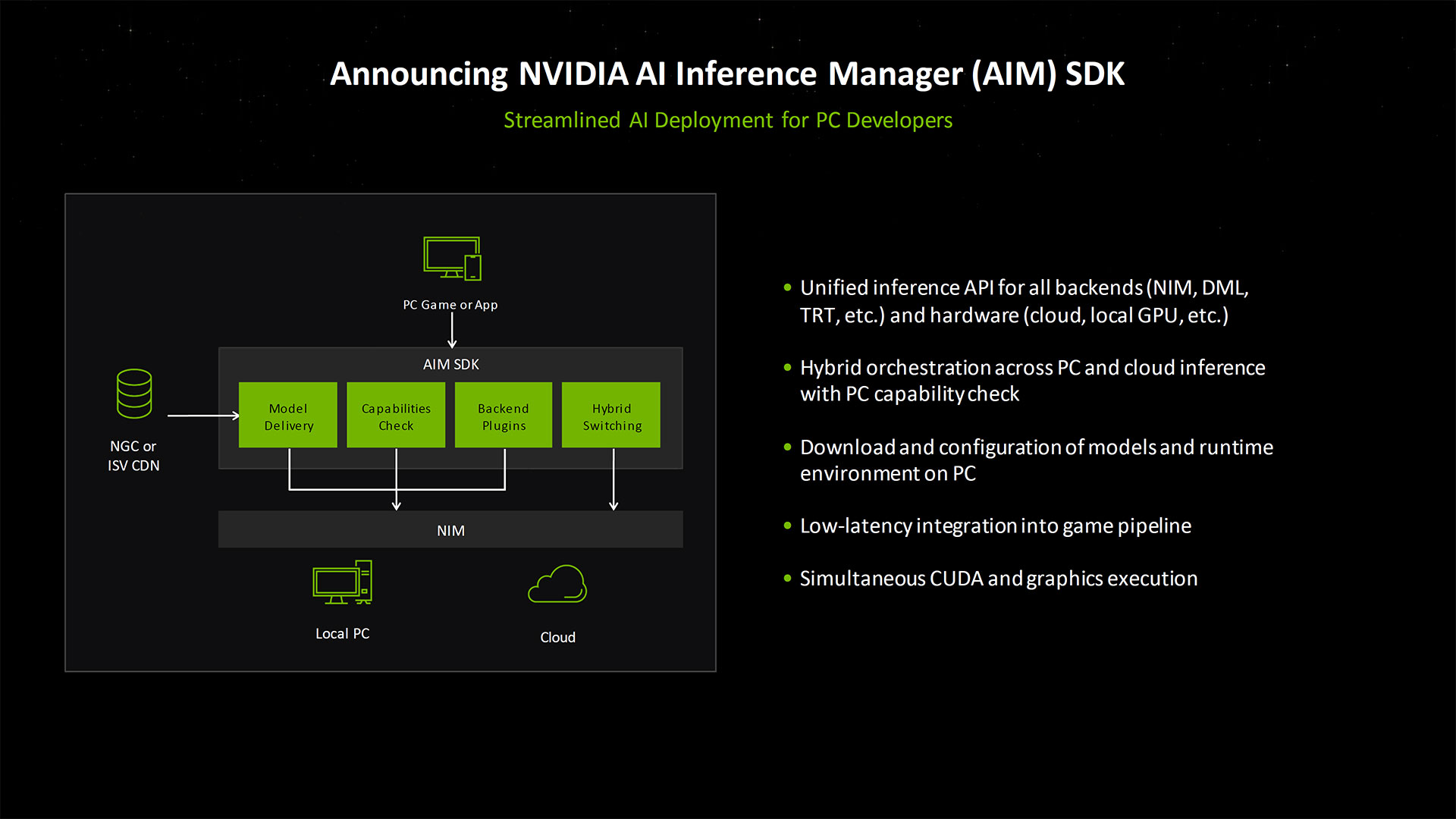

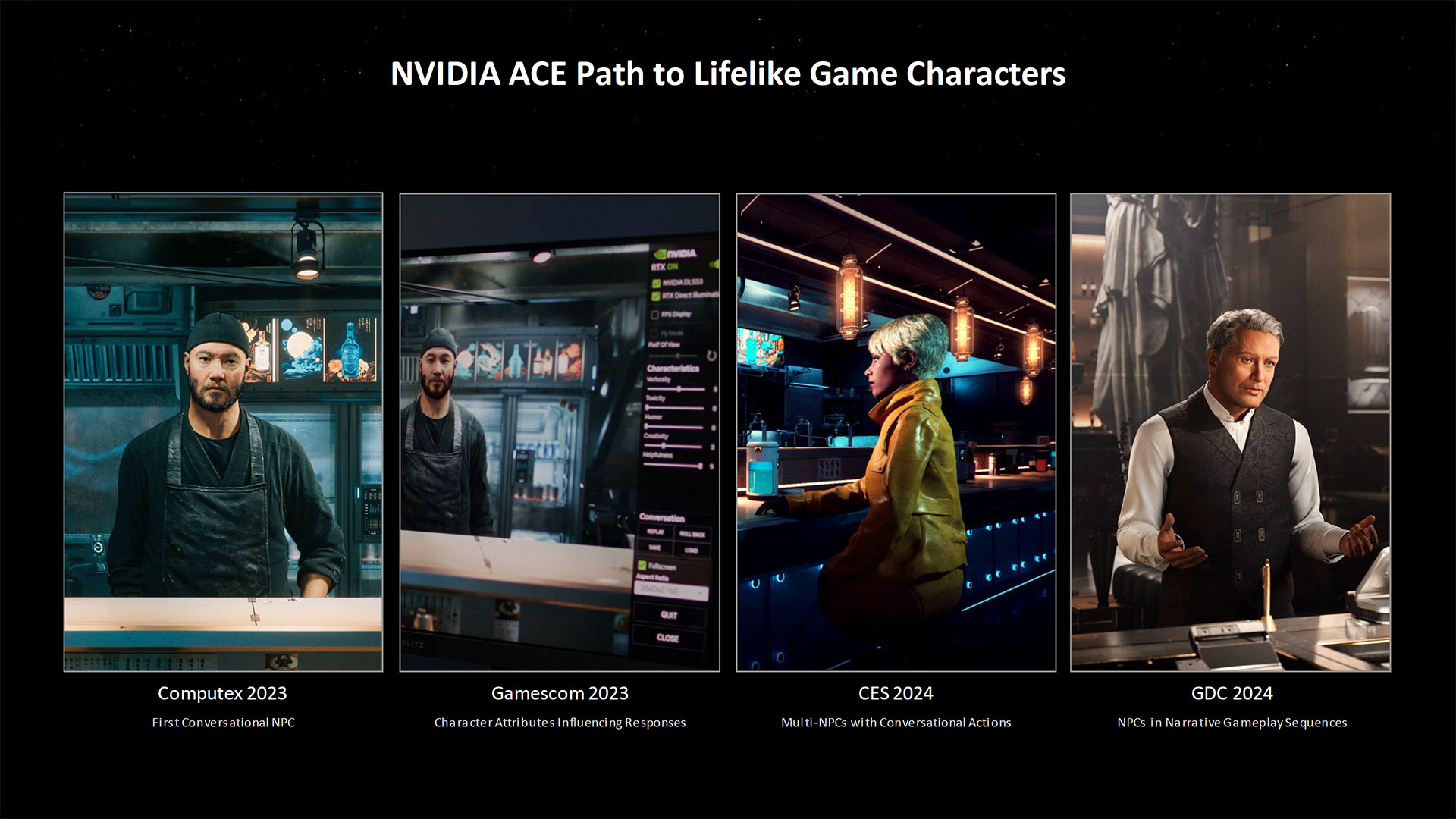

Also announced today is the new Nvidia RTX AI Toolkit, which helps developers with AI model tuning and customization all the way through to model deployment. The toolkit can potentially be used to power NPCs in games and other applications. We've seen examples of this with the ACE demos mentioned above, where the first conversational NPC demo was shown at last year's Computex. It has been refined several times since then, now with multiple NPCs, and Nvidia sees ways to go even further.

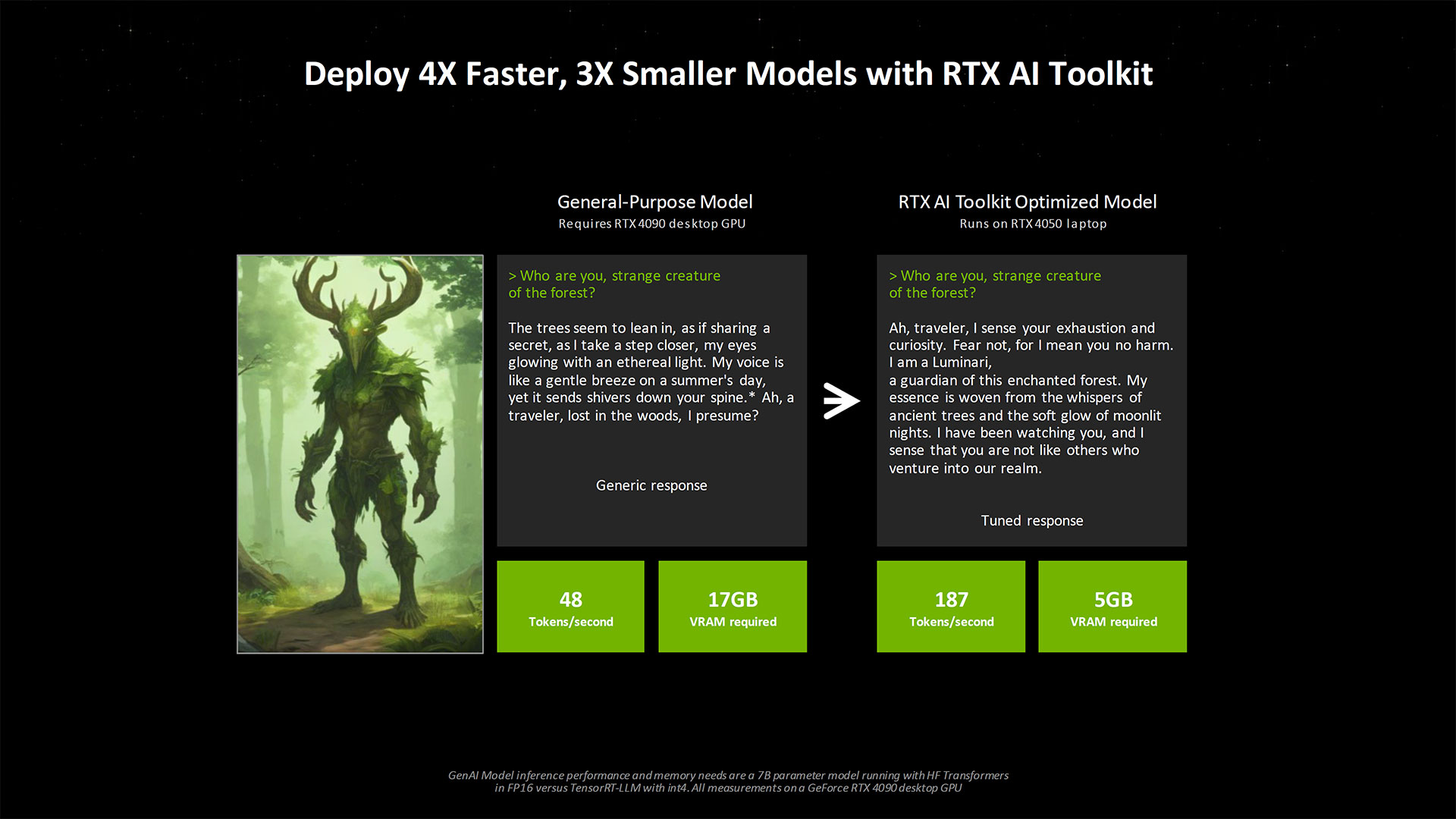

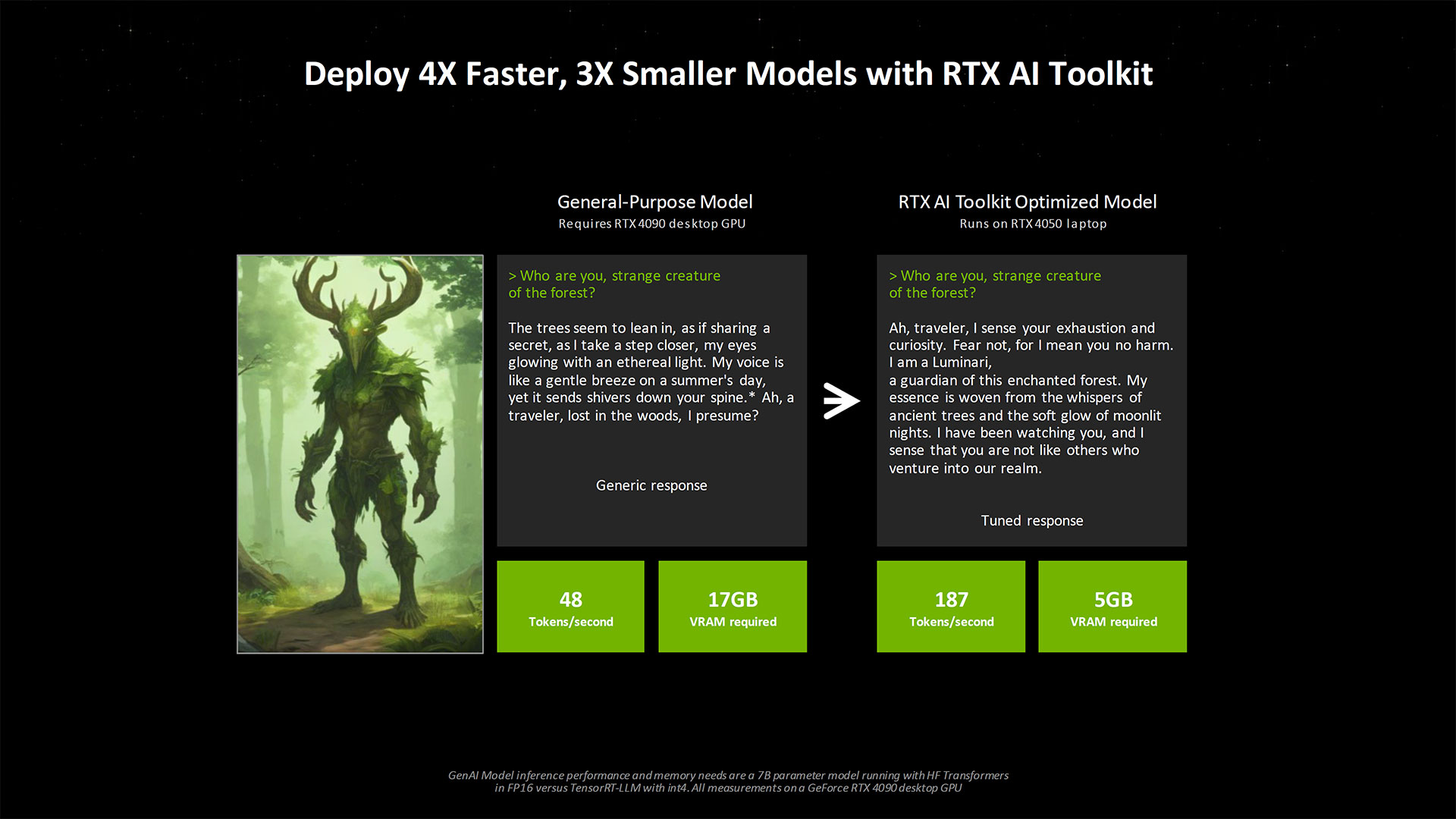

One example Nvidia gave started with a general-purpose 7-billion-parameter LLM. Used to power an NPC, it provided the usual rather generic responses. It also needed 17GB of VRAM, which meant running it on an RTX 4090, and it only generated 48 tokens per second. Using the RTX AI Toolkit to create an optimized model, Nvidia says developers can get tuned responses that are far more relevant to a game world, and the tuned model needed only 5GB of VRAM while spitting out 187 tokens per second: nearly four times the speed with less than a third of the memory. More importantly perhaps, the tuned model could run on an RTX 4050 Laptop GPU, which only has 6GB of VRAM.
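The arithmetic behind Nvidia's example is easy to check. This hypothetical Python sketch plugs in the figures Nvidia quoted (17GB and 48 tokens per second for the general-purpose model, 5GB and 187 tokens per second for the tuned one). The `ModelRun` class and `compare_runs` helper are illustrative names, not part of the RTX AI Toolkit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRun:
    """One data point from Nvidia's NPC LLM example (figures as quoted)."""
    vram_gb: float
    tokens_per_s: float


def compare_runs(baseline: ModelRun, tuned: ModelRun) -> tuple[float, float]:
    """Return (throughput speedup, fraction of baseline VRAM) for the tuned model."""
    speedup = tuned.tokens_per_s / baseline.tokens_per_s
    vram_fraction = tuned.vram_gb / baseline.vram_gb
    return round(speedup, 2), round(vram_fraction, 2)


baseline = ModelRun(vram_gb=17, tokens_per_s=48)  # general-purpose 7B model on an RTX 4090
tuned = ModelRun(vram_gb=5, tokens_per_s=187)     # RTX AI Toolkit-optimized model

speedup, vram_fraction = compare_runs(baseline, tuned)
print(f"{speedup}x the tokens per second using {vram_fraction:.0%} of the VRAM")
# Check that the tuned model fits within an RTX 4050 Laptop GPU's 6GB of VRAM.
print("Fits in 6GB:", tuned.vram_gb <= 6)
```

It works out to roughly 3.9 times the throughput using about 29% of the VRAM, in other words just under a third of the original memory footprint, which is what lets the tuned model fit on a 6GB laptop GPU.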

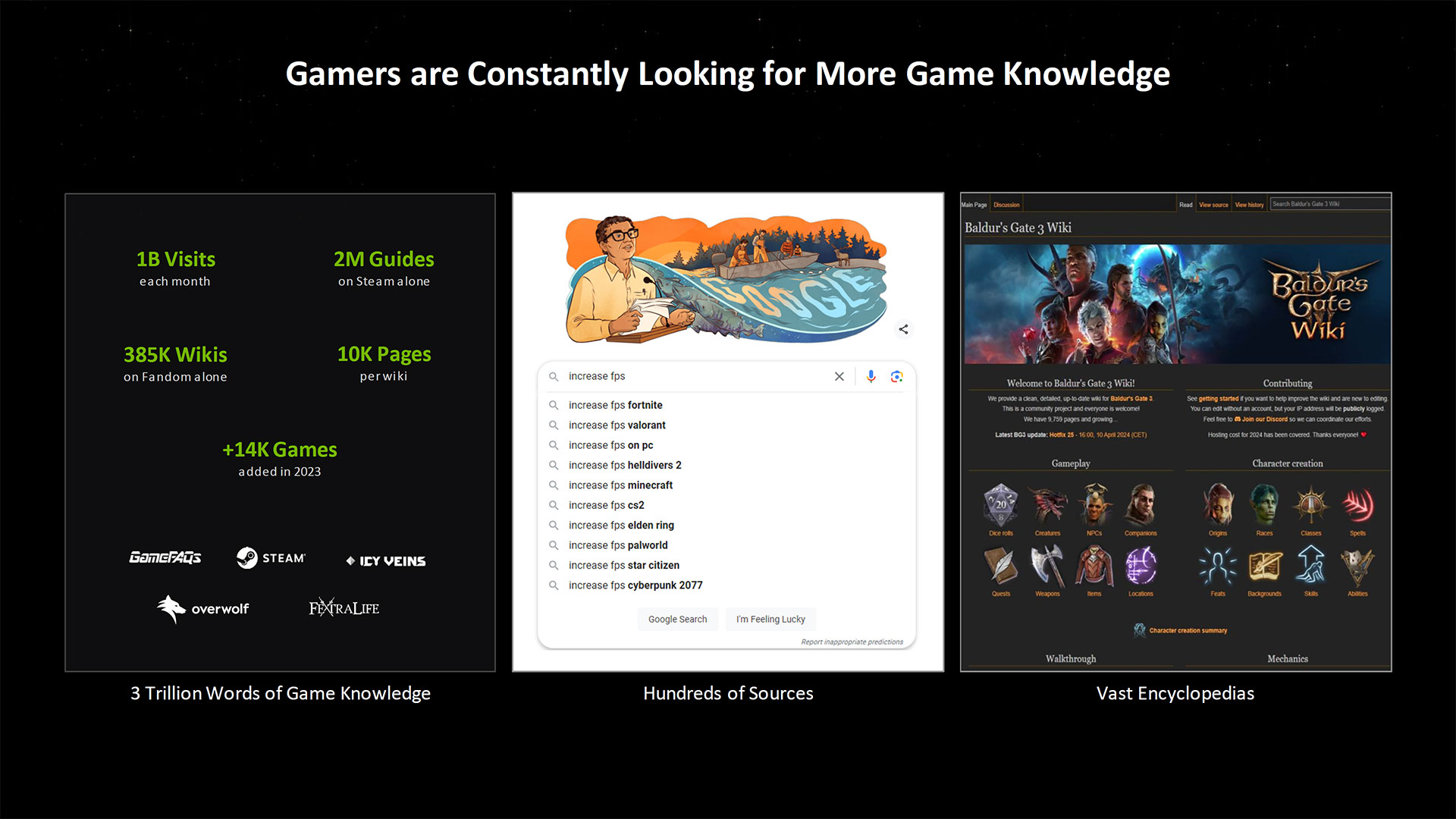

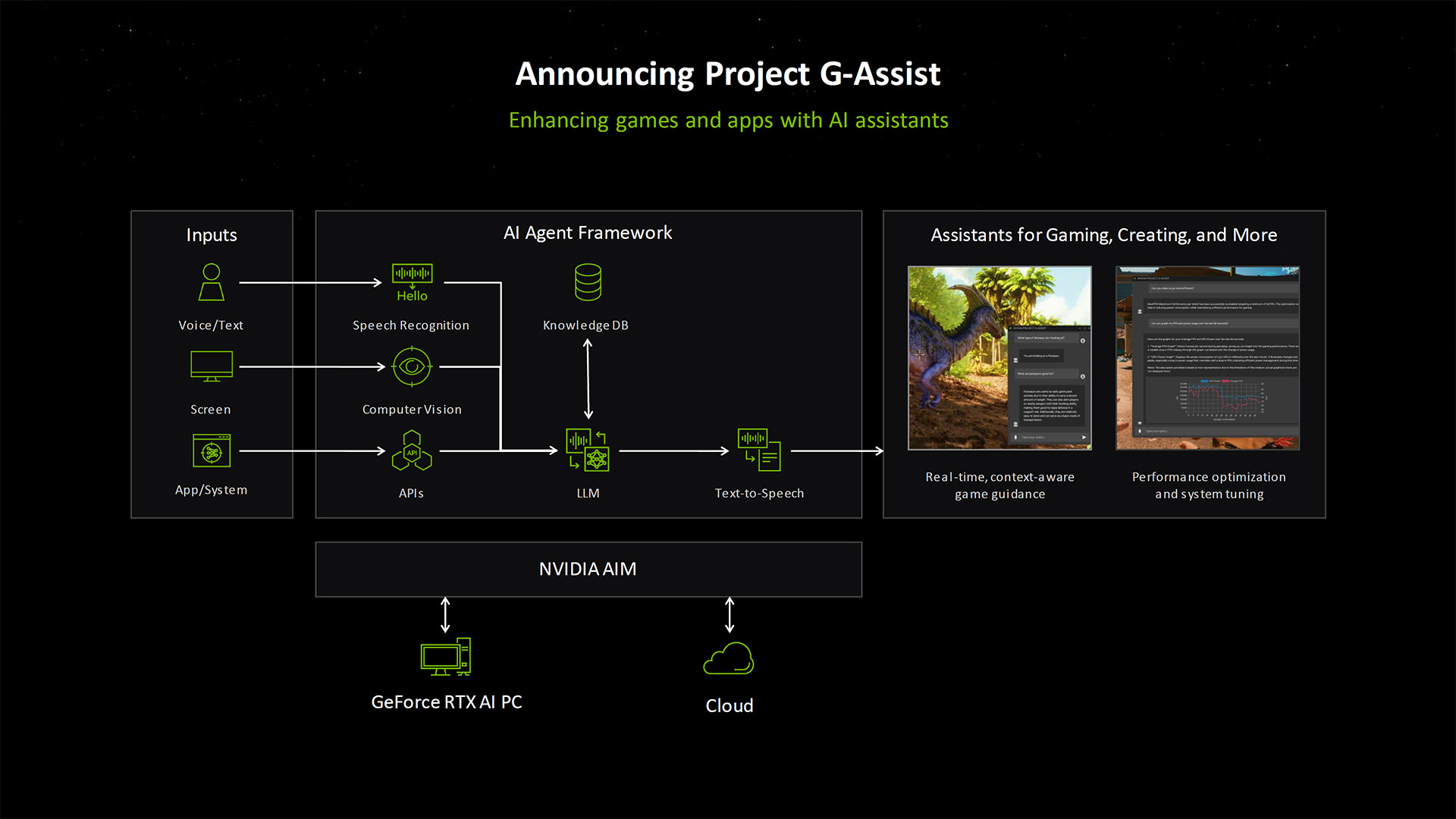

Nvidia also showed Project G-Assist, a tech demo of how all of these interrelated AI technologies could come together to provide ready access to game information. We've covered that in more detail separately.

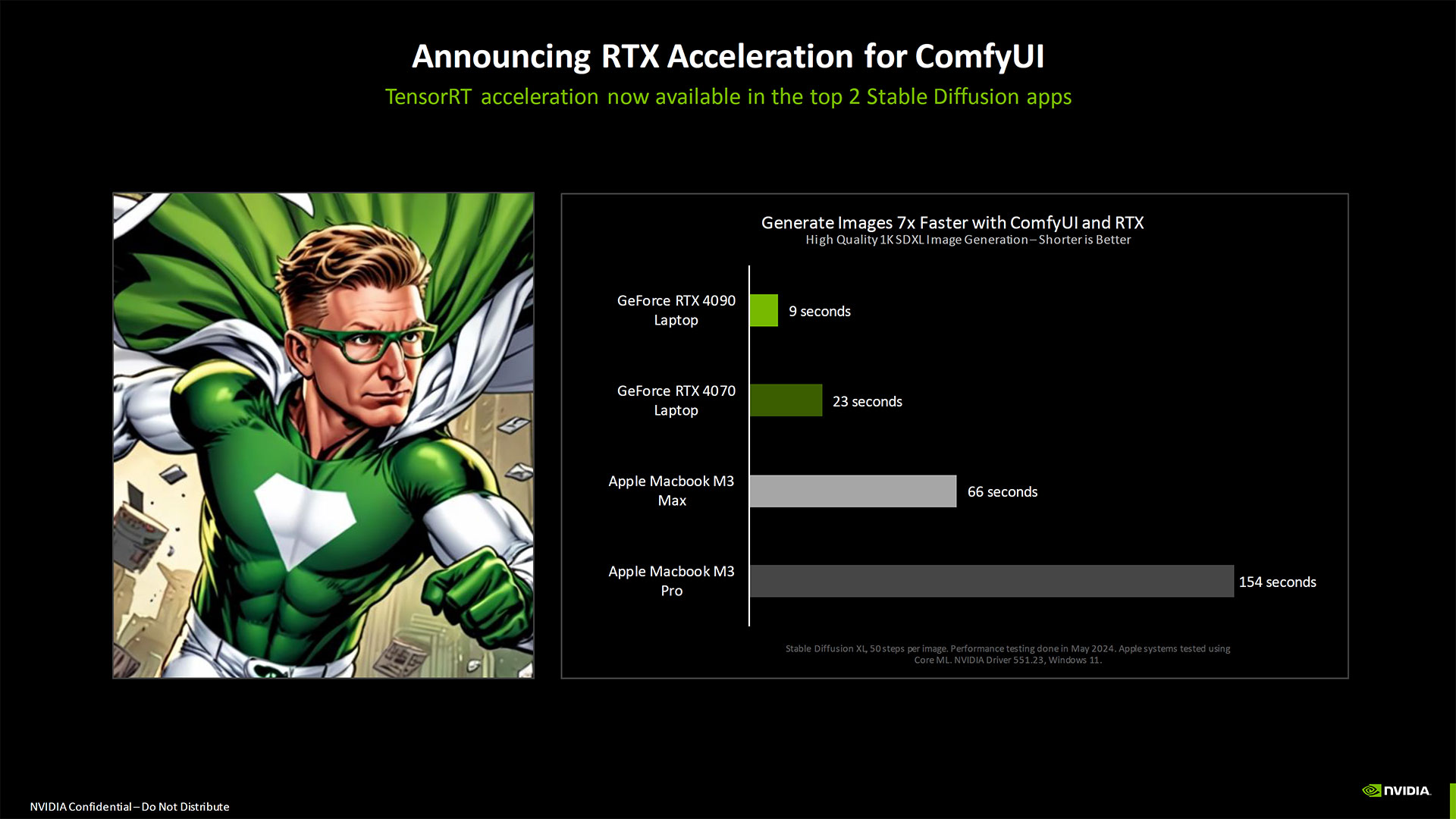

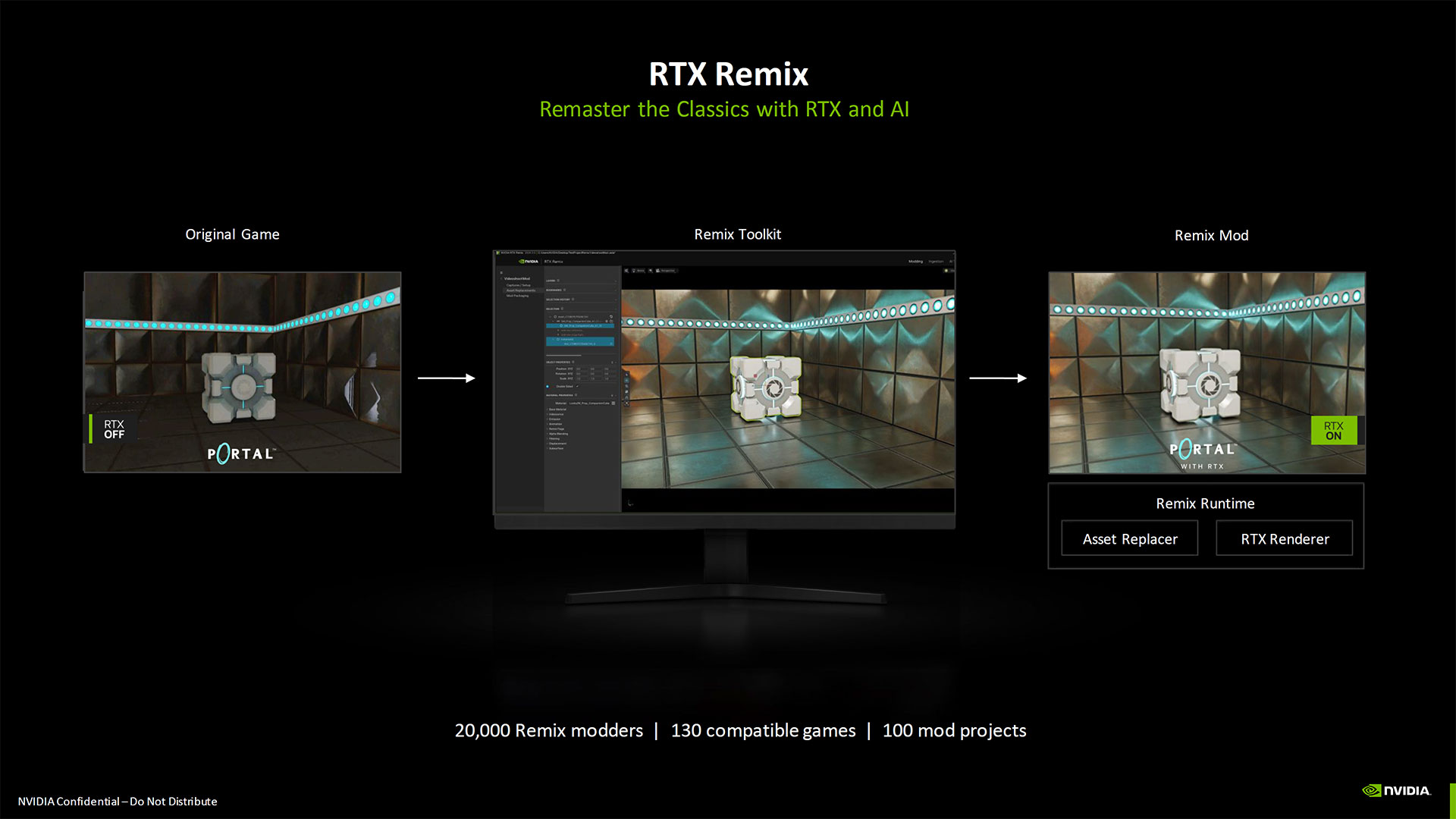

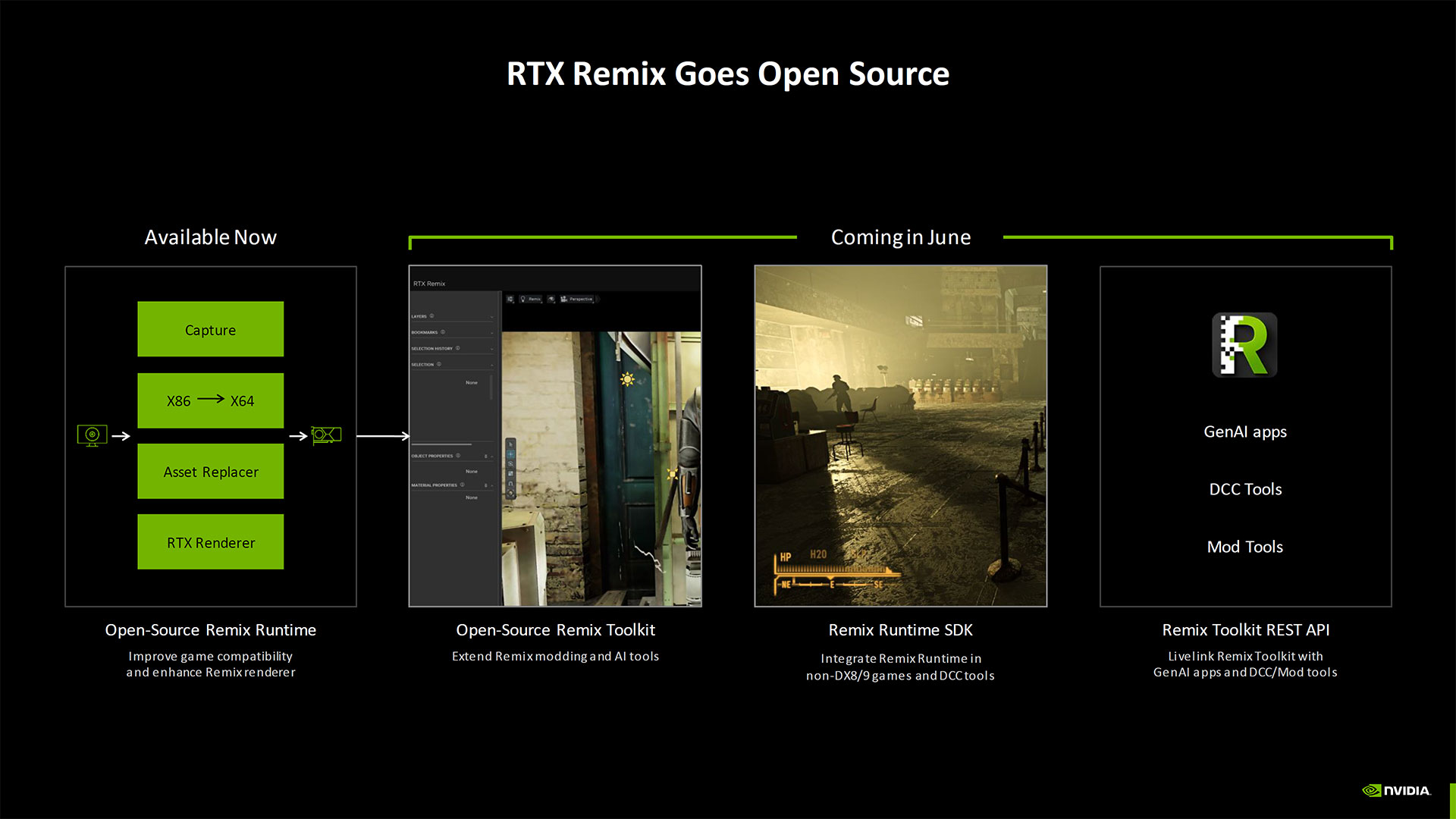

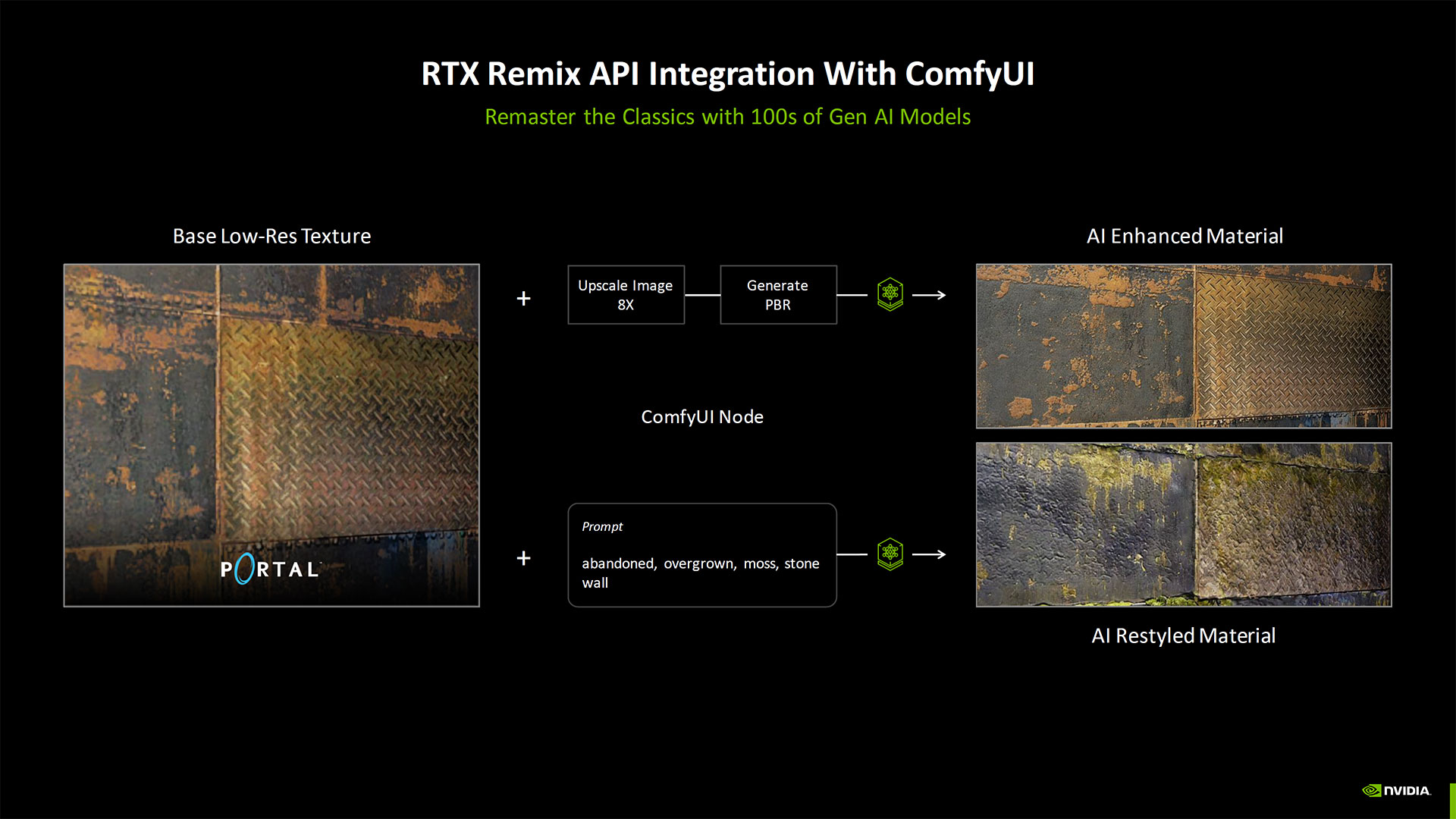

There was plenty more about AI that Nvidia talked about, as you can imagine. RTX TensorRT acceleration is now available in ComfyUI, a popular Stable Diffusion image generation tool. The ComfyUI integration is also coming to RTX Remix, so that modders can use it to quickly enhance textures in old games. You can watch the full Computex presentation, and we have the full slide deck below for reference.