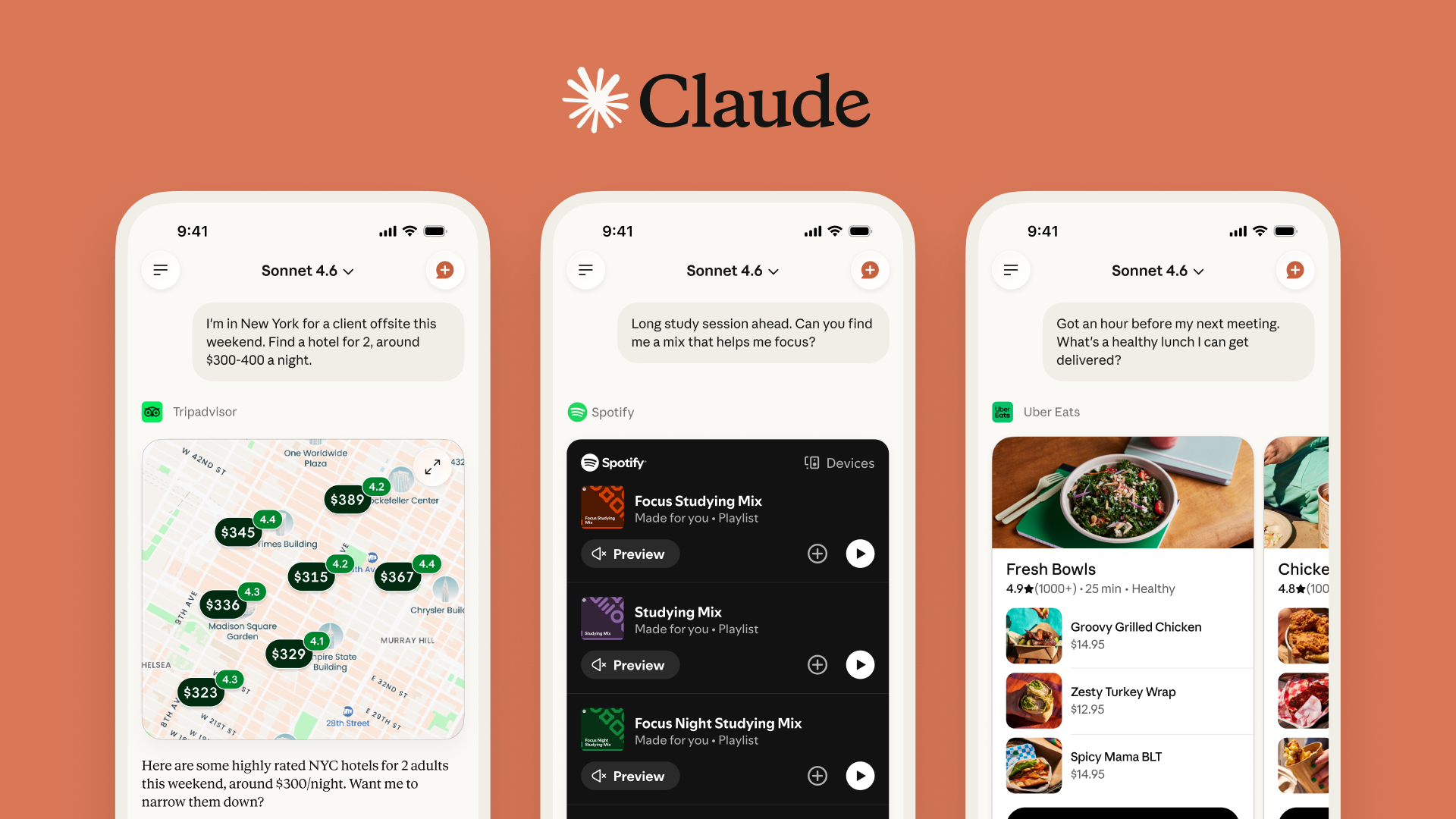

A growing number of Claude users are reporting unauthorized purchases tied to their Claude accounts, with patterns surfacing across Reddit and security reports.

According to findings highlighted by Frontiers in Computer Science and reported in The Guardian, modern phishing attacks are increasingly powered by automated, AI-assisted tactics, making account takeovers faster and harder to detect than ever.

This isn't a breach of Claude. It's something faster and much sneakier.

How the scam actually works

This attack doesn’t rely on breaking into Anthropic’s systems. Instead, it exploits how accounts, saved payments and gift subscriptions are currently handled. Scammers are gaining access using leaked passwords from past breaches or stolen browser sessions. In some cases, this happens through phishing emails or malware that captures login data without the user even realizing it.

But this is where things get sneaky: bad actors are using a "gift" loophole. Once inside, attackers don’t change your password or email, because that would raise alarms for you. Instead, they go straight to billing and send multiple gift subscriptions to external email addresses they control.

Since gifting often has fewer verification steps than account changes, it’s the fastest path to cash. From there, digital gift codes get delivered immediately, which means scammers can resell them on third-party marketplaces, often for crypto, before you even notice the charges.

Why this is happening now

This type of fraud isn’t unique to AI. We've seen prompt injection scams and similar hacks before, but now AI platforms are becoming a new target because of how quickly they’ve scaled.

Here’s where the gap is showing up:

- Limited payment verification: Some users report no secondary authentication (like a bank text code) for gift purchases.

- Trusted device loophole: If a hacker hijacks an existing session, activity can look “normal” to the system. Remember, the attacker never changes the password or username.

- Faster attack automation: AI-powered phishing and credential attacks are getting more efficient and harder to spot, especially if a user doesn't log in to their account every day.

In short, even though the tools are getting smarter, protections and security are still catching up. Just last week, even the most dangerous AI in the world got into the wrong hands.

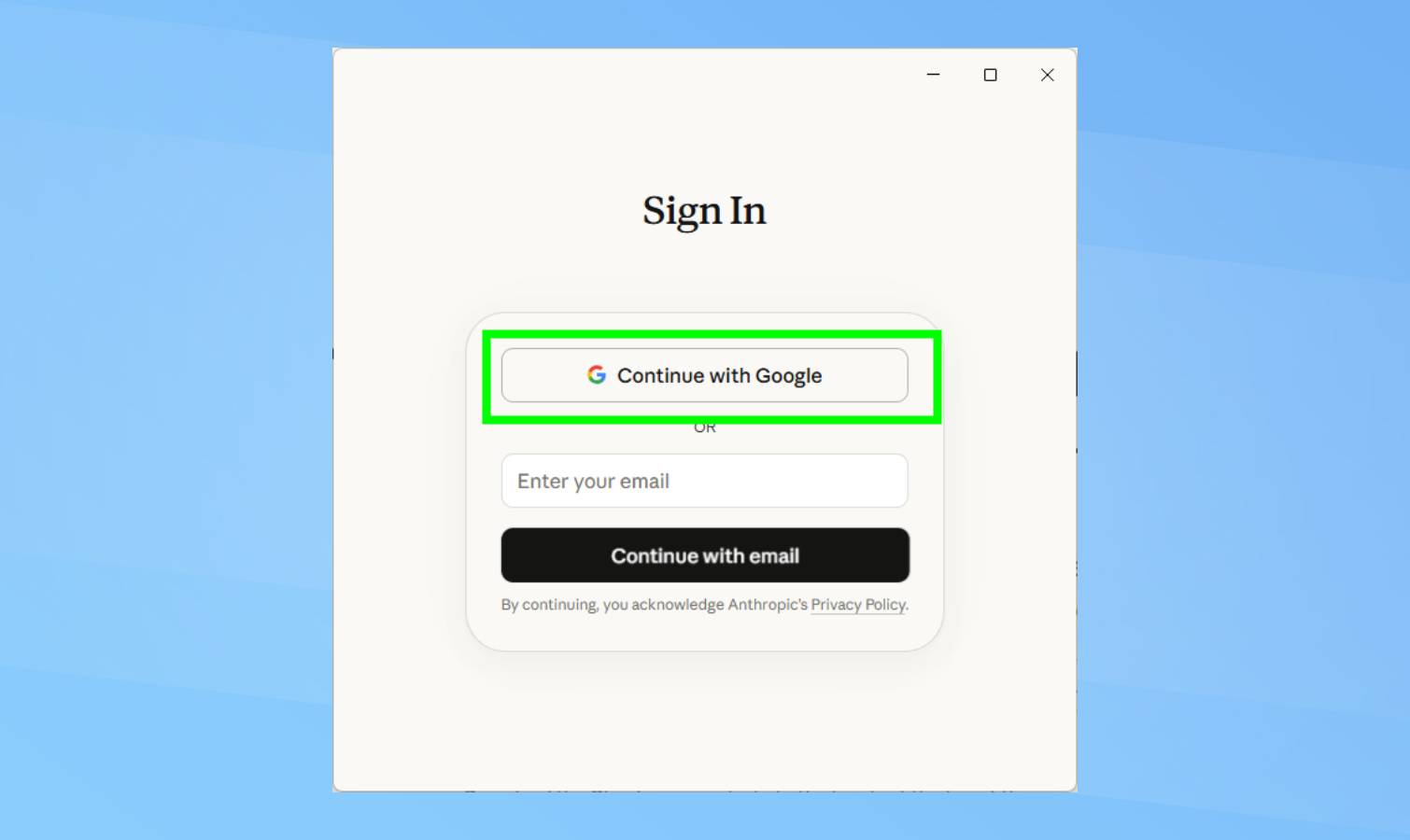

How to protect your account right now

If you use Claude (or any AI tool with saved payments), take these three steps to stay safe:

- Remove saved payment methods. Go to Settings > Billing and delete stored cards or payment options. Only add them when you’re actively making a purchase.

- Log out of all devices. I'm guilty of always staying signed in, but logging out forces a reset of active sessions, including any that may have been hijacked. If a scammer is already inside your account, this can cut them off instantly.

- Watch for “gift” confirmations. Check your email for messages like “Your gift has been delivered.” If you didn’t send it, contact your bank immediately, request a refund, then secure your account.
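If you're comfortable with a little code, that last check can be roughed out in a few lines of Python. This is only a sketch, not an official tool: it assumes you already have your email subject lines as a list of text strings, and `find_gift_confirmations` is a made-up name for illustration. Only the first phrase comes from the example above. The other two are guesses at what a confirmation might say, so adjust them to match the emails you actually receive.

```python
# Phrases that suggest a gift purchase was made from your account.
# Only the first comes from the article; the rest are illustrative guesses.
GIFT_PHRASES = (
    "your gift has been delivered",
    "gift subscription",
    "gift receipt",
)


def find_gift_confirmations(subjects):
    """Return the subject lines that mention a gift purchase (case-insensitive)."""
    return [
        subject
        for subject in subjects
        if any(phrase in subject.lower() for phrase in GIFT_PHRASES)
    ]


if __name__ == "__main__":
    # Made-up sample inbox, for demonstration only.
    inbox = [
        "Your gift has been delivered",
        "Weekly newsletter",
        "Receipt for your Gift Subscription",
    ]
    for subject in find_gift_confirmations(inbox):
        print("Check this one:", subject)
```

Anything it flags still needs a human look. A match only means the subject line mentions a gift, not that the purchase was fraudulent.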

The bottom line

This isn't a flaw with Claude, but it is a preview of what happens when fast-growing AI tools don't have enough protections in place. AI tools are rapidly improving, but the real risk is how quickly (and quietly) scammers can take control of your account.

To keep yourself safe, assume your saved payment methods are a liability, and consider removing them until Anthropic puts a 2FA system in place for gift subscriptions.