“And all them fat cats they just think it's funny

I'm going on the town now looking for easy money.” – Bruce Springsteen.

“Technology is too important to be left to technologists.” – Richard Susskind, How to Think About AI

“Sometimes we have consoled our hearts like this too: what we did not understand ourselves, we have explained to others.” – Nida Fazli

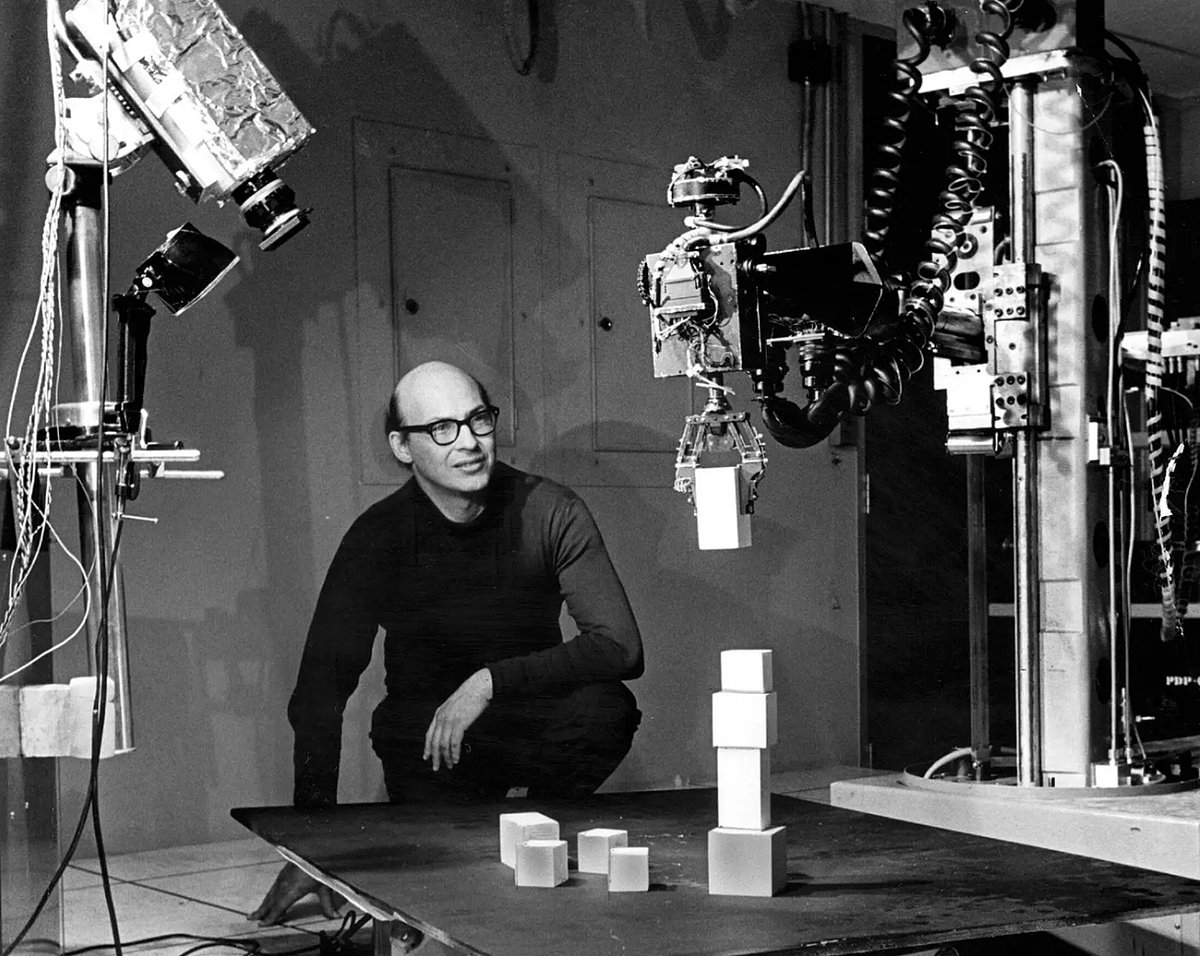

In 1956, John McCarthy and Marvin Minsky organised a summer-long workshop on artificial intelligence (AI) at Dartmouth College in Hanover, New Hampshire, United States.

The workshop was funded by the Rockefeller Foundation. The term AI was coined by McCarthy specifically for this workshop and has stuck since then, though what the term stands for has continuously evolved over the years.

In 1970, Minsky told Life magazine: “From three to eight years we will have a machine with the general intelligence of an average human being. I mean a machine that will be able to read Shakespeare, grease a car, play office politics, tell a joke, have a fight. At that point the machine will begin to educate itself with fantastic speed. In a few months it will be at genius level and a few months after that its powers will be incalculable.”

We are in 2026, and we are still a long way from what Minsky, one of the pioneers of AI, thought was achievable by 1978. Nonetheless, breathless and grandiose predictions about the future of AI continue to be made.

Artificial intelligence is being sold as the next great economic revolution. Governments, corporations and investors have raced to embrace it. But history suggests technological revolutions rarely transform economies as quickly as their evangelists claim.

AI will indeed reshape work, but its impact will likely unfold more gradually than predicted, unevenly and with real social costs. Before we get to that, let’s sample a few breathless predictions made over the years.

“AI is probably the most important thing humanity has ever worked on. I think of it as something more profound than electricity or fire.” – Sundar Pichai, then CEO of Google, in 2018.

“In 10 years, I think we will have basically chat bots that work for an expert in any domain you’d like. So you will be able to ask an expert doctor, an expert teacher, an expert lawyer whatever you need and have those systems go accomplish things for you.” – Sam Altman, CEO of OpenAI in 2021.

“AI to take over 80% jobs in 3-5 years.” – Vinod Khosla, venture capitalist, in 2026. (He said something similar in 2016.)

This basically tells us two things.

First, as David Edgerton, a historian of science, puts it in The Shock of the Old – Technology and Global History Since 1900: “Too often the agenda for discussing the past, present and the future of technology is set by promoters of new technologies.” The reason for that is simple: They have an incentive to do so, even though “the time of maximum use [of the technology] is typically decades away from invention”.

They will gain the most if their predictions turn out to be true. Also, in order to keep companies that are likely to buy their technology interested, they need to project a vision of the future that appeals to these companies, and that has to be an exciting one. So, techno-optimism works like any other demand-supply market.

To offer a parallel, it’s like those in the business of managing other people’s money (OPM). They are optimistic all the time irrespective of the state of the world and the stock market, simply because they make money when others keep investing through them.

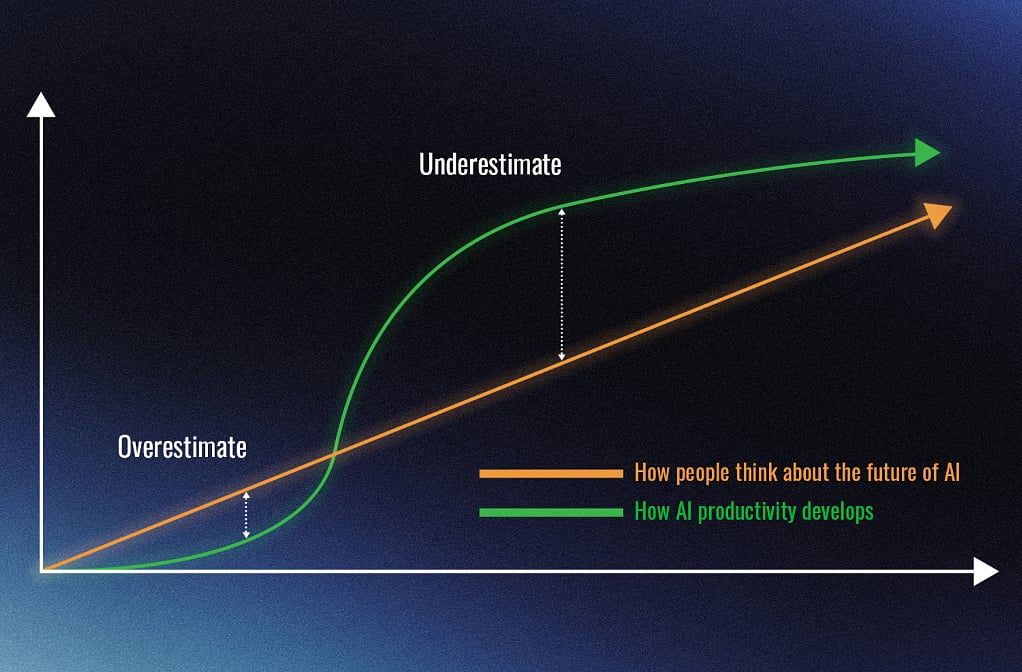

Second, Amara’s Law is at work.

As Roy Amara, an American researcher and futurist, observed: “We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.” Of course, there are no real definitions of the short run and the long run here.

The larger point is that all the buzz around AI is being created by tech entrepreneurs and the system that they fund.

The business media, which needs faces on its pages and these entrepreneurs at its conferences, is a part of this phenomenon. Corporates also like to talk about AI, given that it’s the latest buzzword going around. It helps them keep popping up in the media as well. The public relations ecosystem keeps the buzz going. And all this is happening simply because a lot of money is riding on AI.

In fact, a recent report by DSP Mutual Fund points out that big technology companies have spent more than $1 trillion on AI capital expenditure over the last five years. As the report points out, this is “the largest known and recorded spend in [the] history of [the] United States”.

The AI hype has thus become a self-reinforcing ecosystem: tech bros manufacture the “future,” the media packages and amplifies it, and corporations parrot the language to signal that they are part of that future, all to protect the massive piles of cash already bet on the table. When that much money is at stake, hype isn’t accidental; it’s structural.

Why the future arrives slowly

While the purveyors of new technologies like to talk it up, it actually takes time for any new technology to get adopted and spread through society. There are multiple reasons for it.

First, as Richard Susskind writes in How to Think About AI – A Guide for the Perplexed: “We should distinguish between the advance and the adoption of technology.”

Even though the technology itself may seem to be improving very fast and in a smooth, steady way, the way people actually start using it grows differently. Adoption does not move in a straight, rapid line – it usually happens more slowly and unevenly.

In simple terms, just because AI can be built and made to work does not mean people, firms and institutions will start using it right away. Business concerns, cultural attitudes, government rules, politics, or ethical worries can slow it down.

Second, it’s not just the performance of the technology that matters; multiple other bottlenecks need to be overcome.

Consider the period when desktop computers first began entering organisations. As Carl Benedikt Frey writes in The Technology Trap – Capital, Labor, and Power in the Age of Automation: “In the early days of automation, the training and retraining of employees often took longer than expected, and many companies did not fully appreciate the obstacles involved in getting machines, computers, and sophisticated software to work together effectively.” Things started to change in the 1990s once companies started redesigning business processes and workflows.

Or take the case of the driverless car, which has been talked up by technology entrepreneurs for years. Leaving the technology aside, and recognising that such cars have advantages, there are other serious problems that need to be thought through.

As Hector Macdonald writes in Truth – How the Many Sides to Every Story Shape Our Reality, if driverless cars “lead to the deaths of a few hundred people on our streets, that may be politically unacceptable, even if the total number of road deaths is lower than before”. This is a moral and a philosophical problem and not a technological one. Indeed, how do you think through something like this?

Third, sometimes the technology simply doesn’t make economic sense. Take the case of what happened after tractors were first introduced in the United States (US). Given that there was cheap labour available in the countryside, mechanising agriculture through the use of tractors did not make economic sense for a long time.

As Frey writes: “In 1921, The New York Times pointed out that there were still seventeen million horses on American farms but only 246,139 tractors and expressed concern that adoption was lagging.” This illustrates an important point: technological feasibility alone does not guarantee rapid adoption – economics still decides.

Fourth, sometimes the technology itself is a work in progress, and requires a lot of work before it can become a mass-used technology or a general-purpose technology, as economists like to call it.

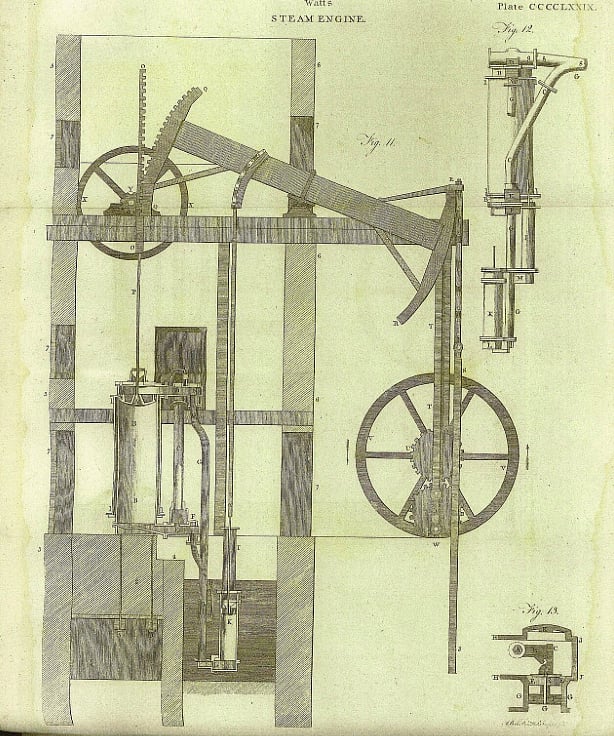

Consider James Watt’s steam engine, which ultimately revolutionised industrial production and was an important part of unleashing the Industrial Revolution. But it took time. It delivered its main boost to productivity some eight decades after it was invented.

As Frey writes: “When John Smeaton examined Watt’s invention, patented in 1769, he declared that ‘neither the tools nor the workmen existed that could manufacture so complex a machine with sufficient precision.’” Smeaton was an engineer.

In fact, as Andrew Leigh writes in The Shortest History of Economics: “When James Watt’s patent expired in 1800, British factories were still using three times as much water power as coal power.” It took almost a century to properly harness coal, which in a way delayed the economic impact of the steam engine.

Fifth, this is an essay and not a book, which prevents me from offering more examples, but the history of technology has been studied long and well enough to conclude that things take time.

Or as Jeremy Grantham writes with Edward Chancellor in The Permabear – The Perils of Long-term Investing in a Short-term World: “Every technological revolution prior to AI – going back from the internet to telephones, railroads and canals – has been accompanied by early massive hype and a stock market bubble as investors focus on the ultimate possibilities of the technology, pricing most of the very long-term potential immediately into current market prices.” The situation with AI is no different.

The authors offer the example of the railway line between Leeds and Manchester in the United Kingdom in the 1840s, when railway was the most talked about new technology: “For instance, during Britain’s railroad bubble of the 1840s, six railroads were planned between Leeds and Manchester when only one or two were needed.”

Of course, the railway bubble of the 1840s burst. A lot of money was lost. But the railway still went on to change the world. It’s just that it took time. There are so many other examples. The 1990s saw a huge bubble in internet/dotcom companies. The bubble burst in the early 2000s. But the internet is a very important part of our lives in 2026.

The world saw the first wave of electric cars in the 1900s. But mass adoption of these cars started only in the 2020s. Even so, internal combustion engine cars remain reasonably popular, especially in a country like India, where charging infrastructure is weak.

Or take the case of the personal computer. The initial hype around it started in the 1960s. But it only started gaining traction in the 1990s.

So, what does this mean in the context of AI?

Throughout 2025, AI dominated headlines and keynote slots – and the hype shows little sign of slowing down. There are bold predictions about productivity gains, promises of transformation across industries and almost every app now has some kind of AI-assisted feature.

As Emily M Bender and Alex Hanna write in The AI Con – How to Fight Big Tech’s Hype and Create the Future We Want: “Corporate executives in nearly every industry and mega margin-maximizing consultancies like McKinsey, BlackRock, and Deloitte want to ‘increase productivity’ with AI, which is consultant-speak for replacing labour with technology.”

The trouble is that this hype is not really translating into profitable outcomes for companies. As a piece published on Forbes.com in late February 2026 says: “Gartner research finds that only one in 50 AI investments delivers transformational value, and only one in five delivers any measurable return on investment.”

Further, as per The GenAI Divide: State of AI in Business 2025, a report published by MIT Media Lab’s Project NANDA: “Despite $30–40 billion in enterprise investment into GenAI… 95% of organizations are getting zero return.” The report draws on a systematic review of over 300 publicly disclosed AI initiatives and structured interviews with representatives from 52 organizations. Generative AI (GenAI) is a subset of artificial intelligence that creates new content, including text, images, code and audio, by learning patterns from large datasets.

Of course, low early returns are not unusual for general-purpose technologies. Electricity and computers also took years before businesses learned how to reorganize work around them.

Despite the low returns, the consultancy BCG says: “Corporations expect to double their spending on AI in 2026, from 0.8% to about 1.7% of revenues.” So, why are corporations spending more money on AI despite not getting much measurable return on investment?

First, because everyone else is. A chief executive cannot take the risk of not investing in AI. If AI does not succeed in the years to come, then their job is still safe, because they did what everyone else was doing and failed. That’s much better than not spending on AI and taking the risk of watching it succeed.

It’s like fund managers in the OPM business chasing hot stocks. They have to, because everyone else is, irrespective of whether the price of the stock is very high with respect to its earnings. Indeed, not investing in such stocks is a huge career risk which they can’t afford to take.

Second, the hype is so intense that there’s huge pressure on chief executives to show the world that they are doing something about AI. Like many simpler souls, they may have bought into a vision of the future that’s being spun by techno-optimists.

Third, large firms like the idea of cost-cutting through algorithms. Take the case of so many service companies. If you require any help these days, the first line of response comes from chatbots, even though they aren’t really up to the mark, and in many cases can’t offer anything beyond the most basic responses. Getting past the chatbot to a human takes a lot of time and effort. This lowers the service levels offered by a company, but that isn’t something companies seem to be bothered about.

As Eswar Prasad writes in The Doom Loop – Why the World Economic Order is Spiralling Into Disorder: “Rather than being frustrated by incompetent and unhelpful call center employees and customer service agents, one is more likely these days to encounter incompetent and unhelpful AI chatbots.”

Fourth, AI is a great negotiation tool, even if it doesn’t succeed. As Bender and Hanna point out, “just the existence of a possible low-cost replacement,” which AI can be, can be used to drive down wages of workers or not offer reasonable increments.

Fifth, as the Forbes.com piece referred to earlier points out: “The issue is that most organizations have treated AI adoption as a technology decision when it is fundamentally a leadership and governance decision.”

It’s like when companies started to use desktop computers a few decades back. The computer per se was ready to be used in organisations, but there were multiple other things that needed to be sorted out first.

To conclude, none of this means that AI will not reshape labour and job markets. Nonetheless, the lesson from history is simple: technological revolutions are real, but they are rarely as fast as their promoters claim. Railways, electricity, computers and the internet all arrived amid extraordinary hype and speculative excess. Many investors lost money, many companies failed, yet the technologies themselves eventually reshaped the world.

AI may follow a similar path. It could transform work, productivity and economic structures over time. But the transformation will be uneven, slower and messier than the breathless predictions suggest. In the end, the real story of AI may not be a sudden revolution, but a long, grinding evolution, one that unfolds over many years rather than in a burst of sensational headlines.

Read Part 2: Will AI destroy jobs? Here are the questions no one is asking

Newslaundry is a reader-supported, ad-free, independent news outlet based out of New Delhi. Support their journalism, here.