Microsoft has unveiled a new tool that aims to stop AI models from generating factually incorrect content, more commonly known as hallucinations.

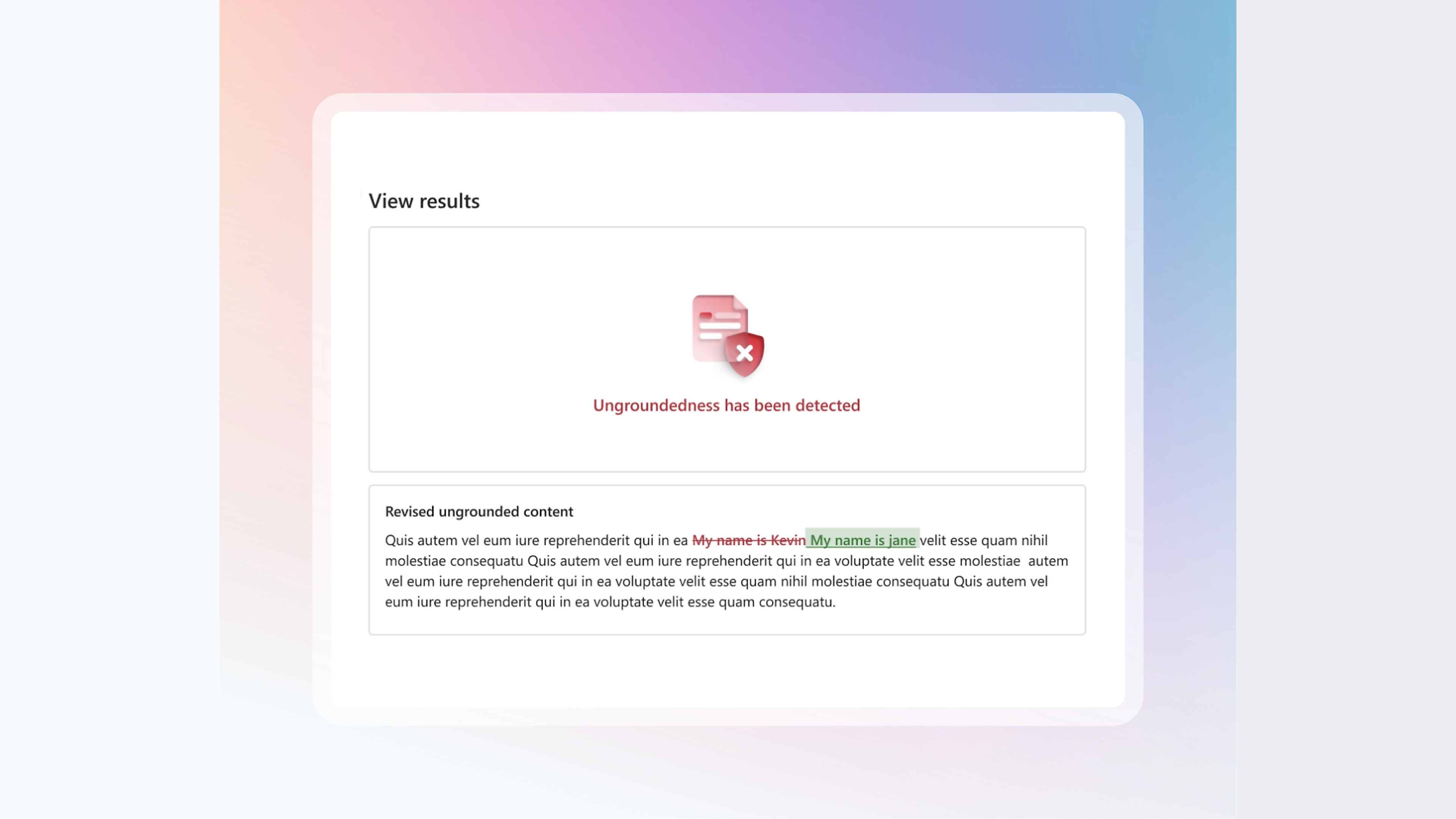

The new Correction feature builds on Microsoft's existing ‘groundedness detection’, which cross-references AI-generated text against a supporting document supplied by the user. The tool will be available as part of Microsoft’s Azure AI Content Safety API and can be used with any text-generating AI model, such as OpenAI’s GPT-4o and Meta’s Llama.

The service will flag anything that might be an error, then fact-check it by comparing the text to a source of truth in the form of a grounding document (e.g. uploaded transcripts). This means users can tell the AI what to treat as fact by supplying grounding documents.
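To make the idea concrete, here is a deliberately simplified Python sketch of a groundedness check. It is not Microsoft's implementation and does not call the Azure API: the function names (`tokenize`, `flag_ungrounded`), the word-overlap heuristic, and the 0.6 threshold are all assumptions chosen for illustration. It only shows the general shape of the process, in which each generated sentence is compared against a user-supplied grounding document and poorly supported sentences are flagged.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def flag_ungrounded(generated: str, grounding_doc: str,
                    threshold: float = 0.6) -> List[str]:
    """Return sentences whose words are poorly covered by the grounding document.

    Hypothetical heuristic: a sentence is flagged when fewer than
    `threshold` of its distinct words appear anywhere in the grounding text.
    """
    source_tokens = tokenize(grounding_doc)
    flagged = []
    # Naive sentence split: break after '.', '!' or '?' followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", generated.strip()):
        tokens = tokenize(sentence)
        if not tokens:
            continue  # skip empty fragments
        coverage = len(tokens & source_tokens) / len(tokens)
        if coverage < threshold:
            flagged.append(sentence)
    return flagged


# Illustrative data, invented for this example.
grounding = "The meeting was held on Tuesday. The budget was approved by the board."
answer = "The meeting was held on Tuesday. The CEO resigned after the vote."
print(flag_ungrounded(answer, grounding))
```

In this toy example, the second sentence of `answer` is flagged because almost none of its words appear in the grounding text. A production system would rely on far more capable language understanding than word overlap, and the Correction feature goes a step further by rewriting the flagged text rather than just reporting it.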

A stopgap measure

Experts warn that while the current approach may be useful, it doesn’t address the root cause of hallucinations. AI doesn’t actually ‘know’ anything; it only predicts what comes next based on the examples it was trained on.

"Empowering our customers to both understand and take action on ungrounded content and hallucinations is crucial, especially as the demand for reliability and accuracy in AI-generated content continues to rise," Microsoft noted in its blog post.

"Building on our existing Groundedness Detection feature, this groundbreaking capability allows Azure AI Content Safety to both identify and correct hallucinations in real-time before users of generative AI applications encounter them."

The launch, available in preview now, comes as part of wider Microsoft efforts to make AI more trustworthy. Generative AI has struggled so far to gain the trust of the public, with deepfakes and misinformation damaging its image, so updated efforts to make the service more secure will be welcomed.

Also part of the updates are ‘Evaluations’, a proactive risk assessment tool, and confidential inference. The latter will ensure that sensitive information remains secure and private during the inference process, which is when the model makes decisions and predictions based on new data.

Microsoft and other tech giants have invested heavily in AI technologies and infrastructure and are set to continue to do so, with a new $30 billion investment recently announced.
