Unless you’ve been living under a rock, or in a simulated Mars capsule in a desert somewhere, you may have noticed AI has taken over. From chatbots making pictures to catflaps refusing entry if your feline friend has a mouse in its mouth, artificial intelligence is watching.

However, we’ve barely scratched the surface of what AI can do, might do and will do for humanity over the next few years and Groq hopes to be at the centre of that revolution.

Formed by the side of a pool, Groq’s money maker is the Language Processing Unit (LPU), a new category of chip designed not for training AI models but for running them very fast.

The GroqChip is currently a 14nm processor and gains its performance benefit from scale, operating in the cloud as a cluster of well-structured units efficiently parsing data.

Access to very low latency AI inference is helping to remove some of the bottlenecks in the delivery of AI solutions. For example, text-to-speech and speech-to-text can happen in real time, allowing for natural conversations with an AI assistant, including the ability to interrupt it.

Creating a chip specifically for running AI

Many of the companies trying to compete with Nvidia in the artificial intelligence space are going after the training market, but Groq took the decision to focus on running the models.

"We've been laser-focused on delivering unparalleled inference speed and low latency,” explained Mark Heap, Groq’s Chief Evangelist during a conversation with Tom’s Guide. “This is critical in a world where generative AI applications are becoming ubiquitous."

The chips were designed by Groq founder and CEO Jonathan Ross, who also led the development of Google's Tensor Processing Units (TPUs), which were used to train and run Gemini. They are built for rapid scalability and for the efficient flow of data through the chip.

Heaps explained it as working more like a planned, gridded city where traffic knows where to go and can easily follow the layout, whereas other chips are like driving in Delhi, with complex road layouts and heavy traffic.

"Our architecture allows us to scale horizontally without sacrificing speed or efficiency... It's a game-changer for processing intensive AI tasks,” he told me.

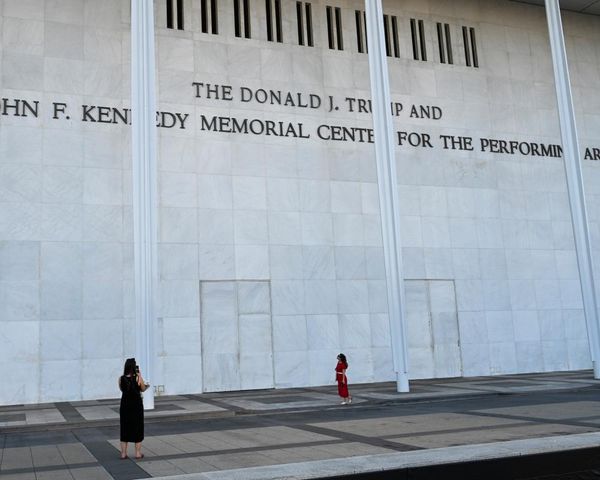

Thrust into the limelight

The company is being built on a set of core pillars, including tackling latency whilst ensuring the entire program is scalable. This is being delivered largely through its own cloud infrastructure, with more global data centers coming online this year or next.

While edge devices such as driverless cars could become viable when the chips shrink to 4nm in version two, for now the focus is purely on the cloud.

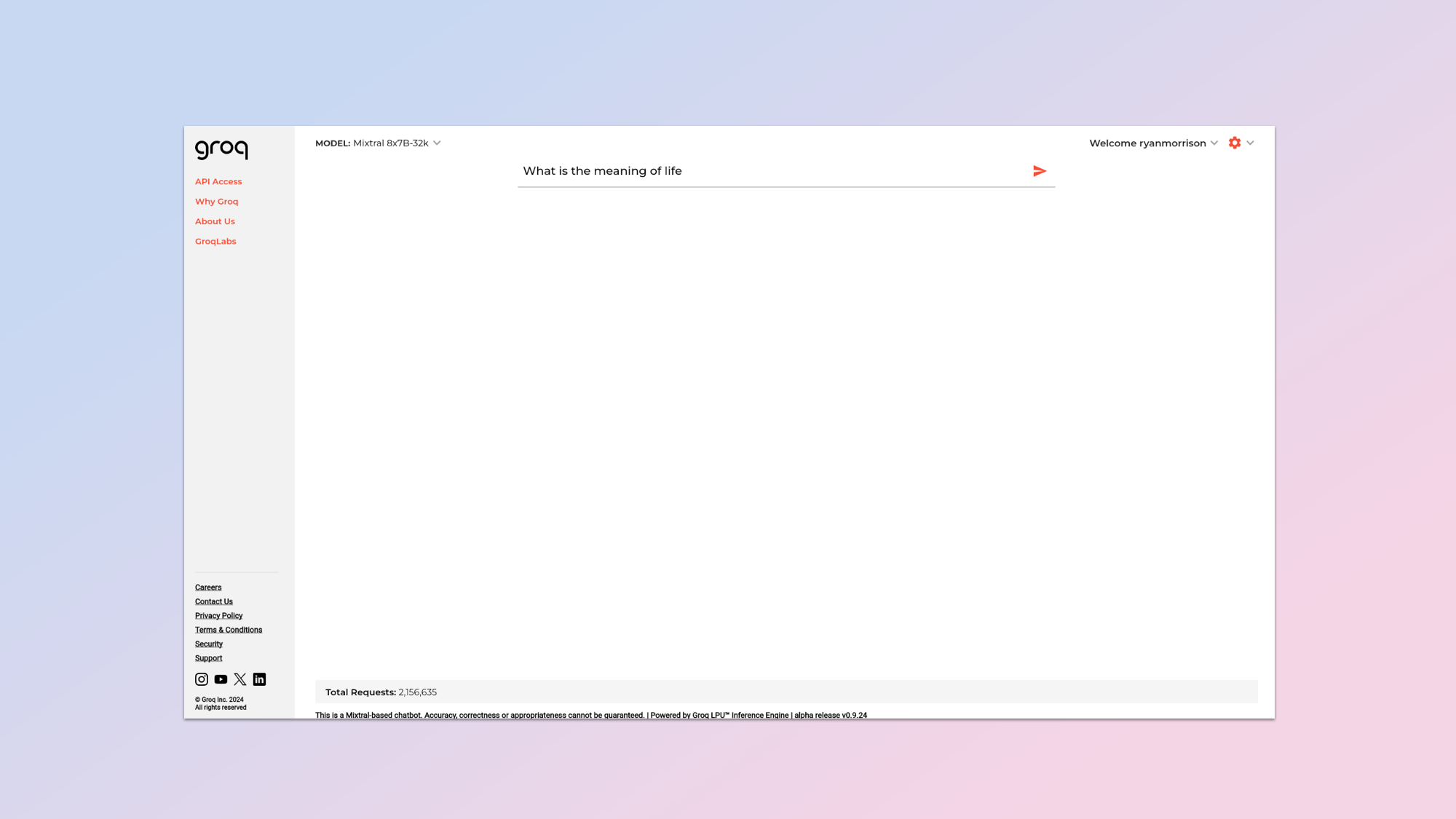

This includes access through an API for third-party developers looking to offer high-speed, reliable access to open source models from the likes of Mistral or Meta, as well as a direct consumer chatbot-type interface called GroqChat.

It was the launch of this public, easy-to-access interface that seemed to propel this six-year-old company into the limelight. The team had been working away in the background, including providing rapid data processing for labs during the Covid pandemic, but this was a pivotal moment.

Heaps told me that the discussion with Jonathan Ross was “why don't we just put it on there and make it so that people can try it.” This was off the back of internal experiments getting open source models like Llama 2 and Mixtral running on GroqChips.

“Going back even a month and a half ago we had a completely different website and you had to click three links deep to find it. And it was just kind of nested and it was sort of an experiment,” Heaps explained. “And then a few people hit it and said, you know, this is great, but gosh, why do you make me go through all these clicks?”

Ross told the team to make it the homepage. Literally, the first thing people see when visiting the Groq website. “It was a little scary,” Heaps admitted. “His goal was: I want there to be no website in regards to marketing pages. I only want it to be the chat.” So that is what they implemented.

What you can do with low latency AI

That's a strong-looking GroqRack™ right there, don't ya think? Serving up tokens faster than anyone. We're building A LOT more hardware and increasing capacity weekly. Scaling to be a token factory to help change the world of AI through the world's greatest inference engine. pic.twitter.com/l3q32XjC6b (February 27, 2024)

Low latency AI allows for genuine real-time generation. For now the focus has been on large language models, including code and text. We’re seeing up to 500 tokens per second, which is dozens of times faster than a human can read, and it's happening even on complex queries.
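To put the 500 tokens per second figure in context, here is a rough back-of-the-envelope sketch. Only the token rate comes from Groq's numbers. The words-per-token ratio and the reading speed are assumed rules of thumb chosen for illustration, so treat the result as an order-of-magnitude estimate rather than a benchmark.

```python
# Rough comparison of generation speed with human reading speed.
# Only TOKENS_PER_SECOND comes from the article; the other two
# constants are assumptions picked for illustration.

TOKENS_PER_SECOND = 500          # peak figure quoted in the article
WORDS_PER_TOKEN = 0.75           # assumed rule of thumb for English text
READING_WORDS_PER_MINUTE = 250   # assumed typical adult reading speed


def generated_words_per_second(tokens_per_second: float,
                               words_per_token: float) -> float:
    """Convert a token rate into an approximate word rate."""
    return tokens_per_second * words_per_token


def speedup_over_reader(tokens_per_second: float,
                        words_per_token: float,
                        reading_wpm: float) -> float:
    """How many times faster the model writes than a person reads."""
    reading_words_per_second = reading_wpm / 60.0
    generated = generated_words_per_second(tokens_per_second, words_per_token)
    return generated / reading_words_per_second


if __name__ == "__main__":
    ratio = speedup_over_reader(
        TOKENS_PER_SECOND, WORDS_PER_TOKEN, READING_WORDS_PER_MINUTE
    )
    print(f"Generation is roughly {ratio:.0f}x faster than reading")
```

Under these assumptions the model produces text around 90 times faster than a person reads it, which is consistent with the "dozens of times" description. A slower reader or a different tokenizer would shift the number, but not the overall picture.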

New models will be added soon, and after that the team will work on delivering the same rapid generation of images, audio and even video. That is where you’ll see the real benefit, including potentially real-time image generation even at high resolutions.

The other significant advantage is being able to find a single piece of information within a large context window, although that is for future versions, which could even offer real-time fine-tuning of the models, learning from human interaction and adapting.

This could then allow for a true open world game, something akin to the Oasis in Ernest Cline's seminal novel Ready Player One. Live AI rendering and re-training would allow for the sort of adaptability required to reflect so much interaction and change from multiple players.

The pivot to running AI models was a side project

Groq has been around since 2016 with much of the first few years spent perfecting the technology. This included working with labs and companies to speed up run-time on complex machine learning tasks such as drug discovery or flow dynamics.

The pivot to running LLMs coincided with the rise of ChatGPT and the leak of Meta’s Llama large language model. Heaps told Tom’s Guide: “We literally had one engineer who, who said, I wonder if I can compile [Llama]. He then spent 48 hours not getting it to work on GroqChip.”

What took most of the time was actually removing much of the material put into Llama to make it run more efficiently on a GPU, as that “was going to bog it down for us,” said Heaps. He added: “Once he got all that scrubbed out, because we don't use CUDA libraries or kernels or anything, we were like, ‘oh, we can run llama’. So we've been using it internally since then.”

Over the next few months they started to integrate other models and libraries and, while only Mixtral and Llama 2 are available on the public Groq interface, others, including audio AI like text-to-speech generators, are being actively tested and converted to run on GroqChips.

One thing we can expect to see is significant disruption to a tech space that is already disrupting the entire technology sector. We’re seeing a rise in AI PCs and local hardware, but with improved internet connectivity and the latency issue solved, are they still needed?
