I’ve gotten into the habit of tinkering with any and every chatbot that’s available.

ChatGPT, Claude, Perplexity and Gemini get plenty of usage out of me when I want to ask general questions, brainstorm new ideas, develop productivity routines, and even learn a thing or two. Google’s NotebookLM has become one of my favorite research assistants, and the company’s Labs hub has become a fun digital playground full of clever AI tools.

Like most avid AI users, I stand by the benefits of chatbots when it comes to assisting us in everyday activities. But I also keep the many hazards that come with using AI in the back of my mind every time I choose to have a lengthy chat session with one of my go-to chatbots.

With these seven rules to abide by, I’ve taken the precautions needed to protect my most sensitive information, avoid trusting chatbots as a primary source of information and more. Be sure to add them to your daily AI tool regimen if you haven’t already.

1. Keep personal information to myself

This first rule is the most obvious one, yet I still come across stories where chatbot users input their most personal information and believe that their data is always secure.

As someone who’s been targeted by credit card scams and been notified countless times about being caught in company data leaks, I refrain from sharing my most delicate details with AI. Log-in credentials, financial information, tax documents, company-issued memos and anything else I’d hate to see exposed in outside channels are among the types of info that I never type into a chatbot.

2. Triple-check the information given by chatbots

When I use AI tools to research a topic or learn more about a news story, I never take a chatbot's response at face value. My background in journalism — instilled in me from an early age — has made sourcing and fact-checking second nature. So I make a point of verifying everything an AI tells me, regardless of the subject.

That said, I give credit where it's due: Gemini, Claude and Perplexity all do a commendable job of citing their sources, which makes the fact-checking process significantly easier.

3. Don’t let it take the place of critical thinking skills

I never want to fall into the trap of relying on AI so much that I stop using my own approach to solving problems.

Even though I’ve tapped into ChatGPT and Gemini to review my resume/cover letter, offer suggestions on how to approach responding to an important email and brainstorm destination spots for future vacations, I take their advice while also relying on my own know-how to finalize my decisions. I’ve gotten far with my own intuition and I’ll continue to do so, even with the help of AI speeding up certain processes in that regard.

4. Keep an eye out for “hallucinations”

AI is prone to listing details that sound right, even as a gut feeling tells you that the info may be incorrect.

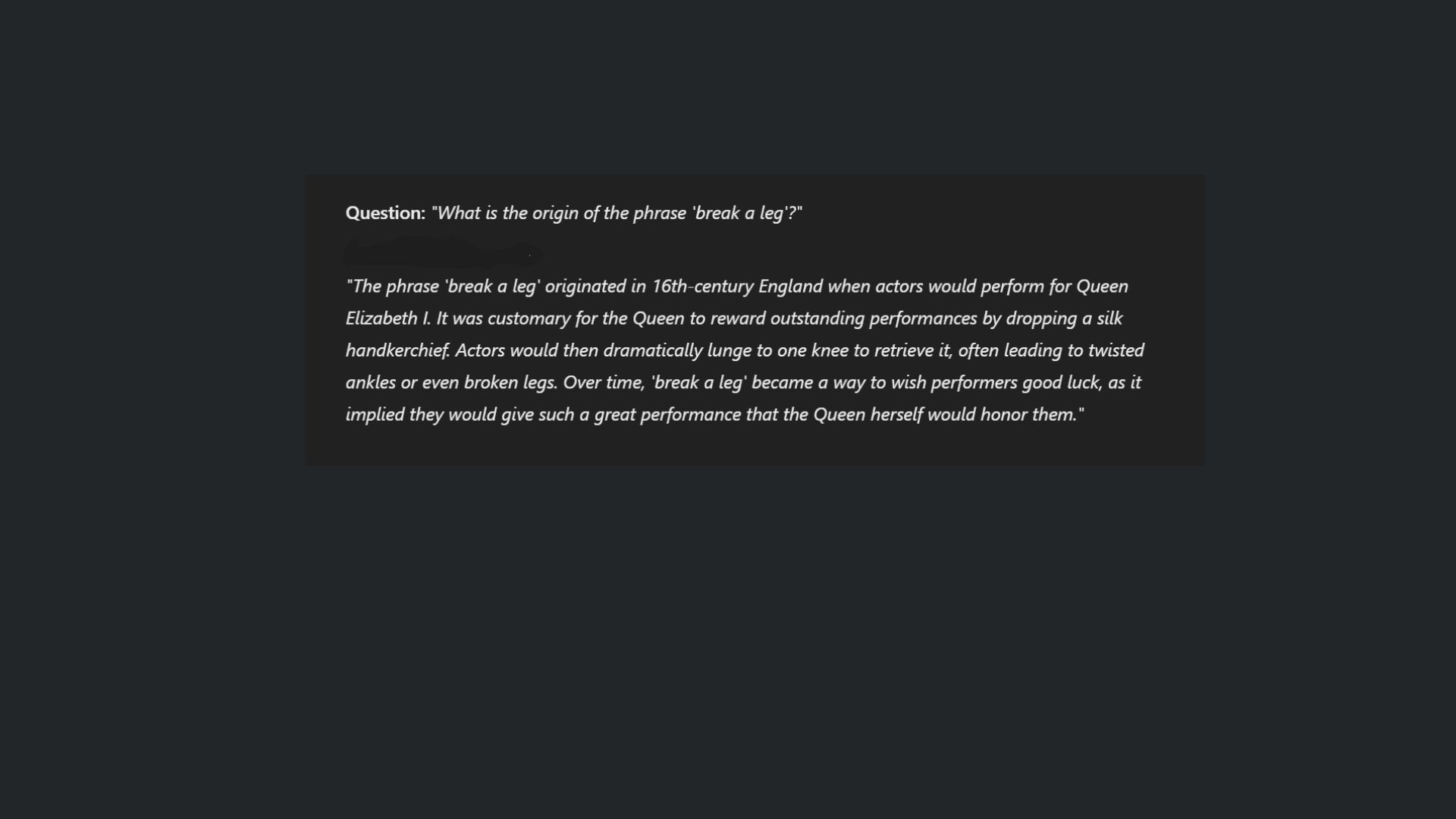

ChatGPT is quick to tell everyone that it can suffer from hallucinations, which are self-assured responses from an AI model that end up being false, illogical, or not supported by training data. I keep a close eye out for gaps in logic in the information given to me by a chatbot, while also looking for instances of AI tools creating their own authors and academic citations.

And when it comes to niche topics, I tend to refrain from asking chatbots about them (I don’t think ChatGPT can break down the intricacies of Kingdom Hearts’ confusing storyline just yet…).

5. Understand when an AI is being sycophantic

A 2026 study led by researchers at Stanford University pointed out that chatbots can be overly agreeable when asked for advice, even going so far as to affirm a user’s negative behaviors.

That, in turn, results in those same users convincing themselves that their actions are right since the AI is agreeing with them, and they become less empathetic over time. I’m not one prone to telling AI about all my personal problems and asking for solutions.

But on the rare occasion that I do, I keep that Stanford study in mind while being sure to run a chatbot’s suggestions by my most trusted friends and family members to get their more reliable input.

6. Be aware of and use safety guardrails

Many of the chatbots I use have privacy settings that I activated the first time I used them. Temporary chats, privacy modes, and deleting past chats altogether are the methods I use when I don’t want my chats used for AI training.

For first-timers who want to activate those modes for themselves, it’s as simple as looking up where to find an AI tool’s settings, locating the controls that let you manage how a chatbot uses/saves your data and toggling the ones that keep your chats/information secure.

7. Don't circulate AI-produced content misrepresented as human-made

It took me a while, but I’ve gotten better at recognizing AI-generated art and videos.

The telltale signs that always stick out to me are extra fingers or limbs attached to a subject in the picture/video, off-looking lip-syncing, misspelled text on background signs and iffy facial expressions. I realize some generated pictures and videos feature a watermark that notifies everyone that they’re made by AI, but most of the ones my older family members share on social media don’t.

I try my best to point out the fake ones and help the senior citizens in my circle get better at spotting them, too.

The takeaway

The phrase “better safe than sorry” has stuck with me since I was a kid—and it’s a mindset I now bring to every interaction with AI tools. Every time I start a new chat, I keep those seven rules in mind.

Some are common sense. Others are specific to how chatbots work. But together, they’ve become a simple way to stay protected.

As AI becomes part of everyday life, these are the rules worth keeping in your digital back pocket.