AI will save us or be the end of us. That's not fact or even an opinion; it's a TL;DR reduction of the very real tension between proponents of AI and those who fear it.

Interestingly, sometimes that tension resides in a single person. It is quite fair and reasonable to use ChatGPT for basic deep dive data searches and for quick answers on how to talk to an uncooperative child, but to also fear that perhaps that same AI knows too much about you and might, in its own agentic way, start to act on your behalf and do things you never intended. At scale, we worry about AI controlling weapons or even launching a catastrophic war.

There is, obviously, a continuum between what AI can do right now and what it might be able to do in 6 to 18 months. No one can say for sure what that destination is or what comes after it, but the twin thoughts of hope and deep anxiety will persist right through to the moment when we realize AI is thinking thoughts and making moves.

These are not new ideas; far from it, in fact.

Seventy-five years ago, Alan Turing, arguably the father of Artificial Intelligence, offered a stark warning about thinking machines: "Once the machine thinking method had started, it would not take long to outstrip our feeble powers. At some stage, therefore, we should have to expect the machines to take control."

Turing is credited with proposing a method, or a "test", to determine when a machine or program is no longer distinguishable from a human by a human. Spend a minute or two with Gemini, ChatGPT, Grok, Copilot, or Claude, and you'll know we've already exceeded the parameters of that test. These digital robots sound like us.

The call's coming from inside the house

Decades after Turing, but still a few years before our current AI revolution, those who are now building these AI platforms were already sounding the alarms. From the godfather of AI, Geoffrey Hinton, to Sam Altman, who, in 2023, described the AI worst-case scenario as "lights out for all of us," few people, even among those closest to the technology and the development of these vast and powerful models, are immune to scaremongering.

Consumers are also trending in the wrong direction. A recent Pew study found that "50% of Americans are more concerned than excited about the increased use of AI in daily life." Researchers added that the number of people who are more concerned is actually increasing year-over-year.

The rapid development of AI models and their increasingly agentic capabilities have surely not only accelerated these fears but also spread the scariest rhetoric about how AI will consume all our jobs or drive autonomous weapons to kill us.

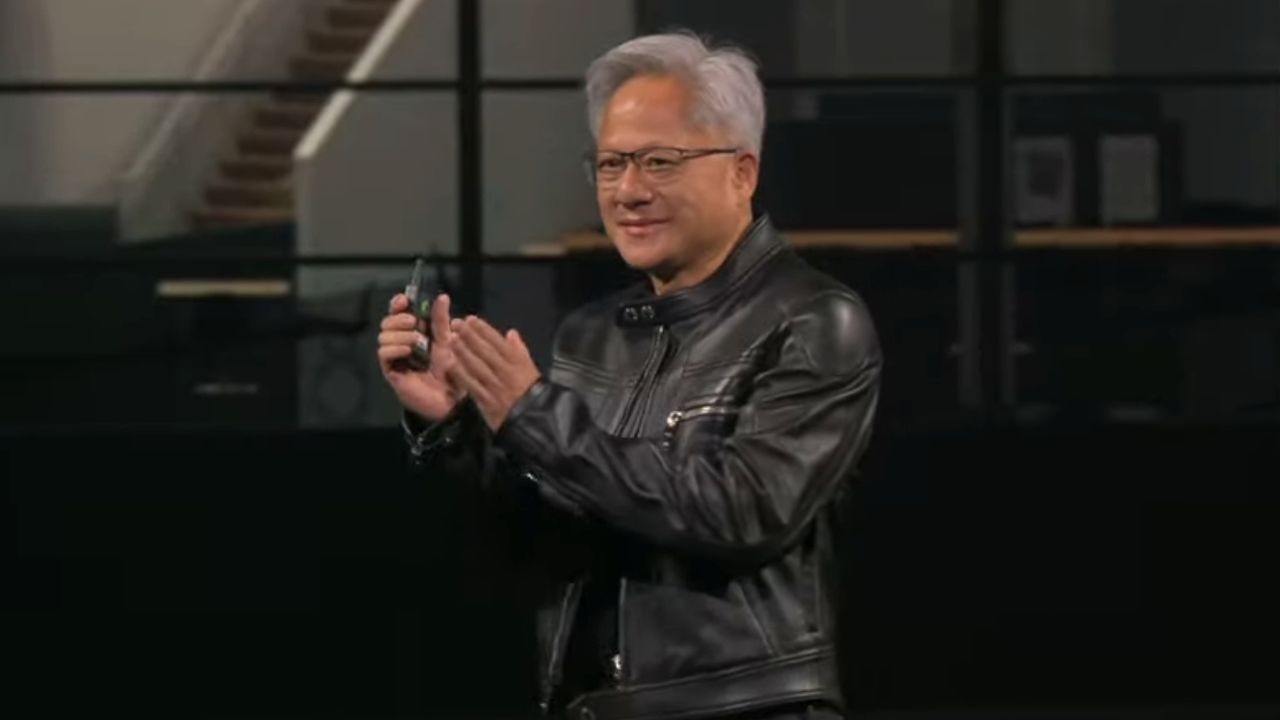

This week, one of the chief architects of AI's stunning rise, Nvidia CEO Jensen Huang, said it has to stop.

AI facts, not irrational fantasy

Huang, who had just spent almost three hours extolling Nvidia's new Vera Rubin AI Platform at its GTC Conference keynote in California, sat down for a lengthy interview with Stratechery, where he offered a stark warning.

Huang was talking about, among other things, efforts to continue selling H20 AI accelerators in China, something the Trump administration originally blocked before he convinced it otherwise.

The interviewer asked what Huang learned from his time in Washington, D.C., and Huang noted how deeply "all the doomers were integrated into Washington D.C."

He pointed to "incredible stories," calling them "inventions" that scare policy makers.

For Huang, it is critical to understand that these tools and platforms are real (and doing real work) and not "some kind of a mystical science fiction embodiment."

"I don’t like it when doomers are out scaring people," he told Stratechery. "I think there’s a difference between genuinely being concerned and warning people versus...creating rhetoric that scares people."

Obviously, as the company that's selling most of the chips helping generate new models and even answering prompts in the cloud, Huang has a vested interest in AI's survival and growth. He also has a point, though.

Rational acceptance

AI, like so many fast-paced, society-shaking innovations, is neither all good nor all bad. As with the internet or the Industrial Revolution before it, we'll make great strides with AI, but we'll also go through significant pain: job changes and losses, and mistakes made by AI or by people who put too much trust in AIs that know how to act confidently without being right.

Other things are true, though: AI will play a part in scientific and medical breakthroughs. It will revolutionize work and maybe even play. And it won't go away.

Fear of AI is not the same as AI regulation or restriction. Even the doomsayers in Washington know that this is a race the US cannot afford to lose. China will not slow down. It will likely pay even less attention to safety and guardrails. If we listen only to our darkest thoughts about AI, China will win, and then our worst fears about AI will be realized not by models built in the West, but by those created by our chief adversaries.