- Google Vids platform now includes Veo 3.1 AI video and Lyria 3 AI music models, among other upgrades

- The update is designed to make AI video creation easier and more useful for ordinary people

- The investment in Vids comes as OpenAI pulls back from Sora’s consumer-facing AI video platform

Sora's abrupt vanishing trick has made plenty of people wonder about the future of AI video production. Nonetheless, Google is doubling down on the idea with a set of major upgrades to Google Vids, its browser-based AI video creation toolkit. Vids was once aimed more at business and enterprise users, but the latest batch of updates is pitched squarely at the average dabbler in AI production.

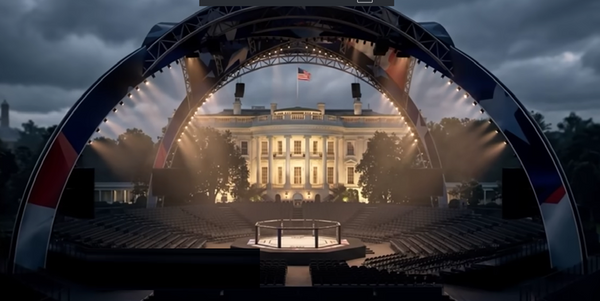

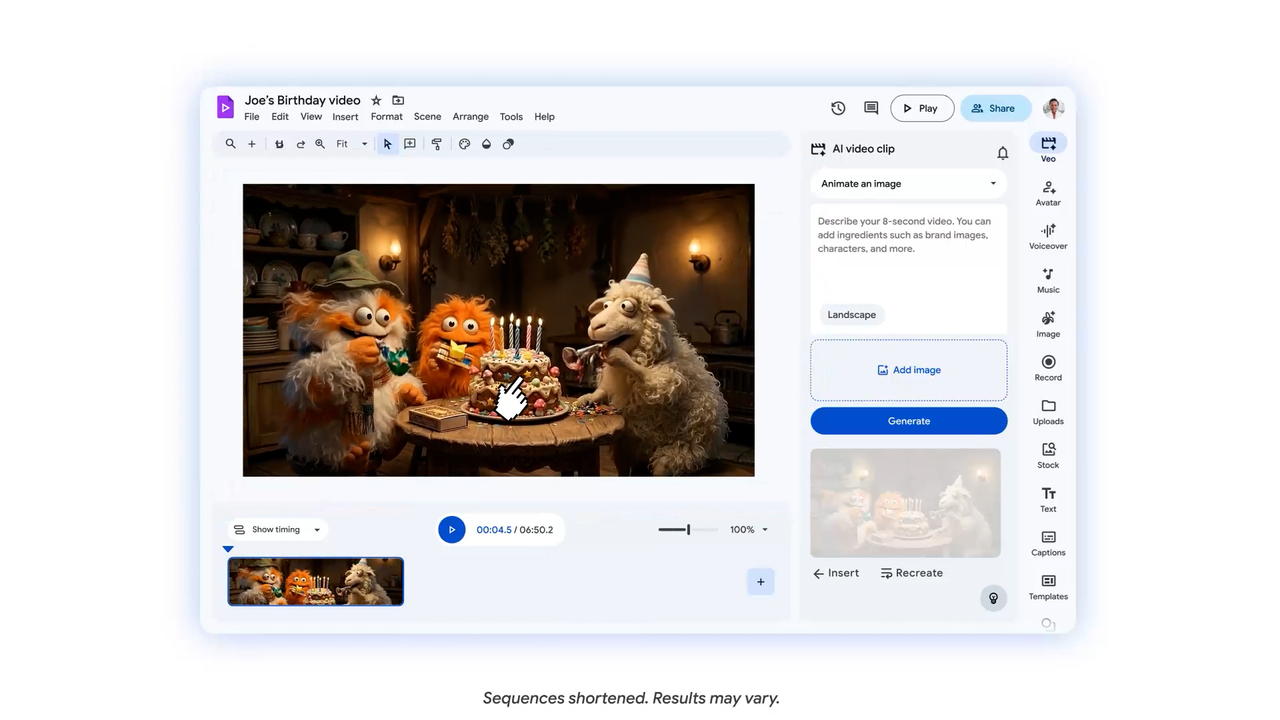

The most notable addition is Veo 3.1, Google’s latest video generation model, now built directly into Vids. Anyone with a Google account can generate AI video clips at no cost, with ten generations per month included for personal users. Google pitches it as a way to make animated flyers for parties, greeting cards, or other fun projects. It is not meant as a tool for making a cinematic masterpiece.

For most people, Veo 3.1 is less interesting as a feat of machine creativity than as a shortcut. If you want a cheerful clip to open a family slideshow, a visual for a school project, or a quick social post for something you are promoting on the side, the hardest part is often not the idea itself. It is making the thing look like more than an elaborate PowerPoint.

Sing-along AI

The same goes for AI-produced music. Google Vids is integrating the Lyria 3 and Lyria 3 Pro models, though they aren't as widely available as Veo 3.1. Only Google AI Pro and Ultra subscribers can use them to generate custom soundtracks ranging from 30-second clips to three-minute tracks.

Unless you have a talent for composition or music editing, the audio addition is more than a small flourish. Plenty of homemade videos are ruined by the soundtrack, even if the visuals are great. A slightly sentimental family montage can survive mediocre transitions. It cannot survive a bad royalty-free ukulele track.

Vids also uses AI to generate the look and voice of its "avatars." These customizable digital characters can be directed to behave however you wish in a video while keeping a consistent look and voice across scenes. You can change an avatar's setting, outfit, and even the props it interacts with simply by typing out a request.

It is arguably the feature deepest in the uncanny valley. But if you can live with that, it is also a clear example of Google trying to make AI media creation feel like just another software tool rather than a novelty.

Exit Sora, enter Veo for everyone

While OpenAI may be stepping back from the idea that everyday users want a separate destination just for AI-generated video experiments, Google’s move suggests the company thinks people might very much want AI video features embedded inside a bigger platform.

Google is also scaling this aggressively. AI Ultra and Workspace AI Ultra users can now generate up to 1,000 Veo videos per month, which is not the kind of number you attach to a casual side feature. That is “we think this is becoming part of how people work and create now” territory.

And that is really the larger story here. Google is not just adding AI bells and whistles to a video app; it is making a specific bet about what the average person actually wants from these tools. Not a standalone playground for abstract generative experiments, but a set of embedded features that help turn everyday moments into finished, shareable things with less effort.

That may also explain why this update feels better timed than it first appears. If OpenAI’s consumer retreat from Sora suggests that AI video as a destination was a harder sell than expected, Google’s approach points to a more sustainable version of the category.