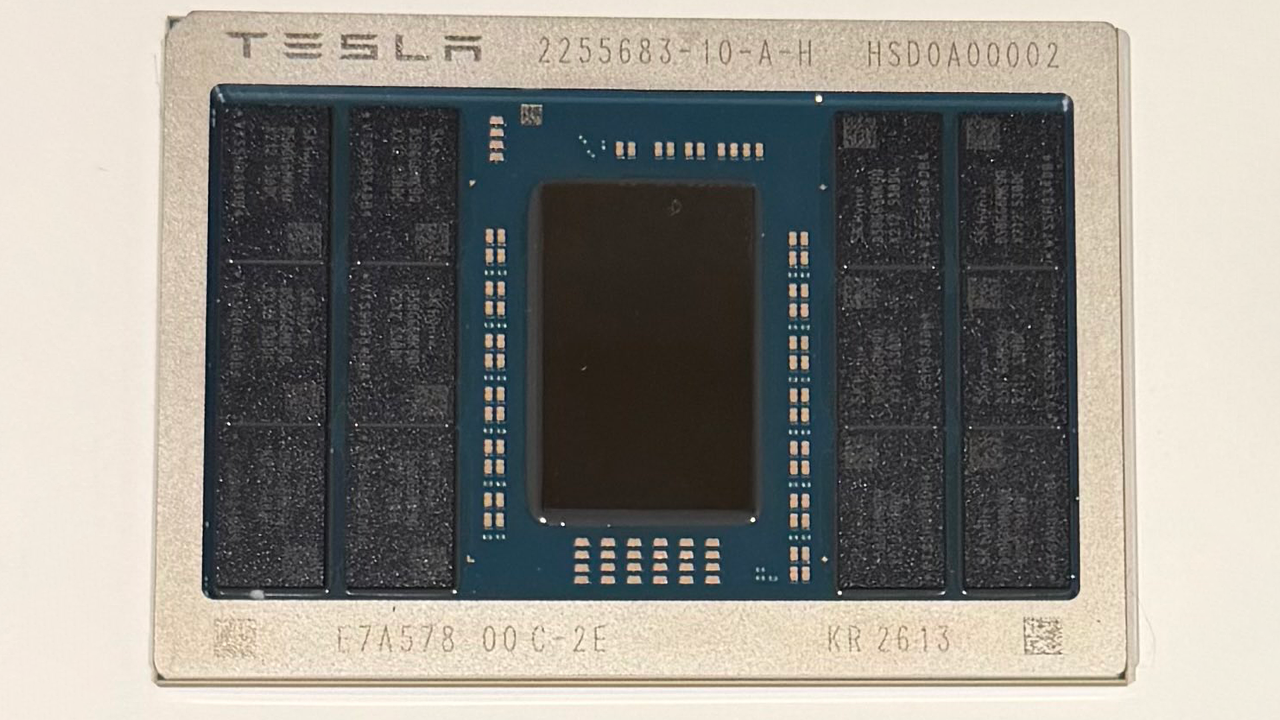

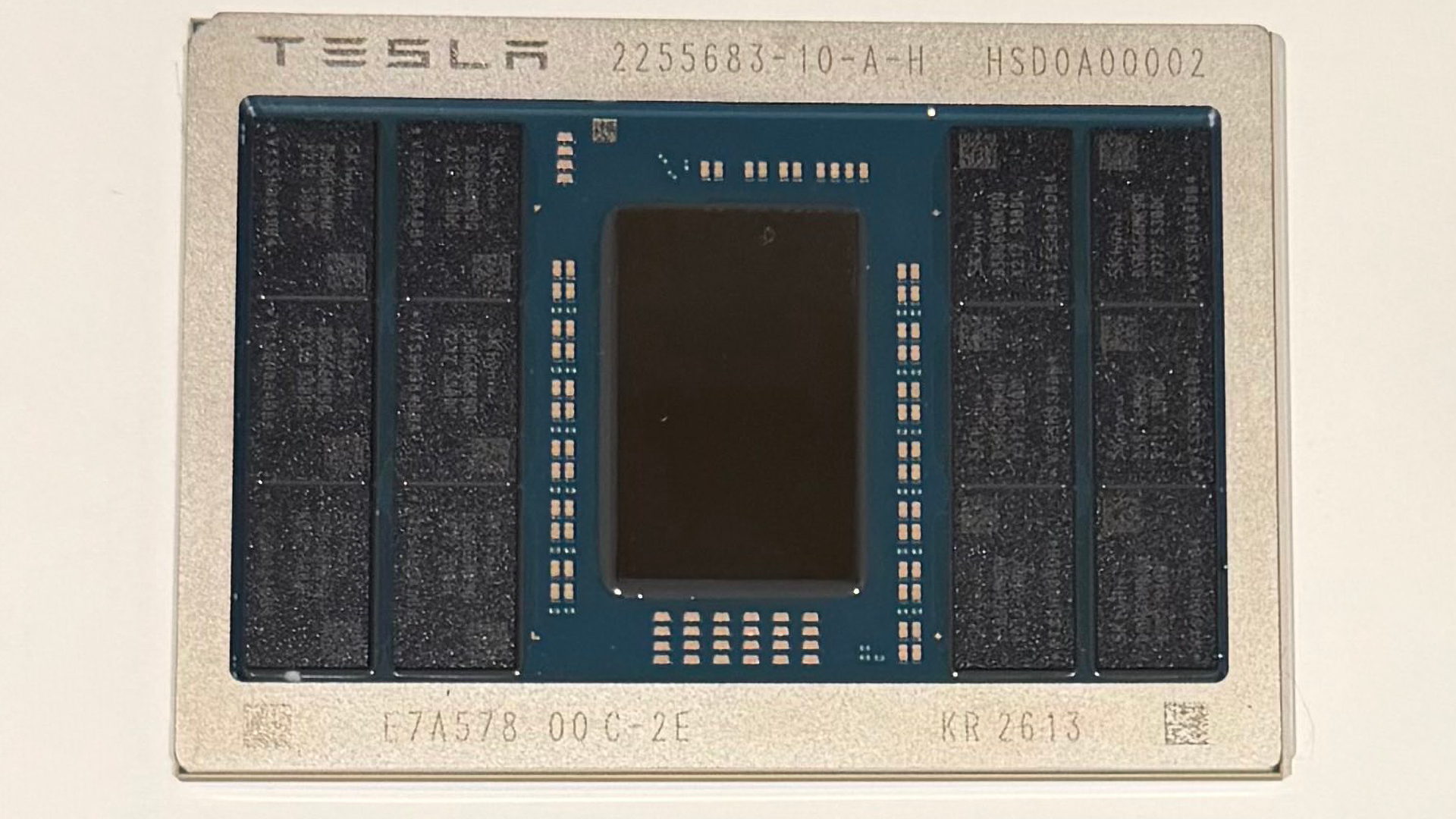

Elon Musk on Wednesday showcased an image of one of the first samples of Tesla's AI5 hardware, which will drive AI applications in Tesla's cars, Optimus robots, and potentially xAI data centers. The AI5 processor is about half the reticle size, uses industry-standard memory, and yet can be up to 40X faster than AI4 in certain scenarios, according to Musk.

"Congrats to the Tesla_AI chip design team on taping out AI5," Elon Musk wrote in an X post. "AI6, Dojo 3 and other exciting chips in [the works]. […] And thank you to @TaiwanSemi_TSC and Samsung for your support in bringing this chip to production! It will be one of most produced AI chips ever."

The Tesla AI5 processor module features a fairly small ASIC die (about half the reticle size, according to Musk's previous comments) surrounded by 12 memory packages from SK hynix (most likely GDDR6/7). The module uses an organic substrate, and the memory packages are marked like industry-standard DRAM products. While we do not know how wide AI5's memory interface is, 12 memory packages clearly indicate a fairly wide memory I/O. If these are indeed 12 GDDR6/7 memory ICs, each with a 32-bit interface, then the Tesla AI5 ASIC has a 384-bit memory interface. Depending on the memory type and data rate used, the Tesla AI5 can offer memory bandwidth between 768 GB/s (at 16 GT/s) and 1,536 GB/s (at 32 GT/s). Exact performance of the AI5 has not been disclosed, though Musk claims significant improvements, up to 40X, over AI4 in select cases.
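The bandwidth range above follows from simple arithmetic, sketched here in Python. The inputs are assumptions rather than disclosed specifications: 12 memory devices, a 32-bit interface per device (typical for GDDR6/7 outside clamshell mode), and per-pin data rates of 16 GT/s and 32 GT/s chosen to bracket the range. The function name is purely illustrative.

```python
def peak_bandwidth_gbs(num_devices: int, bits_per_device: int, gtps_per_pin: float) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    All inputs are assumptions about the AI5 module, not confirmed figures.
    """
    bus_width_bits = num_devices * bits_per_device  # 12 * 32 = 384 bits
    # Each pin moves gtps_per_pin gigabits per second; divide by 8 for bytes.
    return bus_width_bits * gtps_per_pin / 8


# Assumed low end: GDDR6-class memory at 16 GT/s per pin.
low = peak_bandwidth_gbs(12, 32, 16)
# Assumed high end: GDDR7-class memory at 32 GT/s per pin.
high = peak_bandwidth_gbs(12, 32, 32)

print(f"{low:.0f} GB/s to {high:.0f} GB/s")  # 768 GB/s to 1536 GB/s
```

These are theoretical peaks; sustained bandwidth would be lower, and the actual interface width and memory speed remain unconfirmed.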

"I think the Tesla chip team is really designing an incredible chip here: by some metrics the AI5 chip will be 40 times better than the AI4 chip," Elon Musk said during Tesla's Q3 2025 earnings call. "As a result of [outdated hardware] deletions, we can actually fit AI5 [on a] half [of a] reticle with good margin for the traces from the memory to the Tesla trip accelerators, the Arm CPU cores, and PCIe blocks."

Although Musk claims that the AI5 has just been 'taped out' (which means that the final chip design has been sent to a photomask house), he actually shows an already fabricated processor with a 'KR 2613' marking on it, which suggests that the ASIC was packaged in the 13th week of 2026. Musk also thanks Taiwan Semiconductor (TSC) and Samsung for bringing the chip to production, though we doubt that a producer of passive components has anything to do with bringing the AI5 processor to production. More likely, Musk meant Taiwan Semiconductor Manufacturing Co., better known as TSMC.
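To see what calendar window such a marking implies, here is a minimal Python sketch. It assumes the last four digits of 'KR 2613' form a YYWW date code (two-digit year, two-digit week) and that weeks follow ISO 8601 numbering; package date codes do not always follow ISO weeks exactly, so treat the result as approximate. The function name `decode_yyww` is hypothetical.

```python
import datetime


def decode_yyww(marking: str) -> tuple[datetime.date, datetime.date]:
    """Return (Monday, Sunday) of the week encoded in a YYWW date code.

    Assumes a YYWW layout and ISO 8601 week numbering, both unconfirmed
    for this particular package marking.
    """
    code = marking.split()[-1]   # "KR 2613" -> "2613"
    year = 2000 + int(code[:2])  # "26" -> 2026
    week = int(code[2:])         # "13" -> week 13
    monday = datetime.date.fromisocalendar(year, week, 1)
    sunday = datetime.date.fromisocalendar(year, week, 7)
    return monday, sunday


start, end = decode_yyww("KR 2613")
print(start, "to", end)  # 2026-03-23 to 2026-03-29
```

Under those assumptions, the marking points to the last full week of March 2026.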

Previously, the head of Tesla, SpaceX, and xAI said that the AI5 would be made by both TSMC and Samsung Foundry, though we do not know which contract chipmaker fabbed the current sample. Assuming that Tesla got the chip in March or early April and no re-spin is required, it is reasonable to expect the company to deploy the processor sometime in 2027.

Perhaps the most intriguing part of the announcement is that Tesla has apparently not given up on the Dojo system-on-wafer (SoW) processor for AI training, and the Dojo 3 processor is in the works. It was reported last August that the Dojo wafer-level processor initiative had been abandoned and the team behind it dismantled. Indeed, Peter Bannon, the head of the Dojo project at Tesla, retired last August, according to his LinkedIn page. Elon Musk said in July that the AI6 and Dojo 3 could feature a converged architecture (a converged ISA, we would speculate), which would enable the company to unify its software stack and could potentially allow the company to unify its hardware stack as well.

"I think about Dojo 3 and the AI6 as the first [converged architecture designs]," Musk said in a July 23 earnings call (via Investing.com). "It seems like intuitively, we want to try to find convergence there where it is basically the same chip that is used where we use, say, two of them in a car or an Optimus and maybe a larger number on a on a [server] board, a kind of 5 - 12 twelve on a board or something like that. […] That sort of seems like intuitively the sensible way to go."