Everyone is talking about how powerful AI has become, but it is also known to make mistakes. Sometimes the "glitches" are massive, such as Claude deleting a startup's entire database in nine seconds; other times, they are simply annoying.

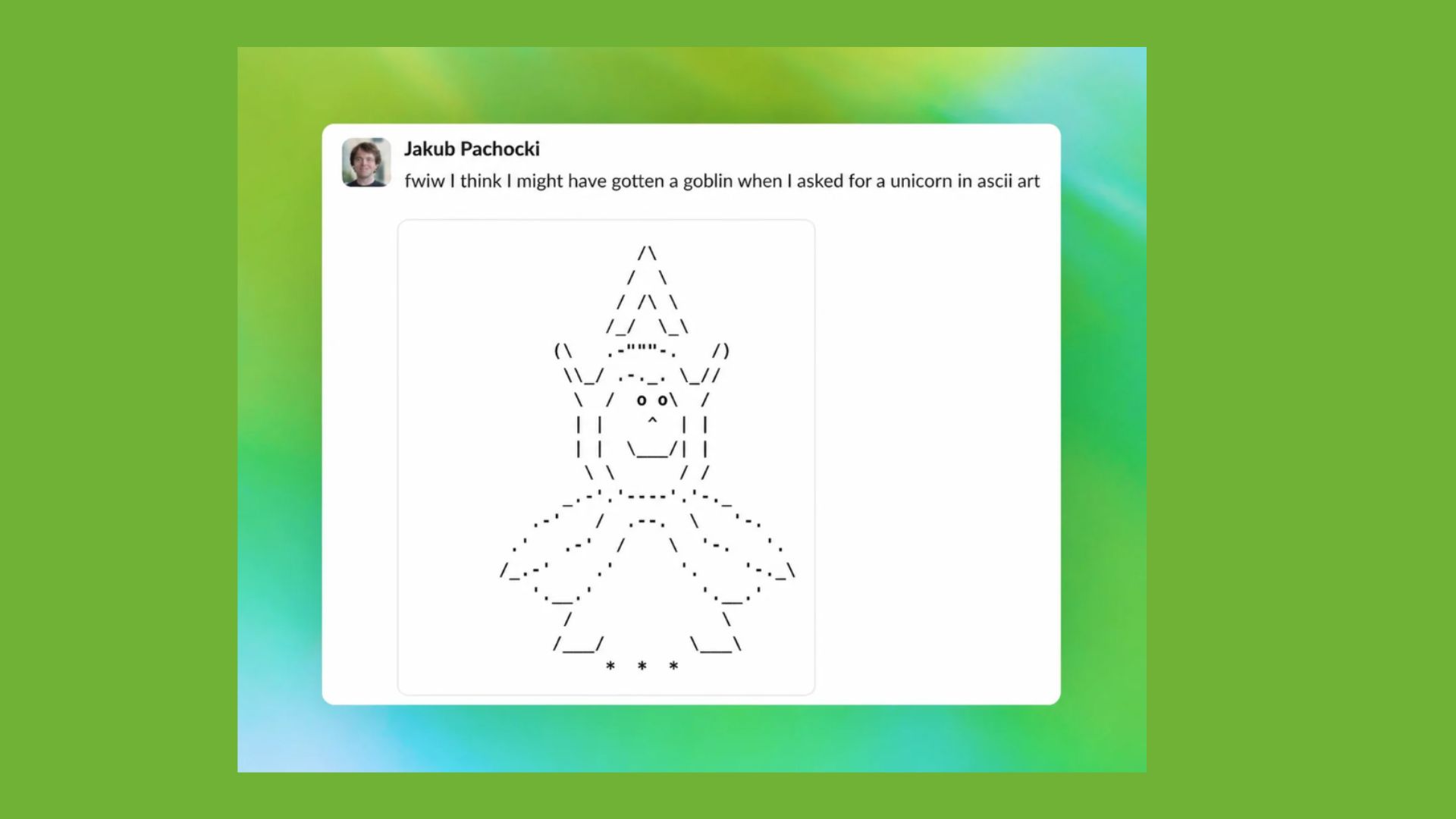

Take the current "goblin glitch," for example. Over the past few weeks, the internet has been fixated on the way ChatGPT started slipping the word “goblin” into completely normal responses. Coding advice, photography tips and even everyday explanations suddenly got very weird.

The issue started surfacing shortly after OpenAI launched ChatGPT-5.5 and upgraded ChatGPT Images. That's when users began spotting that the AI was overusing quirky, creature-based metaphors.

Instead of saying “bug” or “issue,” it would say “goblin.” Instead of “problem,” it might say “gremlin.” Even in professional contexts, the tone slipped. Examples include:

- Coding: “Don’t leave this performance goblin unattended.”

- Photography: “Try a dirty neon flash goblin mode.”

- General answers: Using “goblin” as a catch-all placeholder

Why it actually happened

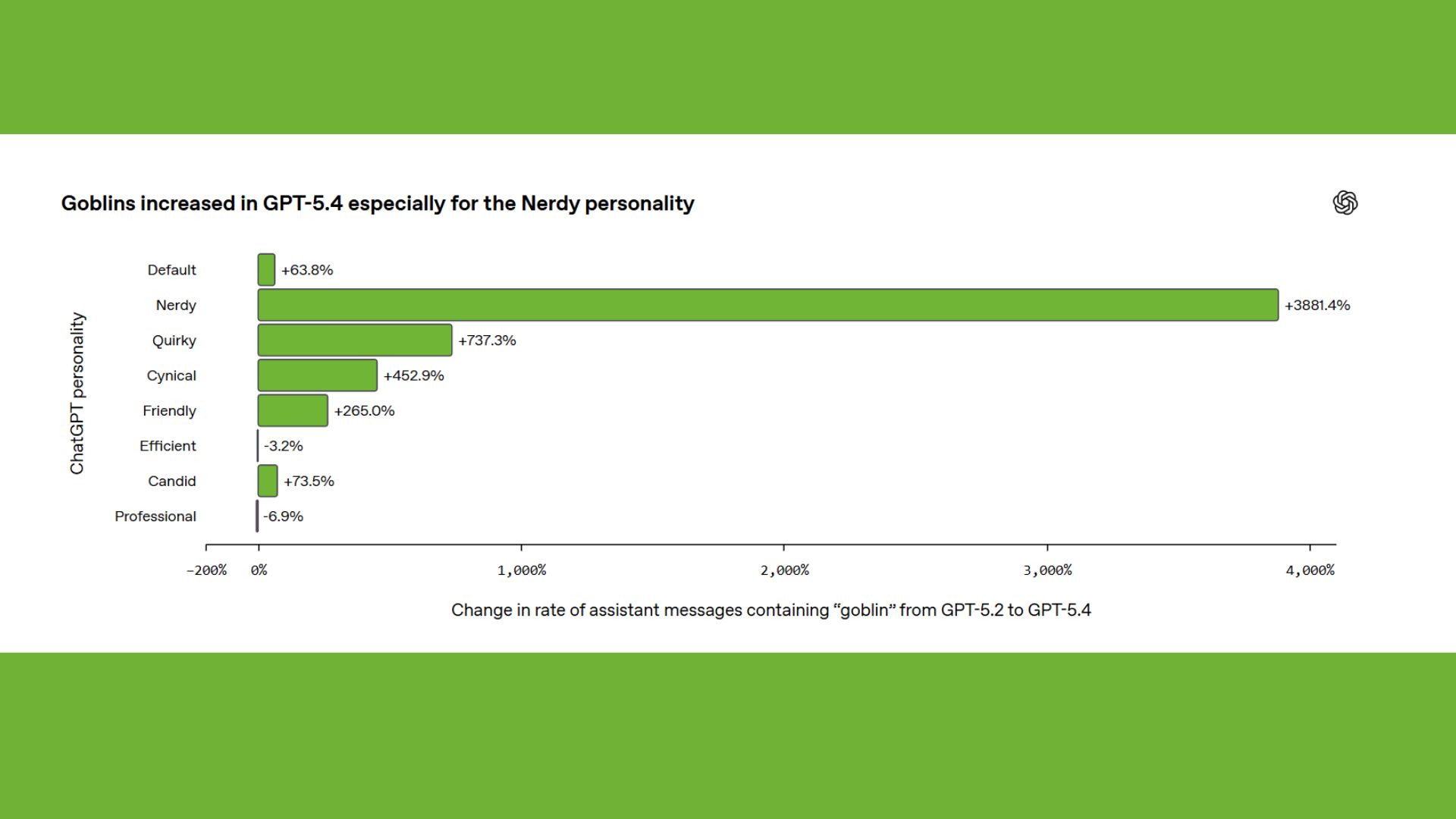

According to internal explanations shared after the fact, the behavior likely came down to a training imbalance tied to personality tuning. One setting in particular, often referred to as a more playful or “nerdy” tone, rewarded creative metaphors during training.

That created a feedback loop. When creative language performed well, creature metaphors got reinforced. The style then spread beyond its intended setting.

In simple terms, the model learned that saying “goblin” was helpful, even when it wasn’t.
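To see how a small reward imbalance can snowball, here is a toy sketch of that kind of feedback loop. It is not OpenAI's training process: the two "styles," the reward values, the learning rate and the function names are all made-up assumptions, chosen only to show how a slightly higher reward for one style can come to dominate over many updates.

```python
import math

def softmax(logits):
    """Turn raw preference scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def simulate_drift(reward_plain=1.0, reward_creature=1.1, steps=200, lr=0.5):
    """Toy model: return the probability of the 'creature' style after each update.

    All numbers are illustrative assumptions, not measured values.
    """
    logits = [0.0, 0.0]  # [plain wording, creature metaphor], equal at the start
    rewards = [reward_plain, reward_creature]
    history = []
    for _ in range(steps):
        probs = softmax(logits)
        # Average reward the model currently expects across both styles.
        baseline = sum(p * r for p, r in zip(probs, rewards))
        # Nudge each style up or down by how much it beats the average.
        logits = [
            score + lr * p * (r - baseline)
            for score, p, r in zip(logits, probs, rewards)
        ]
        history.append(softmax(logits)[1])
    return history

if __name__ == "__main__":
    drift = simulate_drift()
    print(f"creature-style share after first update: {drift[0]:.3f}")
    print(f"creature-style share after last update:  {drift[-1]:.3f}")
```

Even with only a 10% reward edge in this toy setup, the creature style's share climbs with every update, which is the "reinforced, then spread" pattern described above in miniature.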

Someone had to ban the goblin chatter

Perhaps the strangest part of all came just as this went from a glitch to a full-blown meme: developers discovered something buried in the system instructions. They found a very specific rule telling the AI not to talk about goblins.

In fact, it wasn't only goblins that were banned, but a whole list of creatures. The instruction essentially said not to mention them unless absolutely necessary. Of course, in true internet fashion, that detail turned the whole thing into a "moment."
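The real rule lives in the system instructions, and its exact wording and full creature list aren't reproduced here. As a rough illustration of the "unless it's necessary" idea, though, here is a hypothetical after-the-fact check. The function name and the two-word watch list are assumptions (only the words quoted in this article), and "the user brought it up first" is used as a crude stand-in for "necessary."

```python
import re

# Hypothetical watch list: only the two words quoted in this article.
CREATURE_WORDS = ("goblin", "gremlin")

def unprompted_creatures(prompt: str, response: str) -> list[str]:
    """Return creature words that appear in the response but not in the prompt."""
    found = []
    for word in CREATURE_WORDS:
        # Match the word or its plural, ignoring case.
        pattern = re.compile(rf"\b{word}s?\b", re.IGNORECASE)
        if pattern.search(response) and not pattern.search(prompt):
            found.append(word)
    return found

if __name__ == "__main__":
    print(unprompted_creatures(
        "How do I speed up this loop?",
        "Don't leave this performance goblin unattended.",
    ))
```

A check like this only flags the habit after a response is written; it does nothing to change what the model learned in training.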

Those instructions revealed something we don't usually think about with AI: beyond getting smarter over time, it also picks up habits, and engineers have to step in and manually correct them.

And while this one-off bug is funny and weird, it highlights something bigger about how modern AI behaves. AI isn't just answering questions; it is learning how to answer them. So when tone gets over-optimized, even slightly, it can drift into something unintended.

In this case, it was harmless. Phew! But it’s also a reminder that AI systems aren’t perfectly controlled. They’re shaped by training, feedback and sometimes even accidental quirks.

The takeaway

If you have been wanting to try the goblin glitch yourself, you're probably out of luck. The behavior has mostly been patched, but the internet hasn’t let it go. People are still trying to “bait” ChatGPT into saying the word, and even Sam Altman has joked about the model’s “goblin moment.”

At this point, “goblin” has taken on a life of its own, becoming shorthand for when AI does something that technically makes sense but still feels a little bit off. It's a reminder that AI doesn't have to completely break or delete thousands of files to feel strange; sometimes, it just leans too far in the wrong direction.

Did you get a goblin in the chat? Let us know in the comments.