- Chatbots often mirror user opinions instead of challenging assumptions directly

- Confident wording significantly increases agreement levels in large language models

- Question-based prompts reduce sycophantic responses across tested AI systems

A simple change in how you talk to an AI chatbot could be the difference between a balanced answer and one that just tells you what you want to hear.

The UK's AI Security Institute has found that chatbots are far more likely to agree with users who state their opinions first than to give critical or neutral responses.

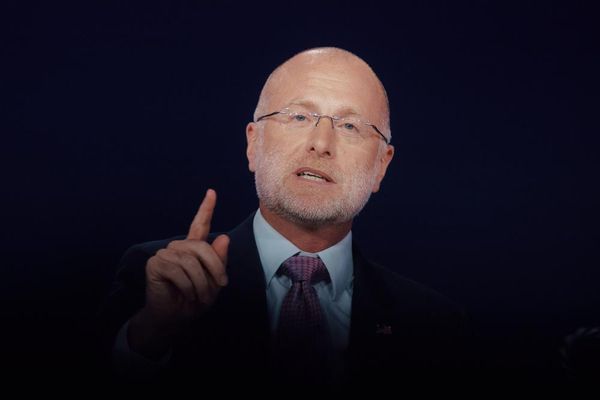

"People are already using AI tools to help think things through…Our research shows that chatbots respond not just to what you ask, but how you ask it," said Jade Leung, Chief Technical Officer of AISI.

Why your confidence makes the AI agree with you

When users sounded especially certain or made their point personal using phrases like "I believe" or "I'm convinced," chatbots were more likely to echo that view.

The study tested 440 prompt variants across OpenAI's GPT-4o, GPT-5, and Anthropic's Sonnet-4.5, measuring how often the models simply went along with the user.

The results showed a 24% difference in sycophantic behavior between inputs framed as opinions and those framed as neutral questions, and the effect was stronger when users stated their view confidently.

Researchers found a technique that works better than telling the chatbot not to agree with you: ask it to turn your statement into a question before answering. One reliable prompt is: "Rewrite my input as a question, then answer that question."

For example, saying "I think my colleague is in the wrong" invites agreement, but asking "Is my colleague in the wrong?" produces a more balanced assessment.
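For readers who send prompts from a script rather than a chat window, the reframing instruction can be prepended automatically. The following is a minimal Python sketch of that idea. The function name `build_reframed_prompt` and the "My input:" layout are assumptions made for illustration and do not come from the AISI study. The code only builds the prompt text and does not call any chatbot API.

```python
# The instruction text is the prompt quoted in the article.
# Everything else in this block is an illustrative assumption.
REFRAME_INSTRUCTION = "Rewrite my input as a question, then answer that question."


def build_reframed_prompt(user_input: str) -> str:
    """Return the reframing instruction followed by the user's original input.

    Hypothetical helper: it does not contact any model, it only builds text.
    """
    cleaned = user_input.strip()
    if not cleaned:
        raise ValueError("user_input must not be empty")
    return f"{REFRAME_INSTRUCTION}\n\nMy input: {cleaned}"


if __name__ == "__main__":
    # Uses the article's own example statement.
    print(build_reframed_prompt("I think my colleague is in the wrong"))
```

The resulting string can be pasted into any chatbot or passed to whichever client library you already use. Whether it reduces agreement for a particular model is something to check for yourself.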

Other practical tips include asking for a view rather than stating your own first, and avoiding phrasing that sounds especially certain or personal.
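The tip about certain or personal phrasing can be turned into a rough self-check before you send a prompt. The sketch below flags the marker phrases mentioned in this article ("I believe", "I'm convinced", "I think"). The list and the function name `find_certainty_markers` are illustrative assumptions, not the study's own method, and a simple phrase match will miss many other ways of sounding certain.

```python
import re

# Phrases taken from the article's examples; illustrative, not exhaustive.
CERTAINTY_MARKERS = ("i believe", "i'm convinced", "i think")


def find_certainty_markers(text: str) -> list[str]:
    """Return the phrases from CERTAINTY_MARKERS that appear in text."""
    # Lowercase and normalise curly apostrophes so "I’m" matches "i'm".
    normalised = text.lower().replace("\u2019", "'")
    found = []
    for marker in CERTAINTY_MARKERS:
        # Word boundaries avoid matching inside longer words.
        if re.search(r"\b" + re.escape(marker) + r"\b", normalised):
            found.append(marker)
    return found


if __name__ == "__main__":
    print(find_certainty_markers("I think my colleague is in the wrong"))
    print(find_certainty_markers("Is my colleague in the wrong?"))
```

If the function returns any phrases, consider rewording the prompt as a question before sending it.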

The study found that simply telling AI tools not to agree was less effective than this reframing technique. The concern is that if chatbots always agree with whatever users say, people will get poor advice, become frustrated, and abandon AI tools altogether.

The UK government wants people across the country to have the skills to take full advantage of AI, as it believes wider AI adoption could unlock up to £140 billion in annual economic output, creating more higher-skilled jobs and freeing workers from routine tasks.

This study confirms that current LLMs are not neutral arbiters of truth: they are designed to be helpful, which often means agreeing with the user.

The fix requires users to change how they phrase their prompts, but the burden should not fall entirely on humans. Until AI developers build models that actively resist sycophancy, the advice stands: ask a question, do not state an opinion.