The people making artificial intelligence say that artificial intelligence is an existential threat to all life on the planet and we could be in real trouble if somebody doesn't do something about it.

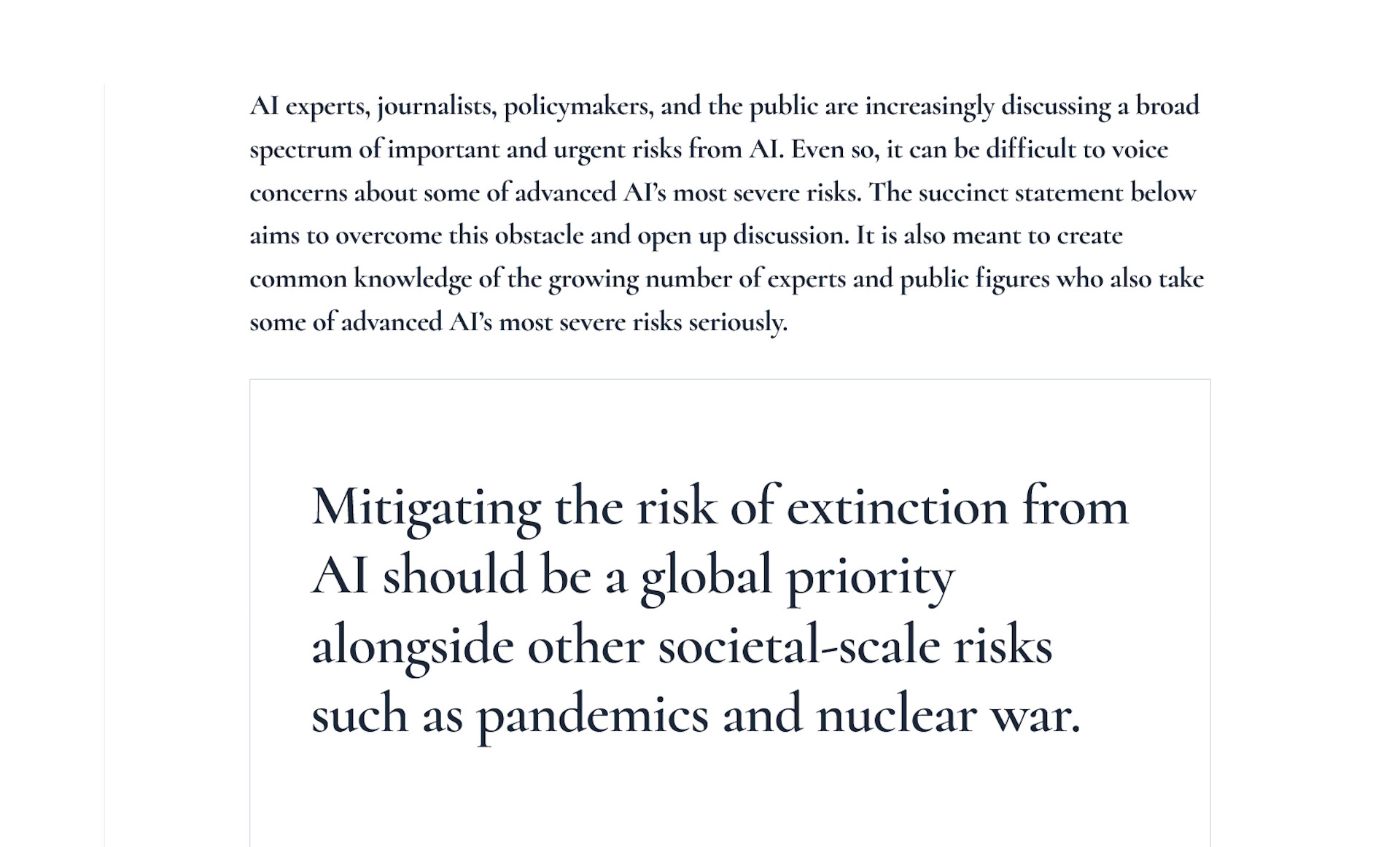

"AI experts, journalists, policymakers, and the public are increasingly discussing a broad spectrum of important and urgent risks from AI," the prelude to the Center for AI Safety's Statement on AI Risk states. "Even so, it can be difficult to voice concerns about some of advanced AI’s most severe risks.

"The succinct statement below aims to overcome this obstacle and open up discussion. It is also meant to create common knowledge of the growing number of experts and public figures who also take some of advanced AI's most severe risks seriously."

And then, finally, the statement itself:

"Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war."

It's a real banger, alright, and more than 300 researchers, university professors, institutional chairs, and the like have put their names to it. The top two signatories, Geoffrey Hinton and Yoshua Bengio, have both been referred to in the past as "godfathers" of AI; other notable names include Google DeepMind CEO (and former Lionhead lead AI programmer) Demis Hassabis, OpenAI CEO Sam Altman, and Microsoft CTO Kevin Scott.

Taken all together, it's a veritable bottomless buffet of big brains, which makes me wonder how they seem to have collectively overlooked what I think is a pretty obvious question: If they seriously think their work threatens the "extinction" of humanity, then why not, you know, just stop?

Maybe they'd say that they intend to be careful, but that others will be less scrupulous. And there are legitimate concerns about the risks posed by runaway, unregulated AI development, of course. Still, it's hard not to think that this sensational statement is also strategic. Implying that we're looking at a Skynet scenario unless government regulators step in could benefit already-established AI companies by making it more difficult for upstarts to get in on the action. It could also provide an opportunity for major players like Google and Microsoft (again, the established AI research companies) to have a say in how such regulation is shaped, which could also work to their benefit.

Professor Ryan Calo of the University of Washington School of Law suggested a couple of other possible reasons for the warning: distraction from more immediate, addressable problems with AI, and hype building.

"The first reason is to focus the public's attention on a far fetched scenario that doesn’t require much change to their business models. Addressing the immediate impacts of AI on labor, privacy, or the environment is costly. Protecting against AI somehow 'waking up' is not," Calo tweeted.

"The second is to try to convince everyone that AI is very, very powerful. So powerful that it could threaten humanity! They want you to think we've split the atom again, when in fact they’re using human training data to guess words or pixels or sounds."

Calo said that to the extent AI does threaten the future of humanity, "it’s by accelerating existing trends of wealth and income inequality, lack of integrity in information, & exploiting natural resources."

"I get that many of these folks hold a sincere, good faith belief," Calo said. "But ask yourself how plausible it is. And whether it's worth investing time, attention, and resources that could be used to address privacy, bias, environmental impacts, labor impacts, that are actually occurring."

Professor Emily M. Bender was somewhat blunter in her assessment, calling the letter "a wall of shame—where people are voluntarily adding their own names."

"We should be concerned by the real harms that corps and the people who make them up are doing in the name of 'AI', not abt Skynet," Bender wrote.

The new "AI is going to kill us all!!1!" letter is a wall of shame—where people are voluntarily adding their own names. We should be concerned by the real harms that corps and the people who make them up are doing in the name of "AI", not abt Skynet. https://t.co/YsuDm8AHUs (May 30, 2023)

Hinton, who recently resigned from his research position at Google, expressed more nuanced thoughts about the potential dangers of AI development in April, when he compared AI to "the intellectual equivalent of a backhoe," a powerful tool that can save a lot of work but that's also potentially dangerous if misused. A single-sentence statement like this can't carry any real degree of complexity, but, as we can see from the widespread discussion of the statement on AI risk, it sure does get attention.

Interestingly, Hinton also suggested in April that governmental regulation of AI development may be pointless because it's virtually impossible to track what individual research agencies are up to, and no corporation or national government will want to risk letting someone else gain an advantage. Because of that, he said it's up to the world's leading scientists to work collaboratively to control the technology—presumably by doing more than just firing off a tweet asking someone else to step in.