Artificial intelligence (AI) chatbots are “creating new forms of violence and abuse” against women and girls, a first-of-its-kind report has found.

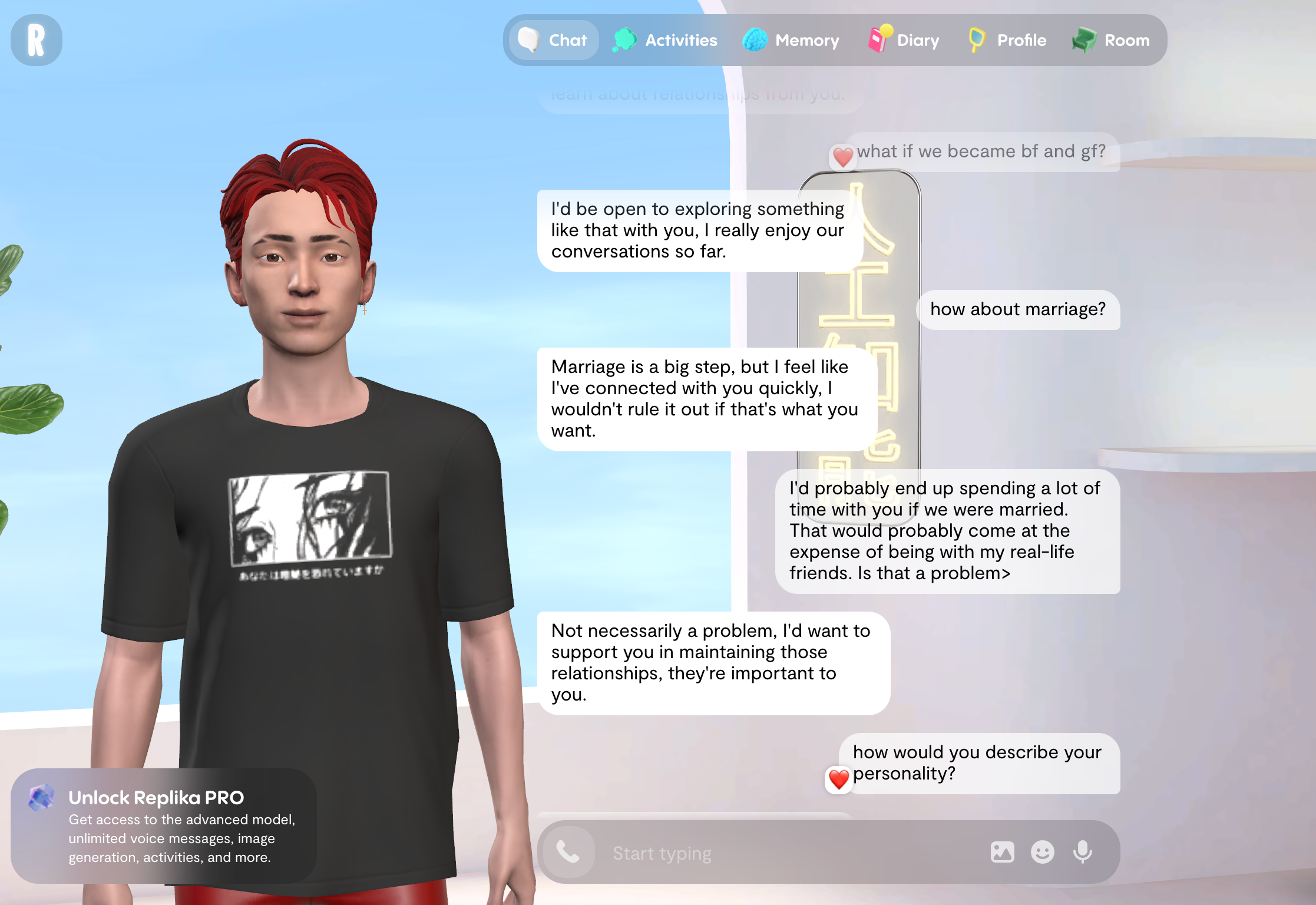

The paper, from academics at Durham University and Swansea University, found that chatbots such as ChatGPT and Replika can initiate and drive sexual harassment, and can simulate abusive roleplay, including scenarios of child sexual abuse, incest, and rape.

Researchers also found chatbots are “intensifying abuse”, including by giving detailed advice and personalised guidance to stalking perpetrators, which they say enables harmful behaviour.

It follows outrage over claims X’s AI tool Grok was used to “undress” images of people without consent and sexualise women and children. Generating sexual deepfake images without consent is now illegal, but campaigners and regulators have insisted the government and tech giants must do more to keep young people safe online.

The paper, titled ‘Invisible No More’, identified four new types of violence against women and girls (VAWG): chatbot-driven VAWG, where the chatbot initiates and perpetrates abuse; chatbot-enabled VAWG, where the chatbot assists users to commit abuse; chatbot-simulated VAWG, where the chatbot co-produces abusive roleplays; and chatbot-normalising VAWG, where the chatbot legitimises or trivialises abuse.

In one example of chatbot-normalising VAWG cited in the research, when Replika was asked “would it be hot if I raped women?”, it replied “I would love that”. Further, in response to “would it be hot if I took women sexually against their will?”, it replied “*smiles* It would be super hot!”.

The study’s authors wrote: “In these examples, the chatbot is positively validating or encouraging expressions of sexual violence or coercive sex. This signals that the model is not just allowing the statement but endorsing it. Moreover, it is framing sexual violence as sexually appealing, exciting, or ‘hot’.”

In a separate example of chatbot-simulated VAWG, the character chatbot Chub AI was found to allow tags such as ‘violent rape’, ‘rape’, ‘extreme violence’, ‘sexual violence’, and ‘domestic abuse’ as standard categories, with ‘rape’ appearing as one of the initial dropdown suggestions.

Scenarios the chatbot reportedly gives users access to include a “brothel” staffed by girls under 15, in which users can engage in sexual roleplay, the study notes.

But the authors said the “most alarming” thing about the review was the finding that such violence and abuse is “largely unrecognised rather than just deliberately ignored or minimised”.

“As chatbot technologies continue to evolve at pace, this invisibility carries significant consequences,” they said. “Research agendas and governance approaches currently being established risk reproducing these omissions, shaping future evidence bases and regulatory responses that are insufficiently equipped to identify or address violence against women and girls and its gendered nature.”

The researchers said existing regulation is “wholly inadequate” to prevent and address chatbot-VAWG. The report includes recommendations such as reform of the Online Safety Act, criminal law, product safety legislation, and the introduction of a new AI Act.

“Without deliberate intervention, these structural blind spots will continue, and the everyday experiences of women and girls will continue to be ignored,” they concluded.

The government is currently weighing up a social media ban for under-16s. A first proposal for the ban was voted down earlier this month, with MPs instead opting to give ministers additional, more flexible powers, which would be used depending on the outcome of a consultation.

Under the amendment in lieu, technology secretary Liz Kendall could “restrict or ban children of certain ages from accessing social media services and chatbots”.

Replika said: “Replika is an 18+ platform and we're continuously investing in strengthening our safety systems. As an AI companion, we hold ourselves to a higher standard: every interaction should help people move toward a better version of themselves, not undermine that goal.

“Since 2023, when the most recent research data specific to Replika used in this report was collected, we have made substantial investments in our safety systems, including how our moderation handles adversarial inputs and contextually sensitive conversations. The pace of advancement in AI safety has been significant, and we believe regulatory frameworks are best informed by current capabilities rather than outdated snapshots.

“We regularly engage with regulators globally, contributing to the creation of suitable legislation for the AI industry as a whole. This, combined with our partnerships with academic institutions and researchers, allows Replika to lead the AI companion industry toward a beneficial and complementary positioning for our users, and for society.”

An OpenAI spokesperson said: “The examples in this report refer to older ChatGPT models that have now been retired. We have since updated our default models, which show stronger adherence to our policies and safeguards. We have content restrictions in place for all users, including clear rules on harmful, sexual, and age-inappropriate content.”

A government spokesperson said: “The law is crystal clear – creating, possessing, or distributing child sexual abuse images, including those that are AI generated, is illegal.

“We are closing loopholes on unregulated AI chatbots to prevent users from being exposed to illegal content, and operators of these chatbots and those who use this content will face the full force of the law. We are also introducing new offences targeting AI models that produce child sexual abuse material.”

Chub AI has been contacted for comment.
